Best Hardware for AI Image Generation and Stable Diffusion

Artificial Intelligence (AI) has revolutionized the field of image generation, enabling the creation of stunning visuals and realistic simulations. However, achieving these results demands robust hardware capable of handling immense computational loads. In this guide, we'll discuss the top hardware options for AI image generation workloads such as Stable Diffusion.

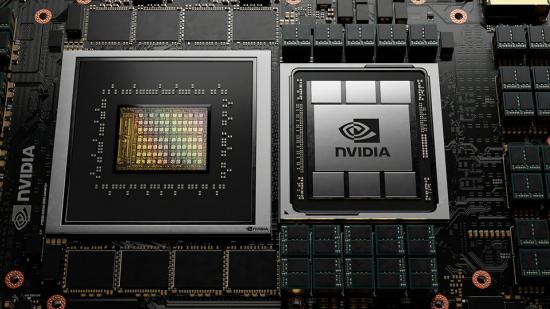

Graphics Processing Units (GPUs)

GPUs are fundamental components for AI image generation due to their parallel processing capabilities, making them essential for neural network training and inference.

GPUs are designed with a large number of cores that can handle multiple tasks simultaneously. This parallel processing power is essential for the complex calculations and operations required in AI, particularly in image generation tasks which often involve processing large amounts of data concurrently.

Neural Network Training and Inference:

Neural networks, a fundamental component of AI image generation, require significant computational power for both training and inference. GPUs speed up training by processing batches of data in parallel, significantly reducing the time required to train deep learning models. The same parallelism shortens inference, the step in which a trained model actually produces an image.

Tensor Operations and Tensor Cores:

Modern GPUs are equipped with dedicated hardware for tensor operations, which are fundamental to deep learning. Tensor Cores, the specialized units found in recent NVIDIA GPUs, accelerate the matrix multiplications that underlie convolutions and other neural network operations, typically using reduced-precision formats such as FP16. This enhances the overall performance of AI image generation tasks.

Complex Calculations for Image Generation:

AI image generation involves intricate mathematical computations, including convolutions, activations, and matrix multiplications. GPUs are highly efficient in handling these computations due to their architecture optimized for such mathematical operations, resulting in faster and smoother image generation.
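
To give a sense of scale for the computations described above, here is a minimal sketch that counts the floating-point operations (FLOPs) in a matrix multiplication and a 2D convolution. The function names and the layer dimensions are illustrative assumptions, not figures taken from any particular model.

```python
def matmul_flops(m: int, k: int, n: int) -> int:
    """FLOPs for an (m x k) @ (k x n) product: one multiply and one add per term."""
    return 2 * m * k * n


def conv2d_flops(c_in: int, c_out: int, kernel: int, h_out: int, w_out: int) -> int:
    """FLOPs for a square-kernel 2D convolution, ignoring bias."""
    # Each output value is a dot product over c_in * kernel * kernel inputs.
    per_output = 2 * c_in * kernel * kernel
    return per_output * c_out * h_out * w_out


# Hypothetical layer: 3x3 convolution, 320 -> 320 channels, 64x64 output map.
layer_flops = conv2d_flops(c_in=320, c_out=320, kernel=3, h_out=64, w_out=64)
print(f"{layer_flops / 1e9:.2f} GFLOPs for one layer and one image")
```

A single layer of this size already needs several billion operations per image, and a generation run repeats many such layers over many steps, which is why hardware built for parallel arithmetic matters.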

Real-Time Processing:

GPUs can process vast amounts of image data in real time, making them crucial for applications that require immediate responses, such as video processing, real-time image generation, and interactive graphics. This capability is vital in AI applications where quick image generation is needed.

High Memory Bandwidth:

GPUs are designed with high memory bandwidth, allowing for rapid access to data and facilitating efficient data movement during image generation. This is particularly important for AI applications dealing with large datasets and complex models.
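
A rough way to see why bandwidth matters is to estimate the minimum time needed just to read a model's weights from GPU memory once. The sketch below is back-of-the-envelope arithmetic; the weight size and bandwidth figures are assumed values for illustration, not measurements of a specific card.

```python
def min_read_time_ms(num_bytes: float, bandwidth_gb_per_s: float) -> float:
    """Lower bound, in milliseconds, on streaming num_bytes once at the given bandwidth."""
    seconds = num_bytes / (bandwidth_gb_per_s * 1e9)
    return seconds * 1e3


# Assumed values: 2 GB of weights, compared across two hypothetical bandwidths.
weights_bytes = 2e9
for bandwidth in (300, 900):  # GB/s, illustrative only
    print(f"{bandwidth} GB/s -> at least {min_read_time_ms(weights_bytes, bandwidth):.2f} ms per pass")
```

Because the weights are read again on every step of an iterative generation process, this lower bound is paid many times per image.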

Optimized AI Frameworks and Libraries:

Major GPU manufacturers such as NVIDIA and AMD work with AI framework developers so that popular frameworks such as TensorFlow and PyTorch run efficiently on their hardware, through compute platforms such as NVIDIA's CUDA and AMD's ROCm. These optimizations ensure seamless integration and efficient utilization of GPU resources for AI image generation.

Scalability and Flexibility:

GPUs offer scalability, enabling the creation of high-performance computing clusters for AI applications. Moreover, they come in a range of models with varying capabilities, allowing for flexibility in choosing the right GPU based on the specific requirements of the AI image generation project.

In summary, GPUs are instrumental in AI image generation due to their parallel processing power, specialized hardware for tensor operations, efficient handling of complex calculations, real-time processing capabilities, high memory bandwidth, and strong support in optimized AI frameworks. They significantly increase the speed of AI image generation, making them an integral component of modern AI systems.

Here are some of the best Graphics Processing Units (GPUs) for AI image generation and Stable Diffusion:

NVIDIA GeForce RTX Series:

Renowned for their exceptional performance and dedicated Tensor Cores, these GPUs significantly accelerate AI workloads and image generation tasks.

NVIDIA Quadro Series:

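Designed for professional use, Quadro GPUs offer enhanced stability, large memory capacities, and optimized drivers, making them well suited to demanding AI image generation projects. NVIDIA has since retired the Quadro name, and its newer professional cards are sold under the NVIDIA RTX brand.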

NVIDIA Tesla V100:

This data center GPU provides immense processing power and memory bandwidth, making it well suited to large-scale AI image generation and diffusion tasks.

_____

Central Processing Units (CPUs)

CPUs play a crucial role in handling various pre-processing and post-processing tasks, including data augmentation, feature extraction, and more.

CPUs are the core processing units of a computer and are designed to handle a wide range of general-purpose tasks. In the context of AI image generation, CPUs play a vital role in managing overall system operations, coordinating data flow, and executing various instructions.

Pre-processing and Post-processing:

CPUs are instrumental in pre-processing tasks such as data augmentation, image normalization, and feature extraction. After AI image generation, CPUs handle post-processing tasks like image resizing, compression, and converting generated data into usable formats.
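
As a small illustration of the CPU-side work described above, the sketch below normalizes 8-bit pixel values into a floating-point range before inference and converts model output back to 8-bit values afterwards. The target range of -1 to 1 is an assumption chosen for the example; the range a given model expects should be checked in its own documentation.

```python
def normalize_pixels(pixels, low=-1.0, high=1.0):
    """Map 8-bit values (0..255) linearly onto [low, high]."""
    scale = (high - low) / 255.0
    return [low + p * scale for p in pixels]


def denormalize_pixels(values, low=-1.0, high=1.0):
    """Map values in [low, high] back to clamped 8-bit integers."""
    scale = 255.0 / (high - low)
    return [max(0, min(255, round((v - low) * scale))) for v in values]


row = [0, 128, 255]                    # one tiny row of grayscale pixels
model_input = normalize_pixels(row)    # pre-processing step
restored = denormalize_pixels(model_input)  # post-processing step
print(model_input, restored)
```

Real pipelines usually do this with an array library rather than Python lists, but the arithmetic is the same.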

Control and Decision Making:

CPUs manage control flow within AI systems. They run the program logic that directs data between components and handle tasks such as selecting models, adjusting hyperparameters, and sequencing the stages of the image generation process.

Complex Algorithms and Sequential Tasks:

While GPUs excel in parallel processing, CPUs are proficient in executing complex algorithms and sequential tasks. In AI image generation, certain algorithms and processes may be more efficiently handled by the CPU due to its ability to manage complex logic and decision-making.

Resource Allocation and Task Scheduling:

CPUs play a critical role in managing system resources and scheduling tasks. They allocate resources to different components of the AI image generation system, ensuring optimal usage of available computational power and memory.

Integration and Connectivity:

CPUs facilitate the integration of various hardware components within a system, ensuring smooth communication and operation between the GPU, memory, storage, and other peripherals. They handle input/output operations, which are crucial in AI image generation for reading and writing data.

Multi-Core Performance:

Modern CPUs often come with multiple cores, enabling parallel execution of tasks. This multi-core architecture allows CPUs to handle several threads simultaneously, enhancing the overall performance and efficiency, especially in multi-threaded AI image generation applications.
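
The pattern of spreading per-image work across workers can be sketched with Python's standard `concurrent.futures` module. This example uses a thread pool so that it runs unchanged in any environment; for CPU-bound pure-Python work, `ProcessPoolExecutor` offers the same `map` interface and is the variant that actually uses multiple cores. The grayscale conversion is a hypothetical stand-in for real pre-processing.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def to_grayscale(pixel):
    """Average the three channels of one (r, g, b) pixel."""
    r, g, b = pixel
    return (r + g + b) // 3


def process_image(image):
    """Hypothetical per-image step: convert every pixel to grayscale."""
    return [to_grayscale(p) for p in image]


def process_all(images, workers=None):
    """Run process_image over all images with one worker per available core."""
    workers = workers or os.cpu_count() or 1
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(process_image, images))  # map preserves input order


images = [[(3, 6, 9)], [(0, 0, 0), (255, 255, 255)]]
print(process_all(images))
```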

Compatibility and Versatility:

CPUs are highly compatible with a wide range of software and applications. This versatility ensures that AI image generation frameworks and libraries can run seamlessly on CPU-based systems, providing a broad base for AI development and deployment.

In summary, CPUs are fundamental in managing general-purpose processing, pre-processing, post-processing, control, decision-making, complex algorithms, resource allocation, task scheduling, integration, and connectivity in AI image generation. While GPUs excel in parallel processing, CPUs complement them by efficiently handling tasks that require sequential processing and complex decision-making capabilities. Both components are vital in creating a balanced and efficient AI image generation system.

Best CPUs for AI & Machine Learning

Intel Xeon Scalable Series:

These CPUs offer robust performance and scalability, making them suitable for processing heavy workloads involved in AI image generation.

AMD Ryzen Threadripper Series:

Known for their multi-core performance and affordability, these CPUs are an excellent choice for AI image generation, especially when a balance between performance and cost is desired.

_____

Memory (RAM)

Adequate RAM is essential to ensure smooth processing and efficient handling of large datasets during AI image generation.

64 GB to 128 GB of DDR4 RAM: Having sufficient RAM allows for faster access to data, reducing processing time and enhancing the overall efficiency of the image generation process.

Data Access Speed:

RAM (Random Access Memory) provides high-speed access to data for the CPU and holds the data that is staged for transfer to the GPU, which works out of its own video memory (VRAM). In AI image generation, quick access to the required data is crucial for efficient processing, making RAM an essential component.

Temporary Storage of Data:

AI image generation often involves manipulating large datasets, model parameters, and intermediate results. RAM serves as a temporary storage space for this data, allowing for swift retrieval and modification, which is critical for real-time image generation.

Storing Model Parameters and Intermediate Results:

During the training and inference phases of AI image generation models, model parameters and intermediate results need to be stored and accessed frequently. RAM provides a high-speed storage solution for these elements, facilitating faster computation and optimization of the model.
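
How much memory the parameters alone occupy follows directly from the parameter count and the numeric precision. The sketch below shows that arithmetic; the one-billion-parameter model is a hypothetical round number, not the size of any specific model.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def param_memory_gib(num_params: int, dtype: str = "fp16") -> float:
    """Memory for the weights alone, in GiB (excludes activations and optimizer state)."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


hypothetical_params = 1_000_000_000  # assumed model size for illustration
for dtype in BYTES_PER_PARAM:
    print(f"{dtype}: {param_memory_gib(hypothetical_params, dtype):.2f} GiB")
```

Training needs considerably more than this figure, since gradients and optimizer state are stored alongside the weights.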

Batch Processing and Parallelism:

AI image generation commonly involves batch processing, where multiple images or samples are processed simultaneously. RAM plays a significant role in storing and managing these batches, enabling efficient parallel processing across the AI model.
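
The memory a batch occupies grows linearly with batch size and with image area, as this short sketch shows. The image dimensions and memory budget are assumed values, and the estimate covers only the raw image tensors, not a model's intermediate activations.

```python
def batch_bytes(batch_size, channels, height, width, bytes_per_value=4):
    """Bytes needed to hold one batch of images as a dense tensor."""
    return batch_size * channels * height * width * bytes_per_value


def max_batch_size(budget_bytes, channels, height, width, bytes_per_value=4):
    """Largest batch of such images that fits within budget_bytes."""
    return budget_bytes // batch_bytes(1, channels, height, width, bytes_per_value)


# Assumed example: eight 512x512 RGB images stored as 32-bit floats.
size = batch_bytes(8, 3, 512, 512)
print(f"{size / 2**20:.0f} MiB for the batch")
print(max_batch_size(2**30, 3, 512, 512), "images fit in a 1 GiB budget")
```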

Efficient Algorithm Execution:

Many AI algorithms, including those used in image generation, require rapid access to data structures like matrices and tensors. RAM allows for efficient storage and retrieval of these data structures, optimizing the execution of algorithms and improving the overall performance of the image generation process.

Optimized Training and Inference:

During the training and inference phases, AI models iteratively access and update parameters based on the input data. RAM's high-speed access facilitates these frequent updates, aiding in faster convergence during training and efficient inference during image generation.

Supporting Complex Model Architectures:

Modern AI models for image generation, such as Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), and diffusion models like Stable Diffusion, often have intricate architectures and numerous layers. RAM provides the necessary memory to store and manage these complex structures, enabling the effective training and operation of the models.

Large Memory Configurations for Big Data:

For AI applications dealing with enormous datasets, having a significant amount of RAM is essential. Large memory configurations allow for efficient handling of big data, enhancing the AI image generation process, particularly when working with high-resolution images or extensive datasets.

In summary, RAM is a critical component for AI image generation, supporting high-speed data access, temporary storage of data, storing model parameters and intermediate results, enabling batch processing, facilitating efficient algorithm execution, optimizing training and inference, supporting complex model architectures, and accommodating large memory configurations. Having an adequate and well-optimized RAM configuration is fundamental to achieving optimal performance in AI image generation tasks.

_____

Storage

Fast and ample storage is crucial for storing large datasets and model parameters, ensuring quick access and retrieval.

NVMe SSDs (Solid State Drives): These provide high-speed storage, significantly reducing data access times and enhancing the speed of data retrieval, which is essential for AI image generation projects.

Data Storage and Retrieval:

Storage is fundamental for storing the vast amounts of data required for AI image generation. This includes training data, validation sets, pre-trained models, and generated images. Efficient storage systems ensure quick and reliable access to this data, which is critical for training and refining AI models.

Model Parameters and Checkpoints:

During the training phase, AI models continuously update their parameters. Storage plays a vital role in storing these parameters and checkpoints, allowing for model retraining, fine-tuning, and ensuring that progress is not lost in the event of interruptions or failures.
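
One common way to make sure an interruption never leaves a half-written checkpoint is to write to a temporary file and then rename it over the old one. The sketch below illustrates that pattern with the standard library only; the JSON state and the function names are assumptions for the example, and real frameworks provide their own save and load routines.

```python
import json
import os
import tempfile


def save_checkpoint(state: dict, path: str) -> None:
    """Write state to path atomically: temp file first, then rename."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as f:
            json.dump(state, f)
            f.flush()
            os.fsync(f.fileno())       # push the bytes to the storage device
        os.replace(tmp_path, path)     # atomic swap on the same filesystem
    except BaseException:
        os.remove(tmp_path)            # do not leave a partial file behind
        raise


def load_checkpoint(path: str, default: dict) -> dict:
    """Return the saved state, or default if no checkpoint exists yet."""
    if not os.path.exists(path):
        return default
    with open(path) as f:
        return json.load(f)


ckpt_dir = tempfile.mkdtemp()
ckpt_path = os.path.join(ckpt_dir, "ckpt.json")
state = load_checkpoint(ckpt_path, {"step": 0})   # fresh start
state["step"] += 100                              # pretend some training happened
save_checkpoint(state, ckpt_path)
resumed = load_checkpoint(ckpt_path, {"step": 0}) # what a restart would see
print(resumed)
```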

Large-Scale Datasets and High-Resolution Images:

AI image generation often involves working with large-scale datasets and high-resolution images. Adequate storage capacity is necessary to accommodate these large files, ensuring that all the data needed for training and generation is accessible and organized efficiently.

Redundancy and Data Protection:

Storage solutions often incorporate redundancy and backup mechanisms to protect against data loss. This is crucial for preserving valuable training data, model parameters, and generated images, ensuring the integrity of the AI image generation process.

Sequential Access and Data Streaming:

Certain AI image generation tasks may require sequential access to a vast amount of data, especially when processing videos or image sequences. Storage systems optimized for sequential access and data streaming are essential for achieving optimal performance in these scenarios.

Efficient Input and Output Operations:

The speed and efficiency of reading and writing data from and to storage significantly impact AI image generation performance. High-speed storage, such as NVMe SSDs, facilitates faster input/output operations, leading to shorter model load times and smoother image generation.
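
A quick way to get a feel for a drive's sequential read speed is to time how long a large file takes to read. The following is a minimal sketch of such a measurement, not a rigorous benchmark: the operating system's file cache can inflate the result, and the small throwaway file used here is an assumption made to keep the example fast.

```python
import os
import tempfile
import time


def measure_read_throughput(path: str, chunk_size: int = 1 << 20) -> float:
    """Read a file sequentially and return the observed rate in MB/s."""
    total = 0
    start = time.perf_counter()
    with open(path, "rb") as f:
        while chunk := f.read(chunk_size):
            total += len(chunk)
    elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero interval
    return total / elapsed / 1e6


# Demo on a throwaway 4 MiB file; a meaningful test would use a multi-gigabyte file.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(os.urandom(4 * 1024 * 1024))
rate = measure_read_throughput(tmp.name)
os.remove(tmp.name)
print(f"{rate:.0f} MB/s")
```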

Scalability for Growing Data Volumes:

As AI image generation projects evolve, the amount of data generated and used for training also increases. Storage solutions should be scalable, allowing for seamless expansion to accommodate the growing data volumes associated with more extensive datasets and improved AI models.

Data Preprocessing and Caching:

Storage is used to store preprocessed data and cache intermediate results, optimizing the image generation process. This allows for faster access to preprocessed data, reducing computational load and enhancing the efficiency of AI algorithms.
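
The caching idea above can be sketched as a small on-disk cache keyed by a hash of the input, so that an expensive pre-processing step runs only once per input. The `cached` helper and the stand-in pre-processing function are hypothetical names introduced for this example.

```python
import hashlib
import os
import pickle
import tempfile


def cached(cache_dir: str, key_data: bytes, compute):
    """Return the stored result for key_data, computing and saving it on a miss."""
    os.makedirs(cache_dir, exist_ok=True)
    key = hashlib.sha256(key_data).hexdigest()
    path = os.path.join(cache_dir, key + ".pkl")
    if os.path.exists(path):           # cache hit: skip the computation
        with open(path, "rb") as f:
            return pickle.load(f)
    result = compute()                 # cache miss: do the work once
    with open(path, "wb") as f:
        pickle.dump(result, f)
    return result


calls = []


def expensive_preprocess():
    """Stand-in for a slow step; records each time it actually runs."""
    calls.append(1)
    return [v / 255 for v in (0, 128, 255)]


cache_dir = tempfile.mkdtemp()
first = cached(cache_dir, b"image-0001", expensive_preprocess)
second = cached(cache_dir, b"image-0001", expensive_preprocess)
print(first == second, "computed", len(calls), "time(s)")
```

Only load pickle files from a cache directory you control, since unpickling untrusted data is unsafe.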

In summary, storage is a critical component in AI image generation, supporting data storage and retrieval, model parameters and checkpoints, large-scale datasets, redundancy and data protection, sequential access and data streaming, efficient input/output operations, scalability for growing data volumes, and data preprocessing and caching. A well-designed storage infrastructure is essential for optimizing AI image generation, ensuring data accessibility, reliability, and performance throughout the image generation process.

_____

Dedicated AI Accelerators

Specialized hardware accelerators designed for AI workloads can significantly boost image generation performance.

Google Cloud Tensor Processing Units (TPUs): TPUs are optimized for AI tasks and deliver exceptional performance for neural network inference and training.

NVIDIA Deep Learning Accelerators (DLA): These are fixed-function inference engines built into NVIDIA's Jetson and DRIVE system-on-chip platforms. They accelerate AI inference workloads by offloading supported computations from the GPU.

Specialized Hardware for AI Workloads:

Dedicated AI accelerators are purpose-built hardware designed to accelerate AI workloads, including image generation. They offer optimized architectures and components tailored to efficiently perform the computations required in AI tasks.

Tensor Processing Units (TPUs):

TPUs are a prominent example of dedicated AI accelerators. They are designed to accelerate tensor-based operations, which are fundamental to neural network computations. TPUs excel in speeding up matrix multiplications and convolutions, key operations in deep learning models used for AI image generation.

Efficient Inference Processing:

Dedicated AI accelerators are highly efficient in executing inference tasks, allowing for real-time or near-real-time processing of AI-generated images. Their specialized design ensures low-latency processing, making them ideal for applications requiring quick image generation and diffusion.

Model Parallelism and Scalability:

AI accelerators can handle model parallelism efficiently, enabling the division of a large model into smaller parts for simultaneous processing. This scalability ensures that even very large AI image generation models can be effectively processed without compromising performance.
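
One simple form of model parallelism assigns contiguous groups of layers to different devices. The sketch below shows only the partitioning step, under the assumption that all layers cost about the same; the layer names and device count are made up for illustration, and real systems also have to move activations between devices.

```python
def partition_layers(layers, num_devices):
    """Split layers into num_devices contiguous stages of near-equal length."""
    base, extra = divmod(len(layers), num_devices)
    stages, start = [], 0
    for device in range(num_devices):
        size = base + (1 if device < extra else 0)  # earlier stages absorb the remainder
        stages.append(layers[start:start + size])
        start += size
    return stages


layers = [f"block_{i}" for i in range(10)]  # hypothetical 10-layer model
for device, stage in enumerate(partition_layers(layers, 4)):
    print(f"device {device}: {stage}")
```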

Reduced Power Consumption and Heat Generation:

Dedicated AI accelerators are often more power-efficient than general-purpose processors such as CPUs or GPUs. They are designed to perform AI computations with reduced power consumption and heat generation, making them suitable for deployment in power-sensitive environments.

Optimized for AI Frameworks and Libraries:

AI accelerators are optimized to work seamlessly with popular AI frameworks and libraries such as TensorFlow, PyTorch, and others. This integration ensures that AI image generation models can fully leverage the capabilities of the accelerator for improved performance and efficiency.

Customized Hardware Architectures:

These accelerators often feature customized hardware architectures, such as systolic arrays, which are specifically designed to accelerate matrix operations prevalent in AI computations. This specialized design further enhances their efficiency and performance for image generation tasks.
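
To make the systolic array idea more concrete, here is a toy software simulation of an output-stationary array computing a matrix product. It is a sketch of the general concept under simplifying assumptions, not a model of any vendor's hardware: each processing element (i, j) keeps one running sum, and operands reach it with a delay of i + j cycles as they ripple through the grid.

```python
def systolic_matmul(a, b):
    """Simulate an m x n grid of accumulators computing a @ b cycle by cycle."""
    m, k, n = len(a), len(b), len(b[0])
    acc = [[0] * n for _ in range(m)]
    cycles = m + n + k - 2             # the last operand pair arrives at cycle m + n + k - 3
    for t in range(cycles):
        for i in range(m):
            for j in range(n):
                step = t - i - j       # which operand pair reaches PE (i, j) this cycle
                if 0 <= step < k:
                    acc[i][j] += a[i][step] * b[step][j]
    return acc, cycles


result, cycles = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(result, "in", cycles, "cycles")
```

In hardware, all processing elements update in the same clock cycle, so the two inner loops here correspond to work done simultaneously.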

Cloud-Based AI Accelerator Services:

Cloud service providers offer AI accelerator services, allowing users to access and utilize these accelerators remotely. This provides the advantage of scalability and cost-effectiveness, as users can leverage the power of dedicated AI accelerators without the need for physical hardware.

In summary, dedicated AI accelerators play a pivotal role in AI image generation by offering specialized hardware optimized for AI workloads, efficient inference processing, model parallelism, reduced power consumption, compatibility with popular AI frameworks, customized hardware architectures, and cloud-based services. Leveraging these accelerators significantly enhances the efficiency and performance of AI image generation processes, enabling the creation of high-quality images in a timely and resource-efficient manner.

_____

Conclusion

Selecting the right hardware for AI image generation and Stable Diffusion is crucial to achieving high-quality results efficiently. GPUs, CPUs, adequate memory, fast storage, and dedicated AI accelerators are all integral components that contribute to the success of any AI image generation project. Consider your specific requirements and budget to choose the hardware that best aligns with your needs, enabling you to create stunning AI-generated images effectively and reliably.
