3D CoCa: Contrastive Learners are 3D Captioners

Ting Huang, Zeyu Zhang, Yemin Wang, Hao Tang

2025-04-15

Summary

This paper talks about 3D CoCa, a new AI framework that can look at 3D scenes and describe them in natural language. It combines two main ideas: teaching the AI to match 3D shapes with words (contrastive learning) and generating detailed captions for what it sees in 3D environments.

What's the problem?

The problem is that describing 3D scenes is much harder than describing regular 2D images because 3D data is often sparse and not well matched with language. Most older methods either miss important details or need extra steps, like using separate object detectors, which makes the process less accurate and more complicated.

What's the solution?

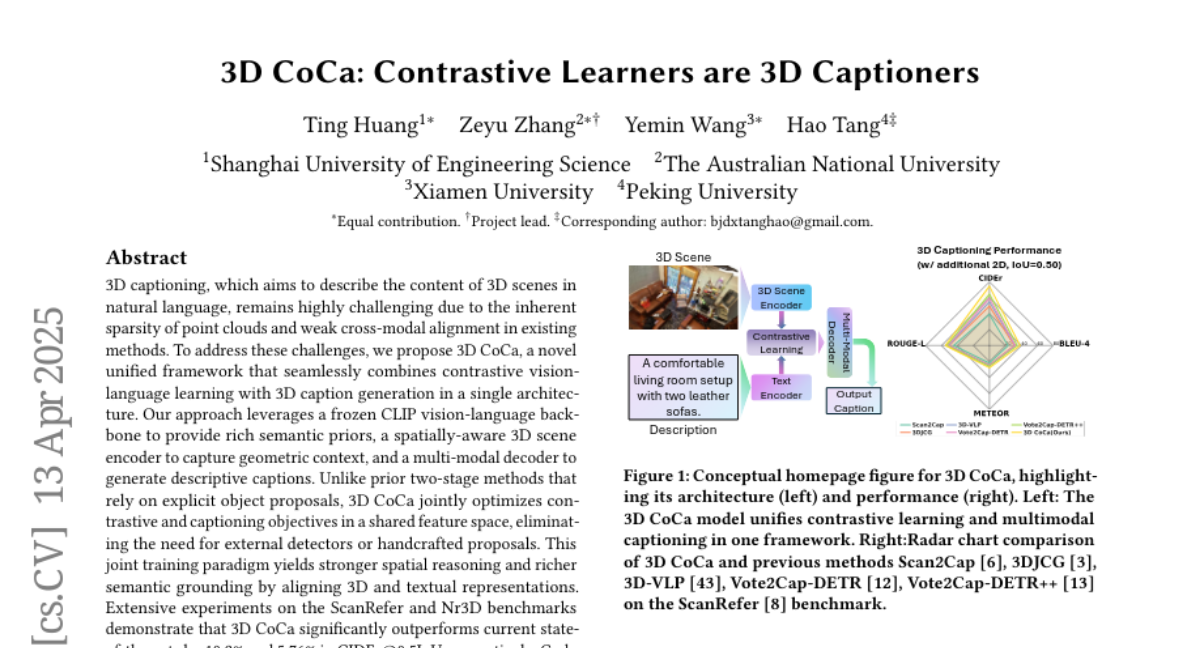

The researchers created 3D CoCa by training a model that learns to connect 3D point clouds and text at the same time, using a shared learning goal. They use a strong vision-language backbone (CLIP) to bring in knowledge from large amounts of image and text data, and build a special 3D scene encoder that understands the shape and layout of objects. The model then uses a multi-modal decoder to write captions that not only name objects but also describe where they are and how they relate to each other in space. This approach skips the need for separate object detectors and makes the captions more accurate and meaningful.

Why it matters?

This work matters because it makes it possible for AI to better understand and describe complex 3D scenes, which is useful for things like robotics, virtual reality, and helping visually impaired people understand their surroundings. By improving how AI connects 3D shapes with language, 3D CoCa sets a new standard for both research and real-world applications.

Abstract

3D CoCa is a unified framework combining contrastive vision-language learning with 3D caption generation, using a CLIP backbone, spatially-aware 3D scene encoder, and multi-modal decoder for improved spatial reasoning and semantic grounding.