3D Gaussian Editing with A Single Image

Guan Luo, Tian-Xing Xu, Ying-Tian Liu, Xiao-Xiong Fan, Fang-Lue Zhang, Song-Hai Zhang

2024-08-15

Summary

This paper presents a method for editing a captured 3D scene by editing a single 2D image of it, without requiring an accurately reconstructed mesh.

What's the problem?

Editing 3D scenes often requires complex models that accurately represent the scene's geometry, which can be difficult and time-consuming to create. Many existing methods rely on detailed 3D meshes, which limits their usefulness for quick edits or for users who may not have the technical skills to work with complex models.

What's the solution?

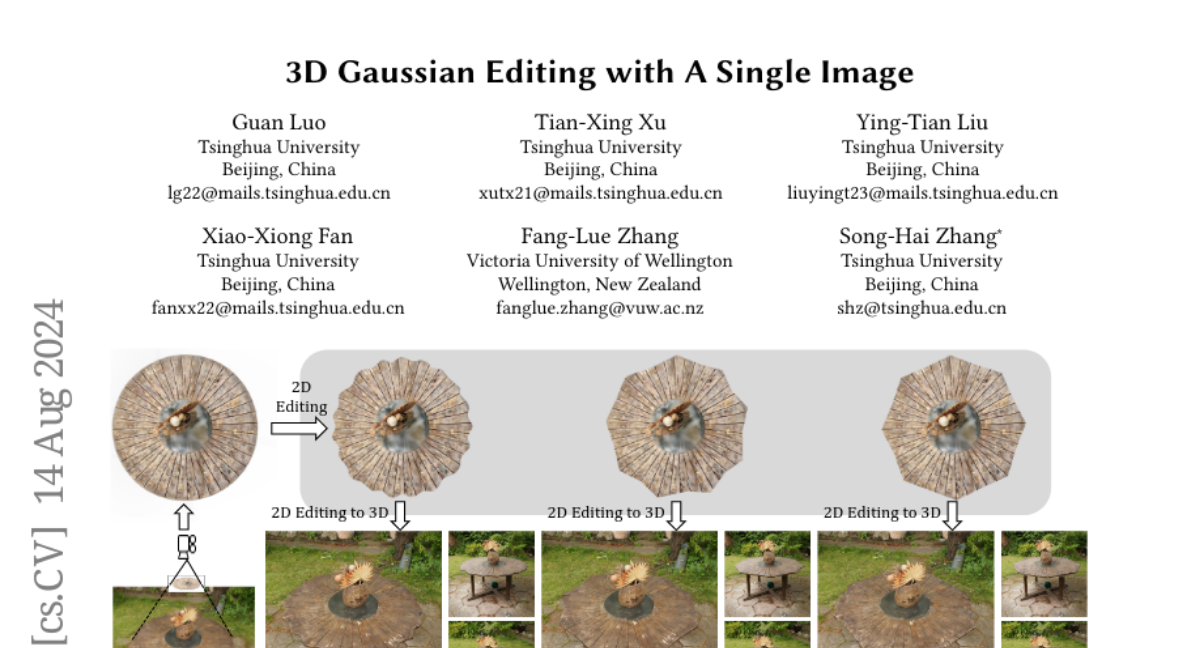

The authors introduce a single-image-driven editing method built on 3D Gaussian Splatting, an existing scene representation. A user edits a 2D image rendered from a viewpoint of their choice, and the method optimizes the scene's 3D Gaussians until the rendering matches the edited image, so no detailed mesh is needed. A positional loss, made differentiable through reparameterization, captures long-range object deformation. An anchor-based structure with coarse-to-fine optimization handles Gaussians that are occluded from the chosen viewpoint while keeping the structure stable, and an adaptive masking strategy identifies non-rigid regions for fine-scale modeling.

Why does it matter?

This research is significant because it simplifies the process of editing 3D scenes, making it accessible to more people, including artists and designers who may not have advanced technical skills. By allowing edits through a single image, it opens up new possibilities for creating and modifying 3D content in various applications, such as video games, virtual reality, and film production.

Abstract

The modeling and manipulation of 3D scenes captured from the real world are pivotal in various applications, attracting growing research interest. While previous works on editing have achieved interesting results through manipulating 3D meshes, they often require accurately reconstructed meshes to perform editing, which limits their application in 3D content generation. To address this gap, we introduce a novel single-image-driven 3D scene editing approach based on 3D Gaussian Splatting, enabling intuitive manipulation via directly editing the content on a 2D image plane. Our method learns to optimize the 3D Gaussians to align with an edited version of the image rendered from a user-specified viewpoint of the original scene. To capture long-range object deformation, we introduce positional loss into the optimization process of 3D Gaussian Splatting and enable gradient propagation through reparameterization. To handle occluded 3D Gaussians when rendering from the specified viewpoint, we build an anchor-based structure and employ a coarse-to-fine optimization strategy capable of handling long-range deformation while maintaining structural stability. Furthermore, we design a novel masking strategy to adaptively identify non-rigid deformation regions for fine-scale modeling. Extensive experiments show the effectiveness of our method in handling geometric details, long-range, and non-rigid deformation, demonstrating superior editing flexibility and quality compared to previous approaches.
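The abstract gives no implementation details, so the following is only a toy illustration of one idea it mentions: letting occluded Gaussians follow an anchor that is fitted with an image-space positional loss. It is not the authors' method. The function name `fit_anchor_offsets`, the orthographic projection, the fixed point-to-anchor assignment, and the single rigid translation per anchor are all assumptions made for this sketch.

```python
import numpy as np

def fit_anchor_offsets(centers, anchor_ids, targets_2d, visible,
                       n_anchors, steps=200, lr=0.5):
    """Fit one 3D translation per anchor with a 2D positional loss (toy sketch)."""
    offsets = np.zeros((n_anchors, 3))
    n_vis = max(int(visible.sum()), 1)
    for _ in range(steps):
        moved = centers + offsets[anchor_ids]
        # Orthographic projection (assumption): keep x, y and ignore depth.
        # Occluded points are masked out, so they contribute no residual.
        residual = (moved[:, :2] - targets_2d) * visible[:, None]
        # Gradient of the mean squared 2D error, summed per anchor.
        grad_xy = np.zeros((n_anchors, 2))
        np.add.at(grad_xy, anchor_ids, 2.0 * residual / n_vis)
        offsets[:, :2] -= lr * grad_xy
    return offsets

# Six Gaussian centers, three per anchor; the last point of each group is occluded.
centers = np.array([[0.0, 0.0, 1.0], [1.0, 0.0, 1.0], [0.5, 0.5, 2.0],
                    [3.0, 0.0, 1.0], [4.0, 0.0, 1.0], [3.5, 0.5, 2.0]])
anchor_ids = np.array([0, 0, 0, 1, 1, 1])
visible = np.array([True, True, False, True, True, False])

# Edited image: the first object moves by (1.0, 0.5); the second stays put.
targets_2d = centers[:, :2].copy()
targets_2d[anchor_ids == 0] += np.array([1.0, 0.5])
targets_2d[~visible] = 0.0  # no 2D target exists for occluded points; value is ignored

offsets = fit_anchor_offsets(centers, anchor_ids, targets_2d, visible, n_anchors=2)
edited_centers = centers + offsets[anchor_ids]
```

In this sketch the occluded point of the first group has no 2D target, yet it moves with the visible points because all three share one anchor offset. A real system would also need a perspective camera, per-Gaussian refinement after the coarse anchor stage, and a photometric term, none of which are modeled here.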