3DV-TON: Textured 3D-Guided Consistent Video Try-on via Diffusion Models

Min Wei, Chaohui Yu, Jingkai Zhou, Fan Wang

2025-04-25

Summary

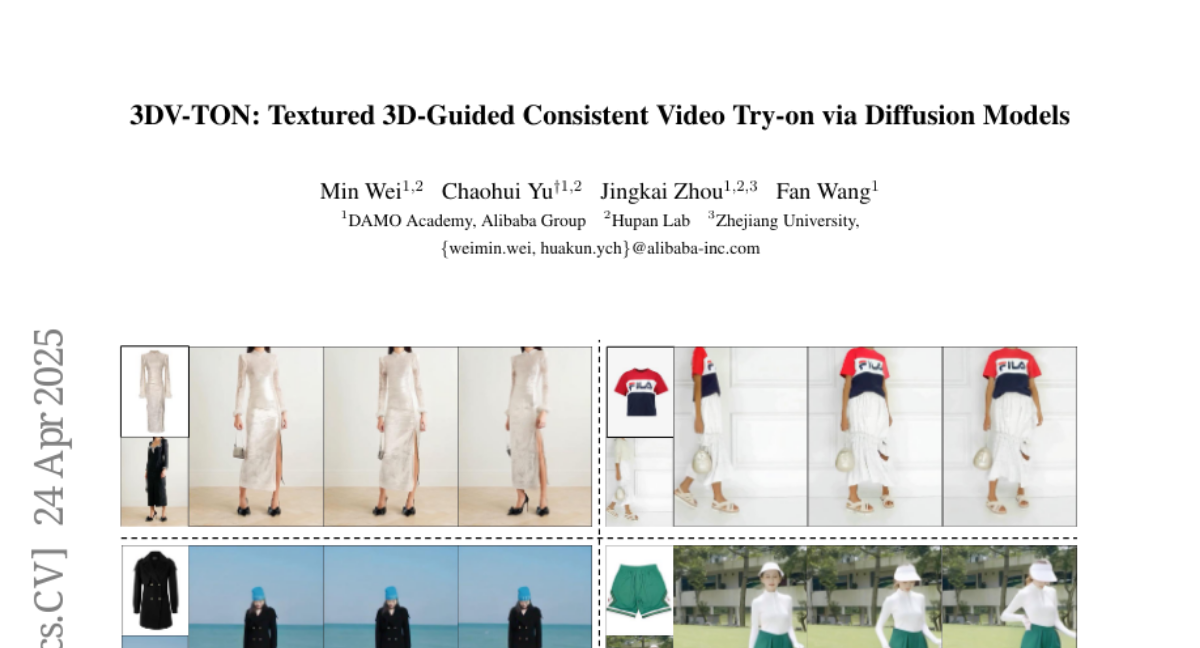

This paper presents 3DV-TON, a new AI system for video virtual try-on. It shows what clothes would look like on a moving person in a video, keeping the fit and motion of the clothes realistic from every angle.

What's the problem?

The problem is that most virtual try-on tools either work only on a single photo or struggle to keep the outfit looking natural and consistent when the person moves around in a video. This makes it hard to judge how the clothes would actually look when worn.

What's the solution?

The researchers combined diffusion models, a class of generative AI models, with detailed, textured 3D meshes of people and clothes. These animatable meshes guide the model at every frame of the video, so the clothes stay in place and keep a consistent appearance as the person moves, resulting in smooth and realistic video try-ons.

Why does it matter?

This matters because it could make online shopping and fashion previews more realistic and helpful by letting people see how clothes would look on them in motion before buying, which may improve confidence in purchases and reduce returns.

Abstract

A diffusion-based framework generates high-fidelity and temporally consistent video try-on by using animatable textured 3D meshes as frame-level guidance.
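
To make "frame-level guidance" concrete, here is a minimal toy sketch in Python. It is not the authors' implementation. It assumes that each video frame has a matching guidance image rendered from the textured 3D mesh, and it uses channel-wise concatenation as the conditioning mechanism, which is one common choice and not something this summary confirms for 3DV-TON. The function name, array shapes, and channel counts are all hypothetical.

```python
import numpy as np


def build_frame_conditioned_input(noisy_frames, guidance_frames):
    """Pair every noisy video frame with its own guidance frame.

    Both arrays are laid out as (frames, height, width, channels).
    Concatenating along the channel axis is an assumed conditioning
    scheme used only for illustration.
    """
    if noisy_frames.shape[0] != guidance_frames.shape[0]:
        raise ValueError("need exactly one guidance frame per video frame")
    if noisy_frames.shape[1:3] != guidance_frames.shape[1:3]:
        raise ValueError("guidance must match the frame resolution")
    return np.concatenate([noisy_frames, guidance_frames], axis=-1)


rng = np.random.default_rng(0)
num_frames, height, width = 8, 64, 48

# Hypothetical noisy frames that a diffusion model would denoise (4 channels).
noisy_frames = rng.standard_normal((num_frames, height, width, 4))

# Stand-in for images rendered from the animated textured mesh (3 channels).
guidance_frames = rng.random((num_frames, height, width, 3))

model_input = build_frame_conditioned_input(noisy_frames, guidance_frames)
print(model_input.shape)
```

The point of the sketch is only that guidance is supplied separately for each frame, so the denoiser sees a garment reference aligned with the person's pose in that frame instead of a single static garment image.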