4D-Bench: Benchmarking Multi-modal Large Language Models for 4D Object Understanding

Wenxuan Zhu, Bing Li, Cheng Zheng, Jinjie Mai, Jun Chen, Letian Jiang, Abdullah Hamdi, Sara Rojas Martinez, Chia-Wen Lin, Mohamed Elhoseiny, Bernard Ghanem

2025-03-31

Summary

This paper introduces a new way to test how well AI can understand 4D objects, which are 3D objects that change over time.

What's the problem?

Current AI models are good at understanding 2D images and videos, but there's no standard way to check if they can understand 4D objects.

What's the solution?

The researchers created a new benchmark called 4D-Bench that includes tasks like answering questions about 4D objects and describing them in words.

Why it matters?

This work matters because it helps us understand the limitations of current AI models and guides future research in 4D object understanding.

Abstract

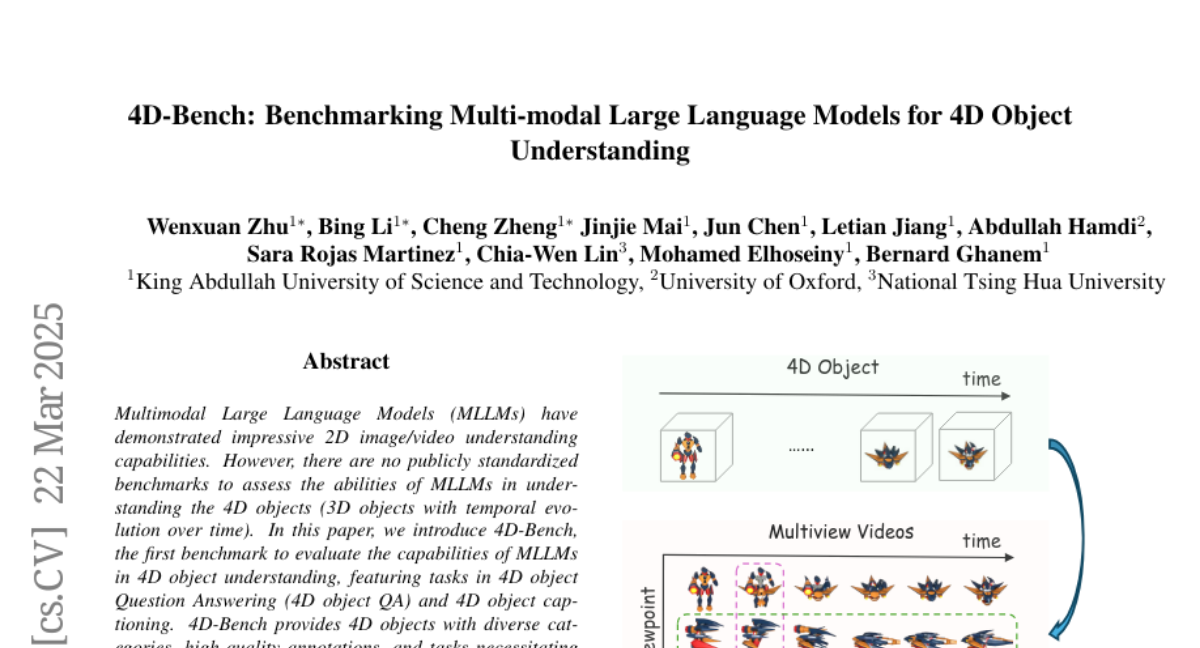

Multimodal Large Language Models (MLLMs) have demonstrated impressive 2D image/video understanding capabilities. However, there are no publicly standardized benchmarks to assess the abilities of MLLMs in understanding the 4D objects (3D objects with temporal evolution over time). In this paper, we introduce 4D-Bench, the first benchmark to evaluate the capabilities of MLLMs in 4D object understanding, featuring tasks in 4D object Question Answering (4D object QA) and 4D object captioning. 4D-Bench provides 4D objects with diverse categories, high-quality annotations, and tasks necessitating multi-view spatial-temporal understanding, different from existing 2D image/video-based benchmarks. With 4D-Bench, we evaluate a wide range of open-source and closed-source MLLMs. The results from the 4D object captioning experiment indicate that MLLMs generally exhibit weaker temporal understanding compared to their appearance understanding, notably, while open-source models approach closed-source performance in appearance understanding, they show larger performance gaps in temporal understanding. 4D object QA yields surprising findings: even with simple single-object videos, MLLMs perform poorly, with state-of-the-art GPT-4o achieving only 63\% accuracy compared to the human baseline of 91\%. These findings highlight a substantial gap in 4D object understanding and the need for further advancements in MLLMs.