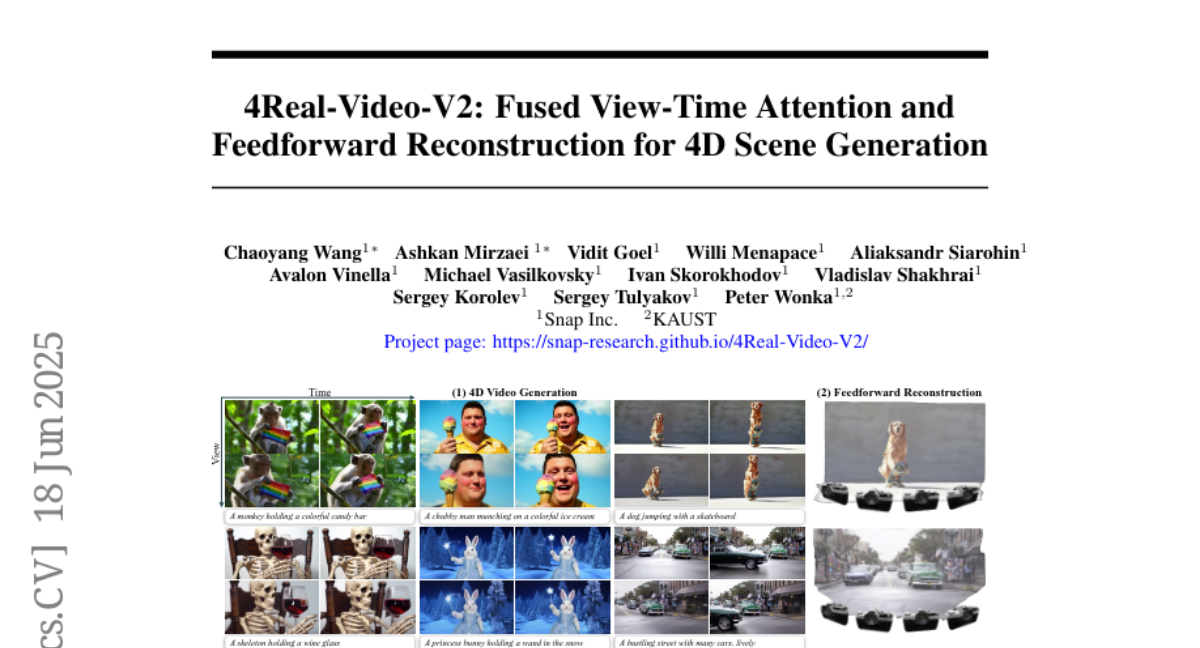

4Real-Video-V2: Fused View-Time Attention and Feedforward Reconstruction for 4D Scene Generation

Chaoyang Wang, Ashkan Mirzaei, Vidit Goel, Willi Menapace, Aliaksandr Siarohin, Avalon Vinella, Michael Vasilkovsky, Ivan Skorokhodov, Vladislav Shakhrai, Sergey Korolev, Sergey Tulyakov, Peter Wonka

2025-06-24

Summary

This paper talks about 4Real-Video-V2, a framework that creates detailed 4D scenes by combining video modeling and 3D reconstruction in one system using special attention techniques.

What's the problem?

The problem is that generating high-quality 4D videos, which include time and 3D space, is hard because previous methods struggled to balance good visuals with accurate 3D shapes.

What's the solution?

The researchers designed a unified architecture that uses fused view-time attention to efficiently focus on important parts of the scene over time and space, along with a feedforward reconstruction method to create better 3D shapes and video quality.

Why it matters?

This matters because it helps produce more realistic and accurate 4D videos, improving applications in virtual reality, movies, and simulations where seeing moving 3D scenes clearly is important.

Abstract

A new framework combines 4D video modeling and 3D reconstruction using a unified architecture with sparse attention patterns, achieving superior visual quality and reconstruction.