A Multi-Dimensional Constraint Framework for Evaluating and Improving Instruction Following in Large Language Models

Junjie Ye, Caishuang Huang, Zhuohan Chen, Wenjie Fu, Chenyuan Yang, Leyi Yang, Yilong Wu, Peng Wang, Meng Zhou, Xiaolong Yang, Tao Gui, Qi Zhang, Zhongchao Shi, Jianping Fan, Xuanjing Huang

2025-05-14

Summary

This paper presents a framework for testing and improving how well large language models follow different types of instructions. It generates test cases automatically and uses code to check the models' answers.

What's the problem?

The problem is that language models don't always follow instructions perfectly, especially when the instructions are complicated or have multiple parts. It's also hard to measure exactly where they mess up and how to help them get better.

What's the solution?

The researchers built an automated pipeline that generates many different instruction-following challenges and uses code to check whether the AI's answers satisfy them. Testing models this way showed that performance varies considerably from one type of constraint to another. The researchers then used reinforcement learning on the generated data to train models to follow instructions more accurately across a wider range of situations.
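To make "using code to check answers" concrete, here is a minimal Python sketch of code-verifiable constraints. It is an illustration only, not the authors' pipeline: the checker names (`max_words`, `contains_keyword`, `is_valid_json`), the `verify` and `reward` helpers, and the choice of reward (the fraction of constraints satisfied) are all assumptions made for this example.

```python
import json
from typing import Callable, Dict

# Hypothetical constraint checkers (not from the paper).
# Each one takes a model response and returns True when it complies.
def max_words(limit: int) -> Callable[[str], bool]:
    return lambda response: len(response.split()) <= limit

def contains_keyword(keyword: str) -> Callable[[str], bool]:
    return lambda response: keyword.lower() in response.lower()

def is_valid_json(response: str) -> bool:
    try:
        json.loads(response)
        return True
    except json.JSONDecodeError:
        return False

def verify(response: str, checks: Dict[str, Callable[[str], bool]]) -> Dict[str, bool]:
    """Run every named check and record which constraints were met."""
    return {name: check(response) for name, check in checks.items()}

def reward(results: Dict[str, bool]) -> float:
    """Assumed reward signal: the fraction of constraints satisfied."""
    return sum(results.values()) / len(results) if results else 0.0

# One instruction carrying three constraints at once.
checks = {
    "max_10_words": max_words(10),
    "mentions_python": contains_keyword("python"),
    "valid_json": is_valid_json,
}

results = verify('{"answer": "Use Python"}', checks)
print(results, reward(results))
```

Because each constraint is checked separately, the per-constraint results show where a model fails, while a single score like `reward` is the kind of automatic signal a reinforcement learning setup could optimize.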

Why does it matter?

This matters because it helps make language models more reliable and trustworthy when they're asked to do complicated tasks, which is important for using AI in education, business, and everyday life.

Abstract

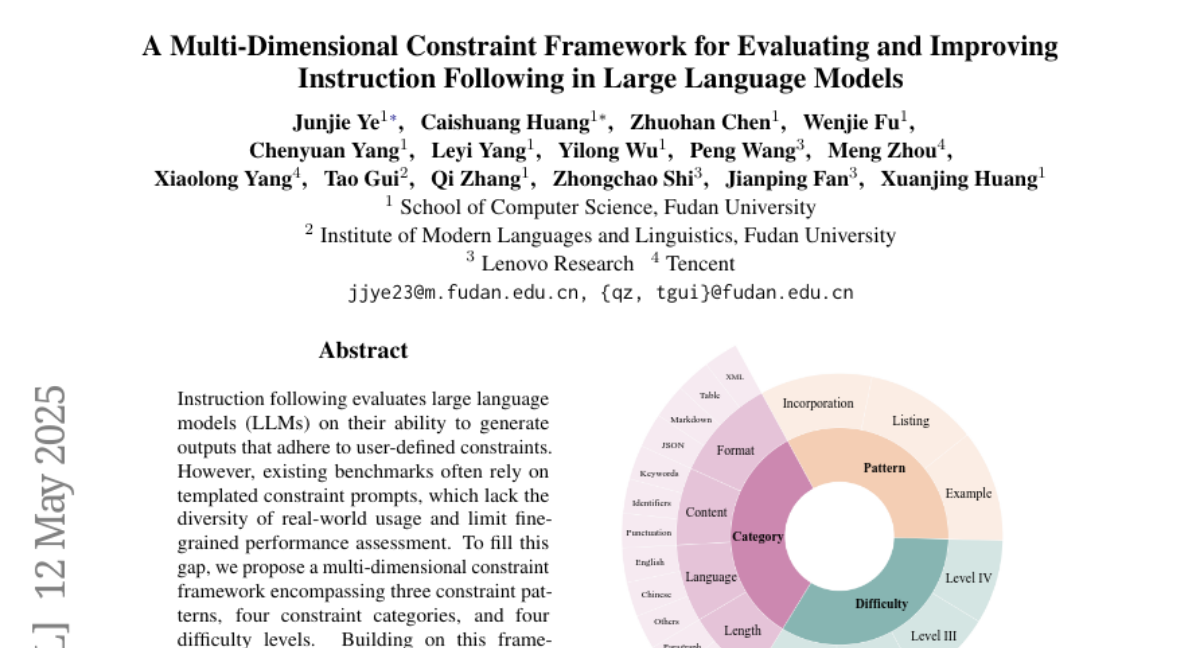

An automated pipeline generates diverse, code-verifiable instruction-following test samples, revealing performance variation across constraints in large language models and enhancing instruction following via reinforcement learning.