A Strategic Coordination Framework of Small LLMs Matches Large LLMs in Data Synthesis

Xin Gao, Qizhi Pei, Zinan Tang, Yu Li, Honglin Lin, Jiang Wu, Conghui He, Lijun Wu

2025-04-18

Summary

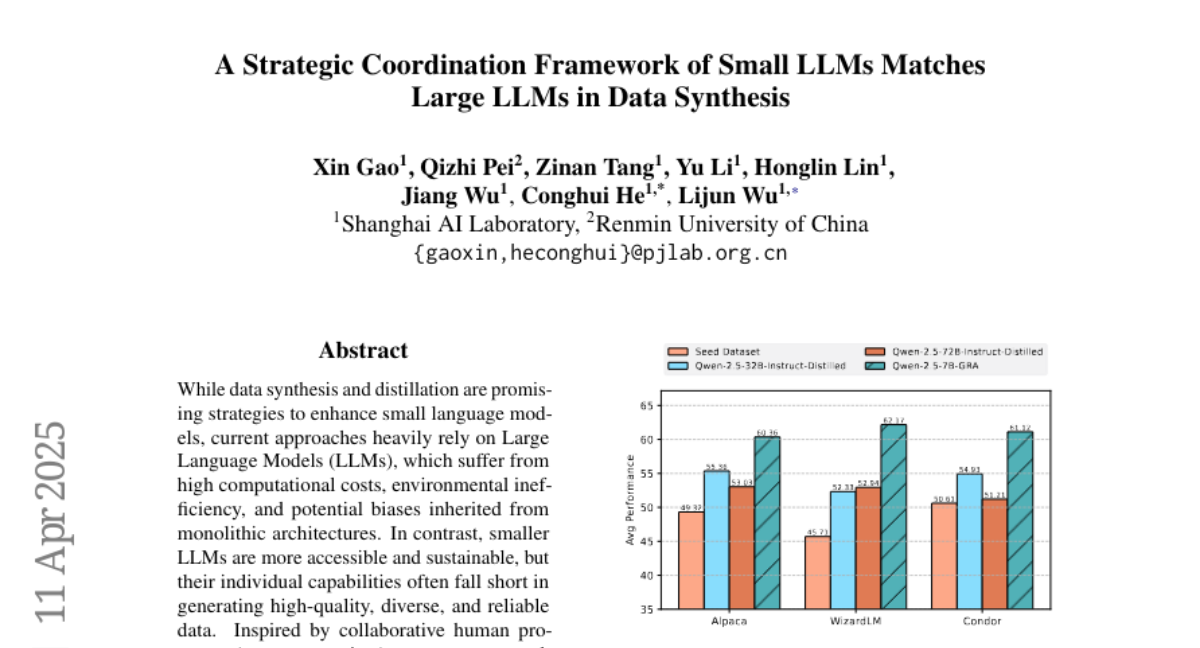

This paper presents a framework in which several small language models coordinate their work to synthesize high-quality data, reaching results comparable to those of much larger and more expensive models.

What's the problem?

The problem is that large language models are very good at generating new data, but they require a lot of computing power and are expensive to use. Smaller models are cheaper and easier to deploy, but on their own they usually cannot match the quality of the large ones.

What's the solution?

The researchers designed a framework in which multiple small models collaborate and check each other's work, much like a peer-review process. By coordinating their efforts and sharing feedback, the small models produce data that is as accurate and useful as data generated by large models.
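The summary does not spell out the coordination protocol, so the following is only a minimal, hypothetical sketch of what a peer-review-style filter could look like. It assumes one small model generates a candidate sample, several small models score it, and a separate model makes the final call when the reviewers disagree strongly. The role names, the 0 to 10 scoring scale, and the `accept_threshold` and `max_disagreement` parameters are illustrative assumptions, not the authors' method; the stub callables stand in for real model calls.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, List

# Hypothetical interfaces: each "model" is just a callable in this sketch.
Generator = Callable[[str], str]                       # prompt -> candidate sample
Reviewer = Callable[[str, str], float]                 # (prompt, sample) -> score in [0, 10]
Adjudicator = Callable[[str, str, List[float]], bool]  # final accept/reject decision


@dataclass
class Decision:
    sample: str
    scores: List[float]
    accepted: bool
    adjudicated: bool


def peer_review_round(
    prompt: str,
    generator: Generator,
    reviewers: List[Reviewer],
    adjudicator: Adjudicator,
    accept_threshold: float = 7.0,
    max_disagreement: float = 3.0,
) -> Decision:
    """Generate one sample and decide whether to keep it (illustrative only)."""
    sample = generator(prompt)
    scores = [review(prompt, sample) for review in reviewers]
    if max(scores) - min(scores) > max_disagreement:
        # Reviewers disagree strongly: defer to a separate adjudicating model.
        return Decision(sample, scores, adjudicator(prompt, sample, scores), True)
    # Reviewers broadly agree: keep the sample if the average score is high enough.
    return Decision(sample, scores, mean(scores) >= accept_threshold, False)


def synthesize(
    prompts: List[str],
    generator: Generator,
    reviewers: List[Reviewer],
    adjudicator: Adjudicator,
    **kwargs: float,
) -> List[str]:
    """Run one review round per prompt and return only the accepted samples."""
    kept = []
    for prompt in prompts:
        decision = peer_review_round(prompt, generator, reviewers, adjudicator, **kwargs)
        if decision.accepted:
            kept.append(decision.sample)
    return kept


if __name__ == "__main__":
    # Deterministic stubs in place of real small models.
    gen = lambda p: p.upper()
    rev_a = lambda p, s: 8.0 if "GOOD" in s else 3.0
    rev_b = lambda p, s: 9.0 if "GOOD" in s else 7.0
    adj = lambda p, s, scores: "KEEP" in s
    print(synthesize(["good sample", "weak sample", "weak but keep"], gen, [rev_a, rev_b], adj))
    # prints ['GOOD SAMPLE', 'WEAK BUT KEEP']
```

Routing only strong disagreements to the adjudicator keeps the extra model call off the common path; whether the actual framework uses averaging, a disagreement check, or something else entirely is not stated in this summary.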

Why does it matter?

This matters because it means people can obtain high-quality synthetic data without very expensive hardware or large models. That makes advanced AI tools more affordable and accessible to a wider range of users, from students to small businesses.

Abstract

A collaborative framework using multiple small language models achieves data synthesis quality comparable to that of large language models through a peer-review-inspired process.