AbGen: Evaluating Large Language Models in Ablation Study Design and Evaluation for Scientific Research

Yilun Zhao, Weiyuan Chen, Zhijian Xu, Manasi Patwardhan, Yixin Liu, Chengye Wang, Lovekesh Vig, Arman Cohan

2025-07-18

Summary

This paper talks about AbGen, a project that tests how well large language models (LLMs) can design and evaluate ablation studies, which are experiments where parts of a system are removed to see how important they are.

What's the problem?

The problem is that current large language models don't perform as well as human experts in designing these ablation studies for scientific research, and the automated methods used to check their work are often unreliable.

What's the solution?

The authors evaluated the abilities of LLMs in creating and assessing ablation studies, finding the gaps between AI and human performance and pointing out that current automated evaluations can't fully trust the LLMs' outputs.

Why it matters?

This matters because improving AI's ability to assist in scientific research experiments can speed up discovery and make research more efficient, but it also highlights the need to develop better evaluation methods to trust AI-generated results.

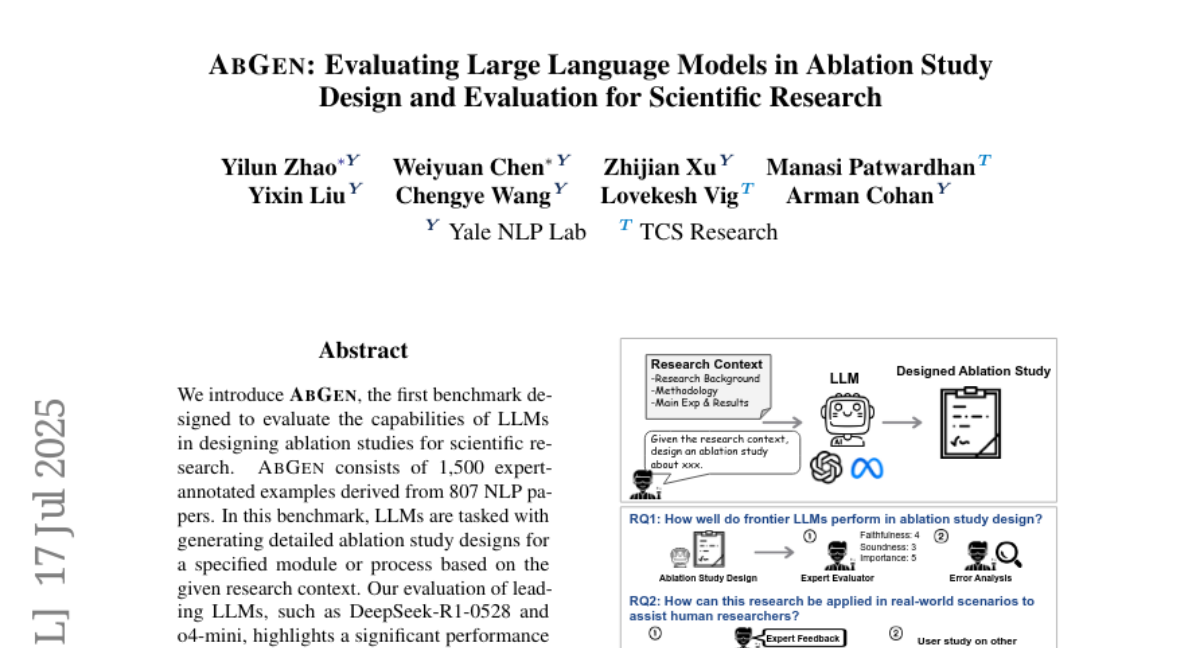

Abstract

AbGen evaluates LLMs in designing ablation studies for scientific research, revealing performance gaps compared to human experts and highlighting the unreliability of current automated evaluation methods.