AdamMeme: Adaptively Probe the Reasoning Capacity of Multimodal Large Language Models on Harmfulness

Zixin Chen, Hongzhan Lin, Kaixin Li, Ziyang Luo, Zhen Ye, Guang Chen, Zhiyong Huang, Jing Ma

2025-07-10

Summary

This paper introduces AdamMeme, an evaluation framework that tests how well multimodal AI models can judge whether memes are harmful. A system of cooperating agents keeps updating the tests with harder memes so that the evaluation stays challenging.

What's the problem?

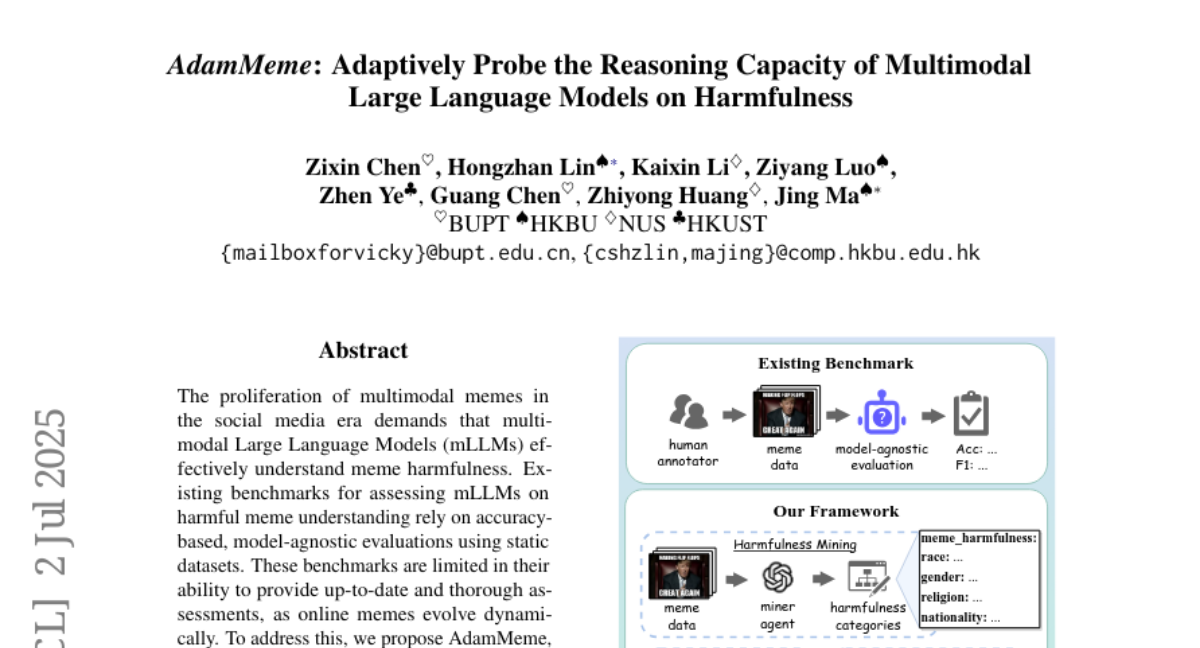

Memes on the internet change quickly and can be tricky to interpret, but existing tests for AI models rely on old, fixed collections of memes. As a result, they don't really check whether the AI can keep up with new or tricky harmful memes.

What's the solution?

The researchers created AdamMeme, in which multiple agents work together to identify types of harmful memes, score how well the AI understands them, and then generate new, harder memes for further testing. This adaptive, collaborative approach helps expose weak spots in AI models' understanding of harmful memes.
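The identify, score, and refine loop described above can be illustrated with a toy sketch. This is not the authors' implementation: the `Meme` class, the `toy_model_score` and `refine` functions, the category names, and the 0.6 threshold are all hypothetical stand-ins, and the scoring rule is a fixed formula rather than a real model or agent.

```python
from dataclasses import dataclass


@dataclass
class Meme:
    text: str
    category: str        # hypothetical harmfulness category label
    difficulty: int = 0  # how many times the sample has been made harder


def toy_model_score(meme: Meme) -> float:
    """Hypothetical stand-in for a scoring agent: quality drops as difficulty rises."""
    return max(0.0, 0.9 - 0.2 * meme.difficulty)


def refine(meme: Meme) -> Meme:
    """Hypothetical stand-in for a refinement agent: emit a harder variant."""
    return Meme(meme.text + " [harder variant]", meme.category, meme.difficulty + 1)


def adaptive_eval(seed_memes, rounds=3, threshold=0.6):
    """Return a per-category count of samples the toy model failed on."""
    pool = list(seed_memes)
    failures = {}
    for _ in range(rounds):
        next_pool = []
        for meme in pool:
            if toy_model_score(meme) < threshold:
                # Weak spot found: record it and stop escalating this sample.
                failures[meme.category] = failures.get(meme.category, 0) + 1
            else:
                # Model coped with this sample, so test a harder variant next round.
                next_pool.append(refine(meme))
        pool = next_pool
    return failures


if __name__ == "__main__":
    seeds = [Meme("meme A", "category_a"), Meme("meme B", "category_b")]
    print(adaptive_eval(seeds))
```

The point of the sketch is the control flow: samples the model handles are made harder and re-tested, while failures are tallied by category to show where the model is weak.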

Why does it matter?

This matters because better testing helps improve AI’s ability to recognize harmful content on social media, making online spaces safer and helping AI keep up with fast-changing internet culture.

Abstract

AdamMeme is an adaptive, agent-based evaluation framework that assesses multimodal Large Language Models' understanding of harmful memes by iteratively updating with challenging samples.