ADS-Edit: A Multimodal Knowledge Editing Dataset for Autonomous Driving Systems

Chenxi Wang, Jizhan Fang, Xiang Chen, Bozhong Tian, Ziwen Xu, Huajun Chen, Ningyu Zhang

2025-03-27

Summary

This paper looks at how to correct and update the AI models used in self-driving cars without retraining the entire model.

What's the problem?

AI models for self-driving cars can misunderstand traffic rules, and they struggle with complex road conditions and the many different states a vehicle can be in.

What's the solution?

The researchers propose using knowledge editing, which makes targeted changes to a model's behavior without full retraining. They also built ADS-Edit, a multimodal dataset of real-world driving scenarios for testing how well such edits work.

Why does it matter?

This work matters because it could make self-driving cars safer and more reliable: their models can be corrected and updated as new situations come up, without the cost of full retraining.

Abstract

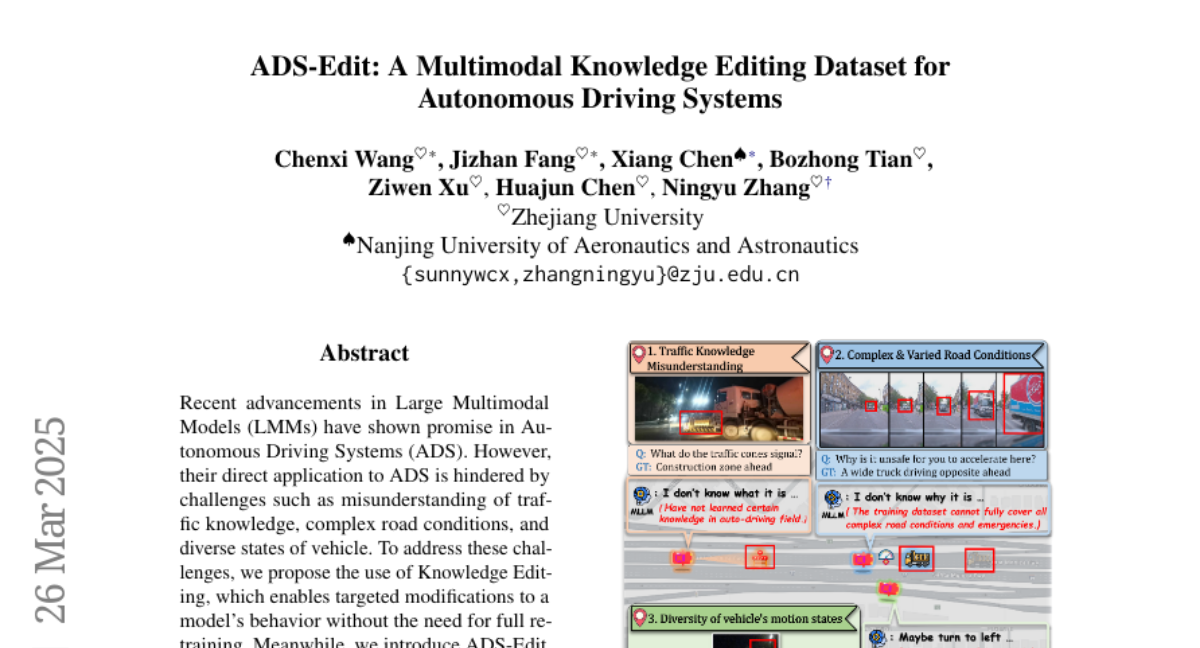

Recent advancements in Large Multimodal Models (LMMs) have shown promise in Autonomous Driving Systems (ADS). However, their direct application to ADS is hindered by challenges such as misunderstanding of traffic knowledge, complex road conditions, and diverse vehicle states. To address these challenges, we propose the use of Knowledge Editing, which enables targeted modifications to a model's behavior without the need for full retraining. Meanwhile, we introduce ADS-Edit, a multimodal knowledge editing dataset specifically designed for ADS, which includes various real-world scenarios, multiple data types, and comprehensive evaluation metrics. We conduct comprehensive experiments and derive several interesting conclusions. We hope that our work will contribute to the further advancement of knowledge editing applications in the field of autonomous driving. Code and data are available at https://github.com/zjunlp/EasyEdit.
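
To make the idea of a targeted modification without full retraining concrete, here is a minimal toy sketch in Python. It is not the paper's method and does not use the EasyEdit API. Every name in it (`base_model`, `EditedModel`, the scene identifiers, the answers) is hypothetical and chosen only for illustration. It assumes the simplest possible scheme: a frozen model wrapped with a lookup table of corrections.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

# Hypothetical stand-in for a multimodal query: (scene identifier, question).
Query = Tuple[str, str]


def base_model(query: Query) -> str:
    """Toy frozen model; its answers stand in for an LMM's pre-edit behavior."""
    _scene, question = query
    if "speed limit" in question:
        return "60 km/h"
    return "unknown"


@dataclass
class EditedModel:
    """Wraps a frozen model with a small store of targeted corrections."""

    model: Callable[[Query], str]
    edits: Dict[Query, str] = field(default_factory=dict)

    def edit(self, query: Query, new_answer: str) -> None:
        # Record the correction; the wrapped model itself is left untouched.
        self.edits[query] = new_answer

    def __call__(self, query: Query) -> str:
        # Use the stored correction if one exists, else defer to the base model.
        if query in self.edits:
            return self.edits[query]
        return self.model(query)


edited = EditedModel(base_model)
target = ("school_zone_01", "What is the speed limit here?")
unrelated = ("highway_07", "What is the speed limit here?")

# Apply one targeted edit for the school-zone scene only.
edited.edit(target, "30 km/h")
```

In this sketch the edited query returns the corrected answer, while the unrelated query still gets the base model's answer. An exact-match lookup like this cannot generalize to rephrased questions or similar scenes, and measuring that kind of behavior is what a benchmark such as ADS-Edit is meant for.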