Advancing Multimodal Reasoning via Reinforcement Learning with Cold Start

Lai Wei, Yuting Li, Kaipeng Zheng, Chen Wang, Yue Wang, Linghe Kong, Lichao Sun, Weiran Huang

2025-05-29

Summary

This paper talks about a new method that helps AI models get better at understanding and reasoning with both text and images by using a mix of supervised learning and reinforcement learning.

What's the problem?

The problem is that large language models often struggle to connect information from different sources, like pictures and words, especially when they haven't been trained from the very beginning on both types of data. This makes it hard for them to perform well on tasks that require using multiple kinds of information together.

What's the solution?

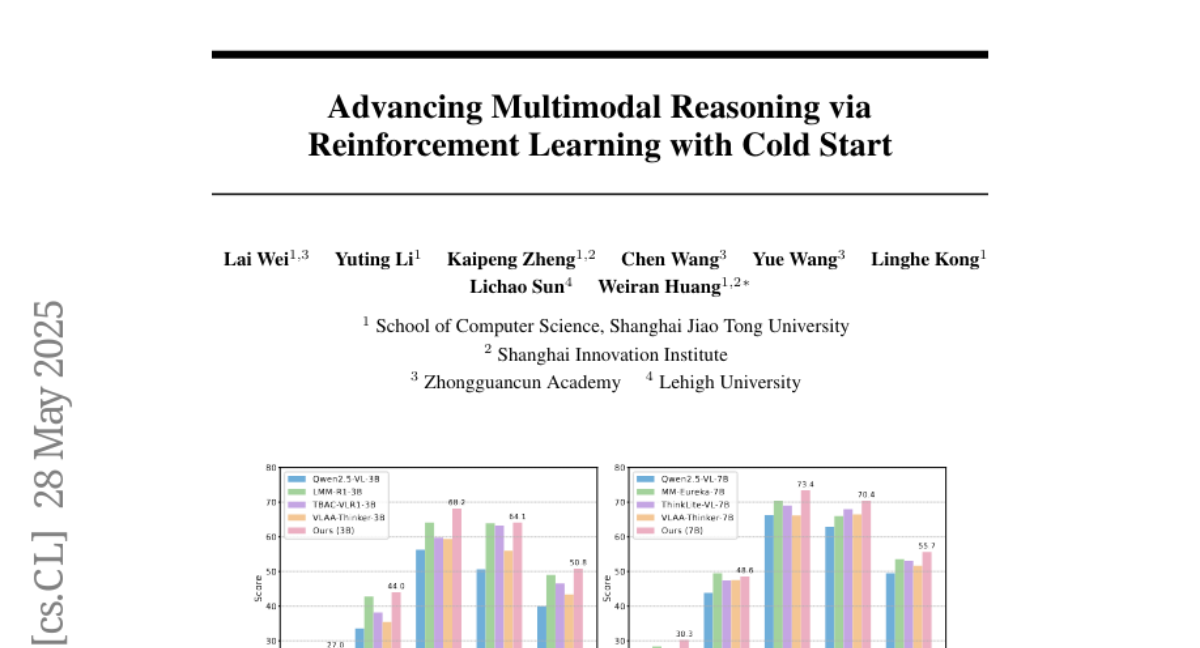

To solve this, the researchers used a two-stage approach. First, they fine-tuned the models using supervised learning, which means training with the right answers provided. Then, they used reinforcement learning, where the model learns by trial and error, to further improve how well the model reasons about both images and text. This combination helps the model become much better at multimodal reasoning, even if it started without much experience in this area.

Why it matters?

This is important because it leads to smarter AI that can handle more complex tasks, like answering questions about pictures or understanding diagrams, which is useful in education, research, and many real-world applications.

Abstract

A two-stage approach combining supervised fine-tuning and reinforcement learning enhances multimodal reasoning in large language models, achieving state-of-the-art performance on benchmarks.