Adversarial Data Collection: Human-Collaborative Perturbations for Efficient and Robust Robotic Imitation Learning

Siyuan Huang, Yue Liao, Siyuan Feng, Shu Jiang, Si Liu, Hongsheng Li, Maoqing Yao, Guanghui Ren

2025-03-17

Summary

This paper introduces Adversarial Data Collection (ADC), a human-in-the-loop way of collecting robot demonstrations in which a second human deliberately perturbs the task while a tele-operator is demonstrating it.

What's the problem?

Training robots to perform tasks often requires a lot of data, which is expensive and time-consuming to collect in the real world. Conventional pipelines passively record static demonstrations, so each demonstration carries relatively little information.

What's the solution?

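ADC puts two humans in the loop during data collection. An adversarial operator intentionally changes object states, environmental conditions, and language commands in the middle of an episode, while the tele-operator controlling the robot adapts to each change. A single demonstration therefore captures failure recovery, task variations, and environmental perturbations, and models trained on this data are more robust while needing fewer demonstrations.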

Why does it matter?

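This work matters because it makes robot data collection more efficient: in the paper's experiments, models trained on only 20% of the demonstration volume collected with ADC outperform traditional approaches trained on full datasets, and they handle unseen instructions and perceptual perturbations better. The authors are also curating a large-scale ADC-Robotics dataset, which they plan to open-source.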

Abstract

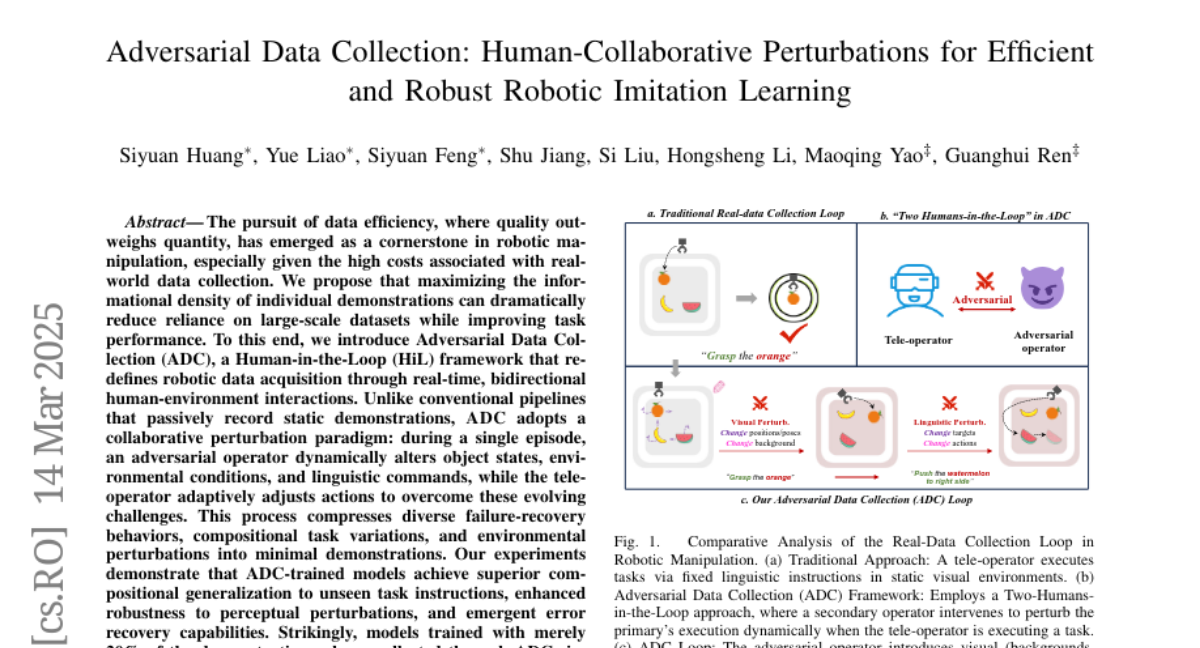

The pursuit of data efficiency, where quality outweighs quantity, has emerged as a cornerstone in robotic manipulation, especially given the high costs associated with real-world data collection. We propose that maximizing the informational density of individual demonstrations can dramatically reduce reliance on large-scale datasets while improving task performance. To this end, we introduce Adversarial Data Collection, a Human-in-the-Loop (HiL) framework that redefines robotic data acquisition through real-time, bidirectional human-environment interactions. Unlike conventional pipelines that passively record static demonstrations, ADC adopts a collaborative perturbation paradigm: during a single episode, an adversarial operator dynamically alters object states, environmental conditions, and linguistic commands, while the tele-operator adaptively adjusts actions to overcome these evolving challenges. This process compresses diverse failure-recovery behaviors, compositional task variations, and environmental perturbations into minimal demonstrations. Our experiments demonstrate that ADC-trained models achieve superior compositional generalization to unseen task instructions, enhanced robustness to perceptual perturbations, and emergent error recovery capabilities. Strikingly, models trained with merely 20% of the demonstration volume collected through ADC significantly outperform traditional approaches using full datasets. These advances bridge the gap between data-centric learning paradigms and practical robotic deployment, demonstrating that strategic data acquisition, not merely post-hoc processing, is critical for scalable, real-world robot learning. Additionally, we are curating a large-scale ADC-Robotics dataset comprising real-world manipulation tasks with adversarial perturbations. This benchmark will be open-sourced to facilitate advancements in robotic imitation learning.
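To make the collection paradigm in the abstract more concrete, here is a minimal Python sketch of how a single ADC-style episode might be logged. It is only an illustration, not the authors' pipeline: the names `Step`, `Episode`, `collect_episode`, and `perturb_prob`, the three perturbation labels, and the placeholder actions "continue" and "recover" are all assumptions made for this example. A seeded random draw stands in for the adversarial operator, and a trivial rule stands in for the tele-operator's response.

```python
import random
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical labels for the three kinds of perturbation named in the abstract.
PERTURBATIONS = ("object_state", "environment", "language_command")


@dataclass
class Step:
    t: int
    instruction: str
    action: str
    perturbation: Optional[str] = None  # set when the adversary intervened at this step


@dataclass
class Episode:
    steps: List[Step] = field(default_factory=list)

    def perturbation_count(self) -> int:
        # Number of steps at which the adversarial operator intervened.
        return sum(s.perturbation is not None for s in self.steps)


def collect_episode(instructions, horizon=20, perturb_prob=0.0, seed=0):
    """Simulate logging one episode.

    perturb_prob=0.0 mimics a conventional static demonstration;
    perturb_prob>0.0 mimics an adversary intervening mid-episode.
    """
    rng = random.Random(seed)
    instruction = instructions[0]
    episode = Episode()
    for t in range(horizon):
        perturbation = None
        if rng.random() < perturb_prob:
            perturbation = rng.choice(PERTURBATIONS)
            if perturbation == "language_command":
                # The adversary may switch the task instruction mid-episode.
                instruction = rng.choice(instructions)
        # Placeholder for the tele-operator: react whenever something changed.
        action = "recover" if perturbation else "continue"
        episode.steps.append(Step(t, instruction, action, perturbation))
    return episode


static_demo = collect_episode(["pick up the cup"], perturb_prob=0.0)
adc_demo = collect_episode(
    ["pick up the cup", "pick up the bottle"], perturb_prob=0.3, seed=1
)
print(static_demo.perturbation_count(), adc_demo.perturbation_count())
```

In this toy setup, the static episode contains no recovery steps, while the perturbed episode interleaves recovery behavior and instruction changes within the same number of steps. That is the sense in which the abstract speaks of raising the informational density of individual demonstrations.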