AerialMegaDepth: Learning Aerial-Ground Reconstruction and View Synthesis

Khiem Vuong, Anurag Ghosh, Deva Ramanan, Srinivasa Narasimhan, Shubham Tulsiani

2025-04-21

Summary

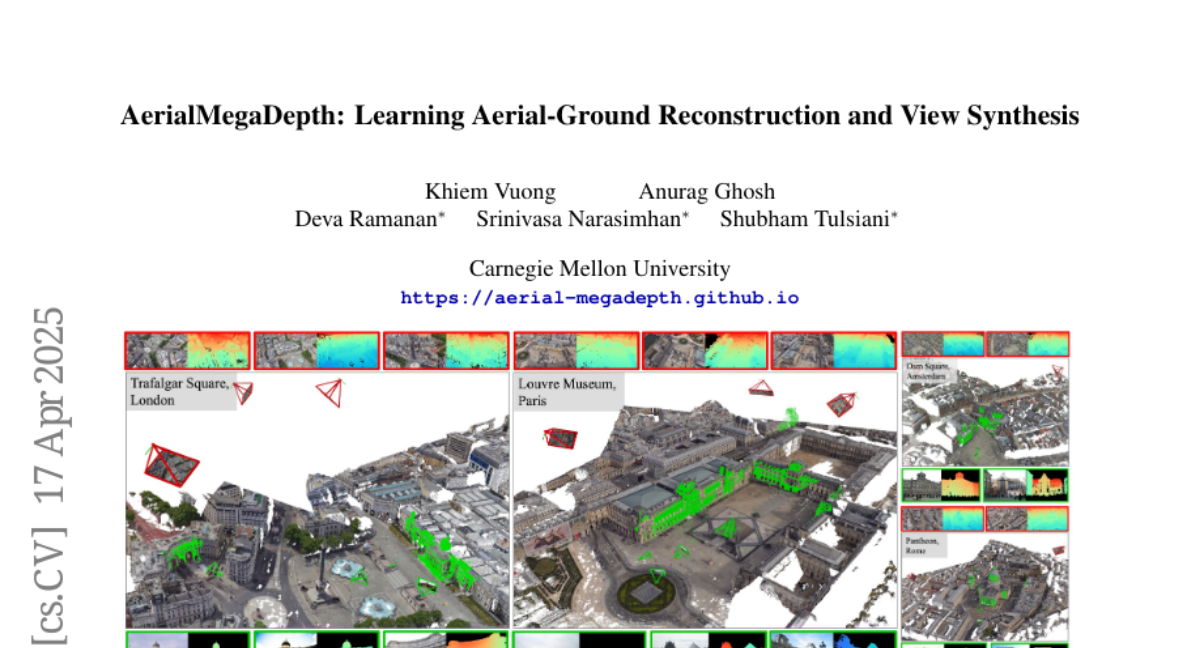

This paper introduces AerialMegaDepth, a new dataset that combines computer-generated (pseudo-synthetic) aerial renderings with real photos taken from the ground. The goal is to help AI models produce better 3D reconstructions and synthesize new viewpoints of a scene.

What's the problem?

Building accurate 3D models and generating new views of a place is difficult. It is especially hard when images taken from the air must be combined with images taken at ground level, because the two perspectives look very different. Most existing datasets lack the variety and the matched aerial and ground-level imagery needed to train AI models that handle both perspectives well.

What's the solution?

The researchers built a large, diverse dataset by combining pseudo-synthetic aerial renderings with real ground-level photos. This hybrid approach gives AI models examples of the same scenes seen from above and from the ground, which leads to more accurate 3D reconstructions and more realistic new viewpoints.

Why does it matter?

This matters because it helps improve technologies like virtual reality, mapping, and robotics, making it easier to create detailed models of the world that can be viewed from any angle.

Abstract

A scalable hybrid dataset combining pseudo-synthetic renderings and real ground-level images improves performance in geometric reconstruction and novel-view synthesis across aerial-ground viewpoints.