Analyzing LLMs' Knowledge Boundary Cognition Across Languages Through the Lens of Internal Representations

Chenghao Xiao, Hou Pong Chan, Hao Zhang, Mahani Aljunied, Lidong Bing, Noura Al Moubayed, Yu Rong

2025-04-21

Summary

This paper talks about how large language models (LLMs) understand the limits of what they know in different languages, and how this understanding is built into certain parts of the model’s brain-like structure.

What's the problem?

The problem is that LLMs sometimes have trouble recognizing when they don’t know something, especially when they’re working in languages other than English. This can lead to mistakes or made-up answers, which makes it harder to trust the model’s responses in multiple languages.

What's the solution?

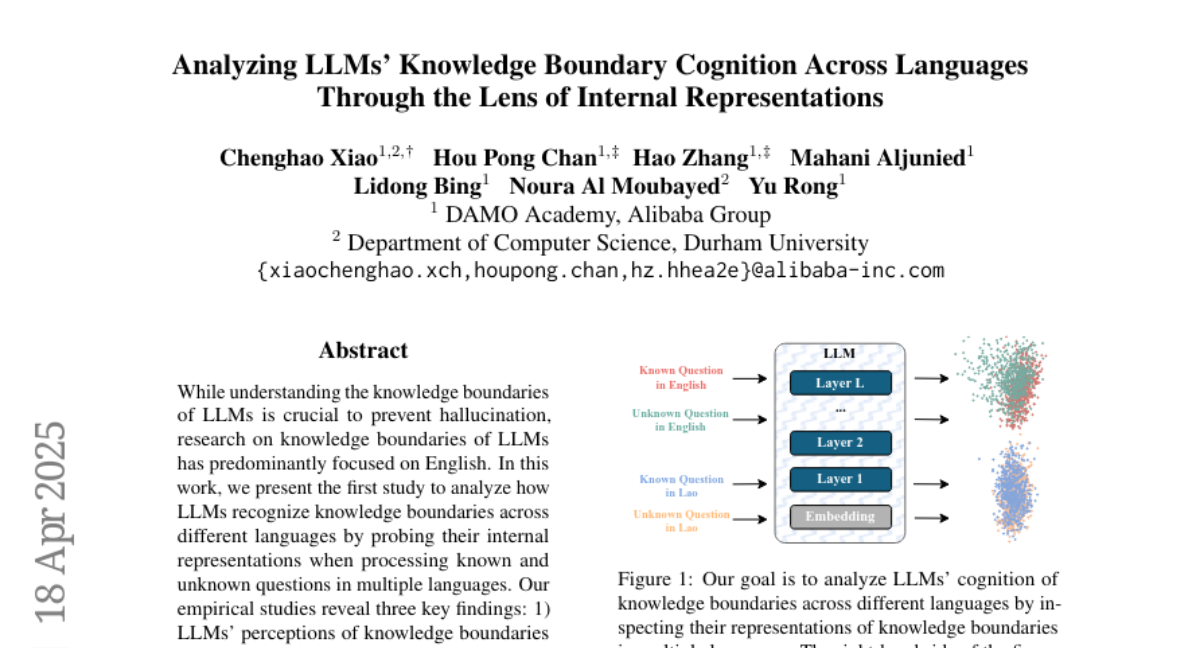

The researchers studied how these models represent their knowledge boundaries inside their layers and found that there are clear, predictable patterns that differ from one language to another. They then created a way to better align these patterns across languages without needing extra training, and also showed that fine-tuning the model with two languages makes it even better at knowing what it doesn’t know in both languages.

Why it matters?

This matters because it helps make language models more reliable and trustworthy when answering questions in different languages, which is important for global users and for making sure AI gives honest and accurate information no matter what language it’s using.

Abstract

LLMs' knowledge boundary perception is encoded in specific layers and exhibits linear differences across languages; a training-free alignment method and bilingual fine-tuning improve cross-lingual knowledge boundary recognition.