AniMaker: Automated Multi-Agent Animated Storytelling with MCTS-Driven Clip Generation

Haoyuan Shi, Yunxin Li, Xinyu Chen, Longyue Wang, Baotian Hu, Min Zhang

2025-06-15

Summary

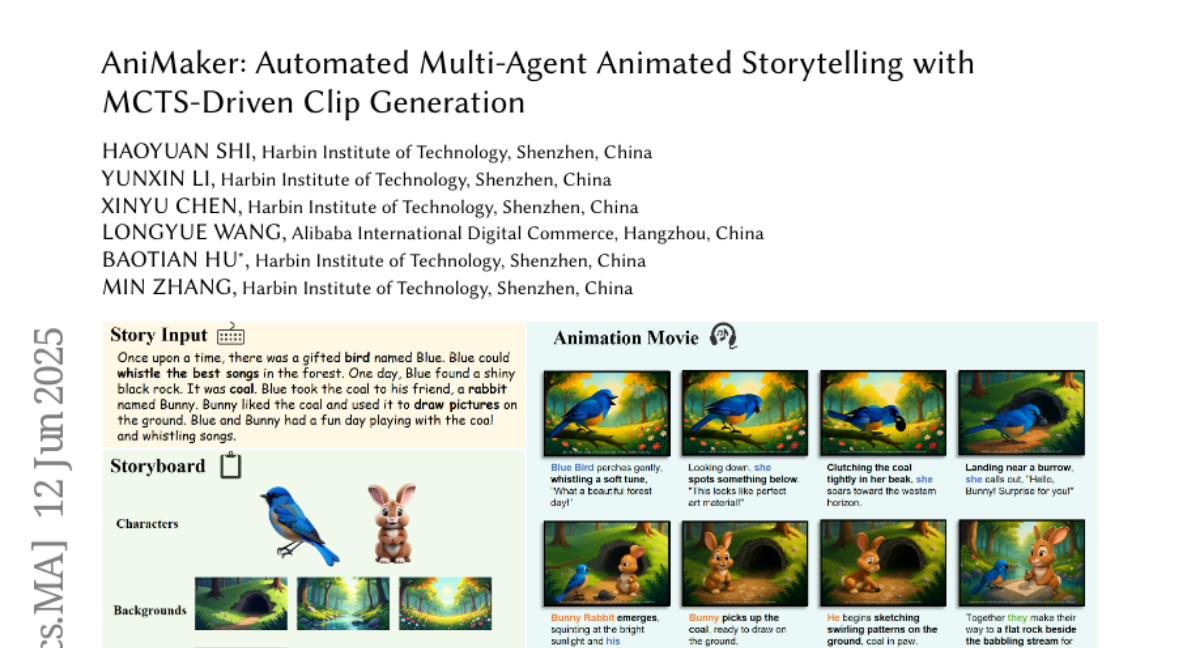

This paper introduces AniMaker, a multi-agent framework in which specialized agents work together to turn a written story into an animated video with multiple characters and scenes. It generates several candidate clips for each part of the story and keeps the ones that best fit the narrative, which makes the result visually consistent and easy to follow, and it outperforms existing models.

What's the problem?

Generating long animated videos with multiple scenes and characters from text is very hard. Current methods often produce disconnected clips that do not flow into one another, and a few low-quality clips can drag down the whole animation.

What's the solution?

The authors built AniMaker, a set of specialized agents that mimic a professional animation team. A search strategy inspired by Monte Carlo Tree Search (MCTS), called MCTS-Gen, efficiently generates many candidate clips and picks the ones that keep the story consistent. A new evaluation method, AniEval, scores how well each candidate clip fits the surrounding story before it is chosen, so that the final video flows well and looks good.
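The generate-then-select idea described above can be illustrated with a small Python sketch. It is not the authors' MCTS-Gen or AniEval implementation: the function name `mcts_style_select`, its parameters (`budget`, `keep`, `c`), and the toy `generate` and `score` callables are all hypothetical stand-ins. The sketch assumes a fixed generation budget per shot, a UCB1 rule to decide which partial story receives the next candidate clip, and a context-aware score that plays the role of the evaluator.

```python
import math
import random
from typing import Callable, List, Tuple

Path = Tuple[str, ...]  # clips chosen so far, one per shot


def mcts_style_select(
    shots: List[str],
    generate: Callable[[str, Path, random.Random], str],  # hypothetical clip generator
    score: Callable[[str, Path], float],                   # hypothetical evaluator
    budget: int = 6,   # candidate clips generated per shot (assumed value)
    keep: int = 2,     # partial stories kept after each shot (assumed value)
    c: float = 1.0,    # UCB1 exploration weight (assumed value)
    seed: int = 0,
) -> List[str]:
    rng = random.Random(seed)
    frontier: List[Tuple[Path, float]] = [((), 0.0)]  # (path, cumulative score)
    for shot in shots:
        rewards = {i: [] for i in range(len(frontier))}   # parent index -> scores seen
        children: List[Tuple[Path, float]] = []
        for t in range(1, budget + 1):
            def ucb(i: int) -> float:
                n = len(rewards[i])
                if n == 0:
                    return math.inf  # try every parent at least once
                return sum(rewards[i]) / n + c * math.sqrt(math.log(t) / n)

            # Spend the next generation on the most promising partial story.
            parent = max(rewards, key=ucb)
            path, total = frontier[parent]
            clip = generate(shot, path, rng)
            r = score(clip, path)  # the score sees the story so far, not just the clip
            rewards[parent].append(r)
            children.append((path + (clip,), total + r))
        # Keep only the best-scoring partial stories for the next shot.
        children.sort(key=lambda item: item[1], reverse=True)
        frontier = children[:keep]
    return list(frontier[0][0])


# Toy stand-ins: a "clip" is the shot name plus a random quality digit.
def toy_generate(shot: str, path: Path, rng: random.Random) -> str:
    return f"{shot}#{rng.randint(0, 9)}"


def toy_score(clip: str, path: Path) -> float:
    return int(clip.rsplit("#", 1)[1]) / 9.0


story = mcts_style_select(["intro", "chase", "ending"], toy_generate, toy_score)
print(story)
```

In a real system the generator would call a video model and the score would come from an evaluator such as AniEval; the point of the sketch is only that the budget is spent unevenly, with more candidates generated where earlier ones scored well.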

Why does it matter?

This work brings automatic generation of high-quality animated story videos from text closer to practice, which could save considerable time and effort while improving quality. It could help storytellers, educators, and creators make engaging videos without expert animation skills.

Abstract

AniMaker, a multi-agent framework using MCTS-Gen and AniEval, generates coherent storytelling videos from text input, outperforming existing models with better quality and efficiency.