AnimeGamer: Infinite Anime Life Simulation with Next Game State Prediction

Junhao Cheng, Yuying Ge, Yixiao Ge, Jing Liao, Ying Shan

2025-04-03

Summary

This paper presents AnimeGamer, a system for interactive anime games in which players take on the role of their favorite characters and live out stories through language commands, with an AI model generating the ever-changing gameplay.

What's the problem?

Existing approaches to interactive anime games translate a player's text dialogue into instructions for image generation. Because they ignore the visual history of the game, scenes can be inconsistent or contradictory, and because they produce only static images, they lack the motion that makes a game engaging.

What's the solution?

The researchers developed AnimeGamer, a system built on Multimodal Large Language Models (MLLMs), which process both text and images. For each turn it generates a game state: an animated shot showing the character's movements, plus updates to the character's state. AnimeGamer takes the representations of earlier animation shots as context when predicting the next one, which keeps the gameplay consistent and logical. The shots are encoded as action-aware multimodal representations, which a video diffusion model decodes into high-quality video clips.

Why does it matter?

This matters because it opens up new possibilities for interactive storytelling and gaming. It allows fans to immerse themselves in anime worlds in ways that weren't possible before, potentially changing how we think about both anime and video games. It also showcases how AI can be used to create more dynamic, responsive, and personalized entertainment experiences.

Abstract

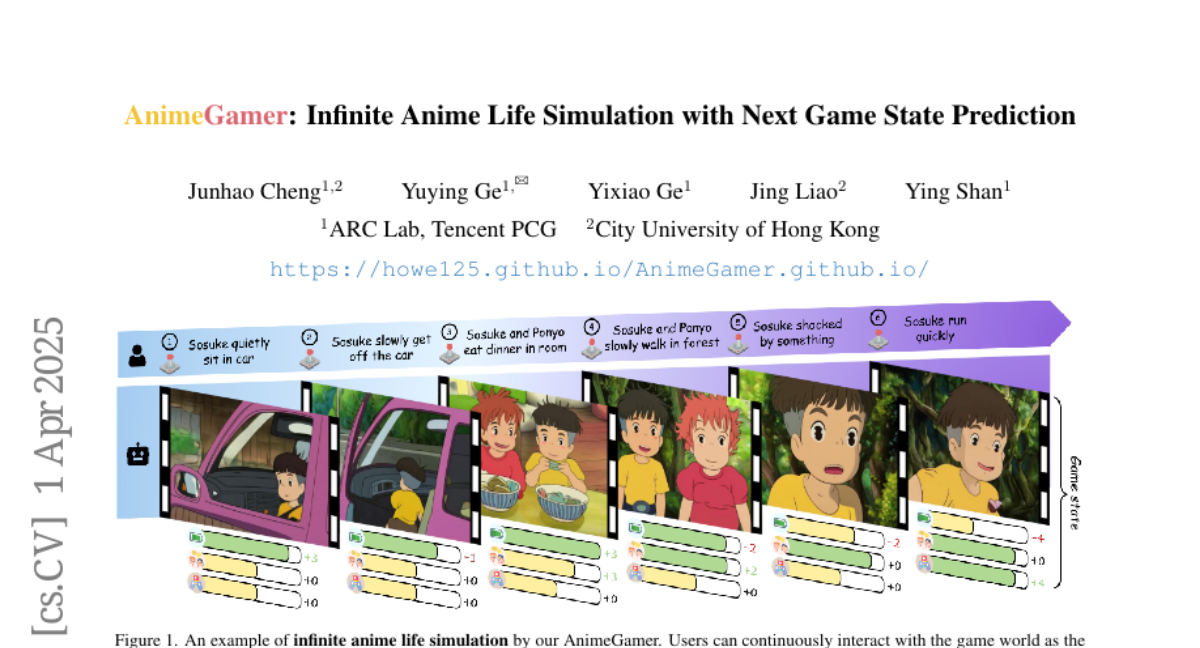

Recent advancements in image and video synthesis have opened up new promise in generative games. One particularly intriguing application is transforming characters from anime films into interactive, playable entities. This allows players to immerse themselves in the dynamic anime world as their favorite characters for life simulation through language instructions. Such games are defined as infinite games since they eliminate predetermined boundaries and fixed gameplay rules, where players can interact with the game world through open-ended language and experience ever-evolving storylines and environments. Recently, a pioneering approach for infinite anime life simulation employs large language models (LLMs) to translate multi-turn text dialogues into language instructions for image generation. However, it neglects historical visual context, leading to inconsistent gameplay. Furthermore, it only generates static images, failing to incorporate the dynamics necessary for an engaging gaming experience. In this work, we propose AnimeGamer, which is built upon Multimodal Large Language Models (MLLMs) to generate each game state, including dynamic animation shots that depict character movements and updates to character states, as illustrated in Figure 1. We introduce novel action-aware multimodal representations to represent animation shots, which can be decoded into high-quality video clips using a video diffusion model. By taking historical animation shot representations as context and predicting subsequent representations, AnimeGamer can generate games with contextual consistency and satisfactory dynamics. Extensive evaluations using both automated metrics and human evaluations demonstrate that AnimeGamer outperforms existing methods in various aspects of the gaming experience. Code and checkpoints are available at https://github.com/TencentARC/AnimeGamer.
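
The next-game-state loop described in the abstract can be illustrated with a minimal Python sketch. This is not the authors' implementation: `GameState`, `GameSession`, `toy_predict`, `toy_decode`, and the `stamina` field are hypothetical names chosen for illustration, and the predictor and decoder are toy stand-ins for the MLLM and the video diffusion model. The sketch only shows the control flow, in which each new state is predicted from the full history of earlier turns and then decoded into a clip.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class GameState:
    """One turn of the game (hypothetical structure, for illustration only)."""
    shot_repr: List[float]            # stand-in for an animation shot representation
    character_state: Dict[str, int]   # e.g. {"stamina": 9}; field name is an assumption


History = List[Tuple[str, GameState]]


@dataclass
class GameSession:
    """Keeps the visual history and predicts each state from it."""
    predict: Callable[[History, str], GameState]  # stand-in for the MLLM
    decode: Callable[[List[float]], str]          # stand-in for the video diffusion decoder
    history: History = field(default_factory=list)

    def step(self, instruction: str) -> str:
        # Condition the prediction on every earlier (instruction, state) pair.
        state = self.predict(self.history, instruction)
        self.history.append((instruction, state))
        # Decode the predicted shot representation into a "clip".
        return self.decode(state.shot_repr)


def toy_predict(history: History, instruction: str) -> GameState:
    """Toy predictor: encodes the turn index and lowers stamina by one per turn."""
    previous = history[-1][1].character_state["stamina"] if history else 10
    return GameState(
        shot_repr=[float(len(history)), float(len(instruction))],
        character_state={"stamina": previous - 1},
    )


def toy_decode(shot_repr: List[float]) -> str:
    """Toy decoder: renders the representation as a descriptive string."""
    return f"clip(turn={int(shot_repr[0])}, len={int(shot_repr[1])})"


session = GameSession(predict=toy_predict, decode=toy_decode)
clips = [session.step(text) for text in ["go to the forest", "pick flowers"]]
```

Because `step` passes the whole `history` to the predictor, the second turn can depend on what happened in the first, which is the property the abstract credits for contextual consistency. A text-only pipeline without this history would have to regenerate each scene from the instruction alone.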