Any2Caption: Interpreting Any Condition to Caption for Controllable Video Generation

Shengqiong Wu, Weicai Ye, Jiahao Wang, Quande Liu, Xintao Wang, Pengfei Wan, Di Zhang, Kun Gai, Shuicheng Yan, Hao Fei, Tat-Seng Chua

2025-04-02

Summary

This paper introduces a way to generate videos whose content can be controlled with text, images, videos, or more specialized cues such as regions, motion, and camera poses.

What's the problem?

It's hard for current AI video generators to accurately understand what users want and create videos that match their vision.

What's the solution?

The researchers developed a system called Any2Caption that uses multimodal large language models (MLLMs) to translate different types of instructions into detailed, structured captions, which then guide the video generation process.

Why does it matter?

This work matters because it can lead to more creative and user-friendly video creation tools.

Abstract

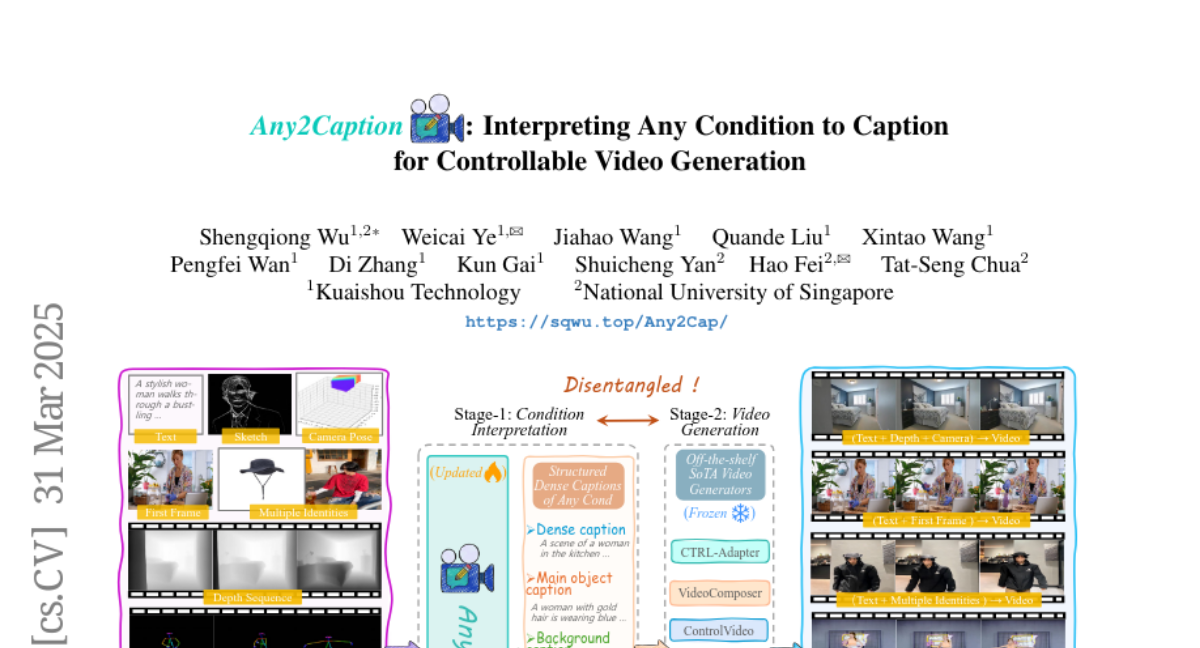

To address the bottleneck of accurate user intent interpretation within the current video generation community, we present Any2Caption, a novel framework for controllable video generation under any condition. The key idea is to decouple various condition interpretation steps from the video synthesis step. By leveraging modern multimodal large language models (MLLMs), Any2Caption interprets diverse inputs (text, images, videos, and specialized cues such as region, motion, and camera poses) into dense, structured captions that provide backbone video generators with better guidance. We also introduce Any2CapIns, a large-scale dataset with 337K instances and 407K conditions for any-condition-to-caption instruction tuning. Comprehensive evaluations demonstrate significant improvements of our system in controllability and video quality across various aspects of existing video generation models. Project Page: https://sqwu.top/Any2Cap/
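
The decoupling described in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: `Condition`, `interpret_conditions`, `dummy_backbone`, and `any_condition_to_video` are hypothetical names, and a plain string template stands in for the MLLM that produces the structured caption. The only point is that the video backbone receives nothing but a caption, so the interpretation stage can be swapped or improved independently.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Condition:
    """One user-supplied control signal (hypothetical container)."""
    kind: str     # e.g. "text", "image", "region", "motion", "camera"
    payload: str  # placeholder; a real system would carry tensors or file paths


def interpret_conditions(conditions: List[Condition]) -> str:
    """Stage 1 stand-in: merge heterogeneous conditions into one caption.

    Assumption: the paper uses an MLLM here; this template only shows the
    interface (many conditions in, one structured caption out).
    """
    ordered = sorted(conditions, key=lambda c: c.kind)
    return " | ".join(f"[{c.kind}] {c.payload}" for c in ordered)


def dummy_backbone(caption: str) -> str:
    """Stage 2 stand-in: any text-conditioned video generator."""
    return f"<video conditioned on: {caption}>"


def any_condition_to_video(
    conditions: List[Condition],
    backbone: Callable[[str], str],
) -> str:
    """Run interpretation first, then hand only the caption to the backbone."""
    caption = interpret_conditions(conditions)
    return backbone(caption)


if __name__ == "__main__":
    conds = [
        Condition("text", "a cat runs across a lawn"),
        Condition("camera", "slow pan left"),
    ]
    print(any_condition_to_video(conds, dummy_backbone))
```

Because the backbone is passed in as a plain callable that accepts a caption, the same interpretation stage could in principle sit in front of different video generators without modification.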