any4: Learned 4-bit Numeric Representation for LLMs

Mostafa Elhoushi, Jeff Johnson

2025-07-09

Summary

This paper introduces any4, a method that makes large language models smaller and faster by storing each of their weights in only 4 bits instead of the usual 16 bits.

What's the problem?

Large language models contain billions of numbers called weights. Storing and moving these weights takes a lot of memory, which makes the models slow and expensive to run.

What's the solution?

The researchers developed any4, which learns a custom mapping for each row of weights so that the row can be represented accurately in 4 bits without extra preprocessing. Each weight is stored as a 4-bit code, and a lookup table quickly converts these codes back to close approximations of the original values during computation, keeping the model accurate and efficient.
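The idea of a learned per-row mapping with lookup-table decoding can be illustrated with a minimal sketch. It assumes plain 1-D k-means as the learning step, which is a stand-in chosen for illustration and not necessarily the authors' procedure. The function names `learn_row_lut`, `nearest`, and `dequantize_row` are hypothetical, and the weights are random numbers rather than real model weights.

```python
import random

def nearest(lut, w):
    """Return the index of the lookup-table entry closest to w."""
    return min(range(len(lut)), key=lambda k: abs(lut[k] - w))

def learn_row_lut(row, n_levels=16, n_iters=20):
    """Fit n_levels values to one row with 1-D k-means (an assumed stand-in)."""
    ordered = sorted(row)
    # Start the table at evenly spaced order statistics of the row.
    lut = [ordered[(len(ordered) - 1) * k // (n_levels - 1)]
           for k in range(n_levels)]
    for _ in range(n_iters):
        codes = [nearest(lut, w) for w in row]
        for k in range(n_levels):
            members = [w for w, c in zip(row, codes) if c == k]
            if members:  # leave an entry unchanged if no weight maps to it
                lut[k] = sum(members) / len(members)
    # Each code is in 0..15, so it fits in 4 bits.
    return lut, [nearest(lut, w) for w in row]

def dequantize_row(lut, codes):
    """Turn 4-bit codes back into approximate weights by table lookup."""
    return [lut[c] for c in codes]

# Illustrative data only: 4 rows of 256 random weights.
random.seed(0)
weights = [[random.gauss(0.0, 1.0) for _ in range(256)] for _ in range(4)]
quantized = [learn_row_lut(row) for row in weights]  # one table per row
restored = [dequantize_row(lut, codes) for lut, codes in quantized]
```

Each row ends up with its own 16-entry table, so the stored data per row is one small table plus one 4-bit code per weight. The sketch keeps the codes as ordinary Python integers. A real implementation would pack two codes into each byte and do the lookup on the GPU.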

Why does it matter?

This matters because any4 lets large AI models run faster and use less memory. That makes them easier to run on less powerful devices and reduces costs, while keeping their accuracy high.

Abstract

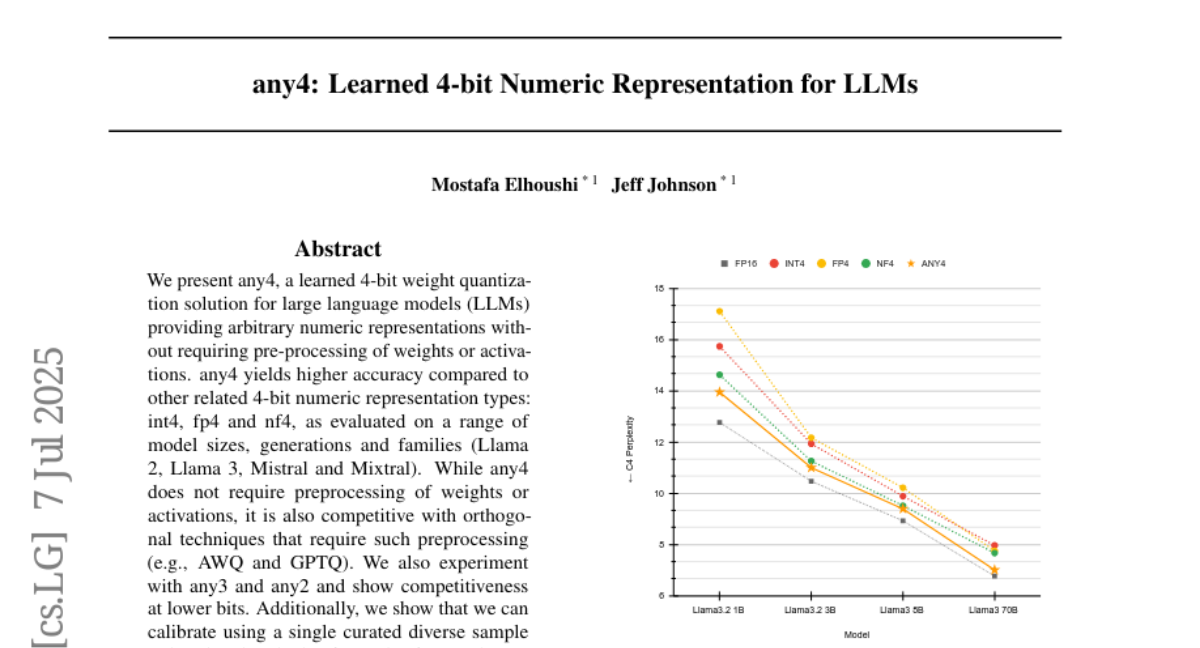

any4 is a learned 4-bit weight quantization method for large language models that achieves high accuracy without preprocessing and uses a GPU-efficient lookup table strategy.