Any6D: Model-free 6D Pose Estimation of Novel Objects

Taeyeop Lee, Bowen Wen, Minjun Kang, Gyuree Kang, In So Kweon, Kuk-Jin Yoon

2025-03-26

Summary

This paper is about creating a way for computers to figure out where objects are in 3D space, even if the computer has never seen those objects before.

What's the problem?

It's hard for computers to understand the position and size of objects they haven't been specifically trained to recognize, especially with limited information.

What's the solution?

The researchers developed a new method that only needs a single image to estimate the 3D position and size of an unknown object in a scene, even if there are obstacles or bad lighting.

Why it matters?

This work matters because it can improve robots' ability to interact with the real world, as well as help computers understand their surroundings better.

Abstract

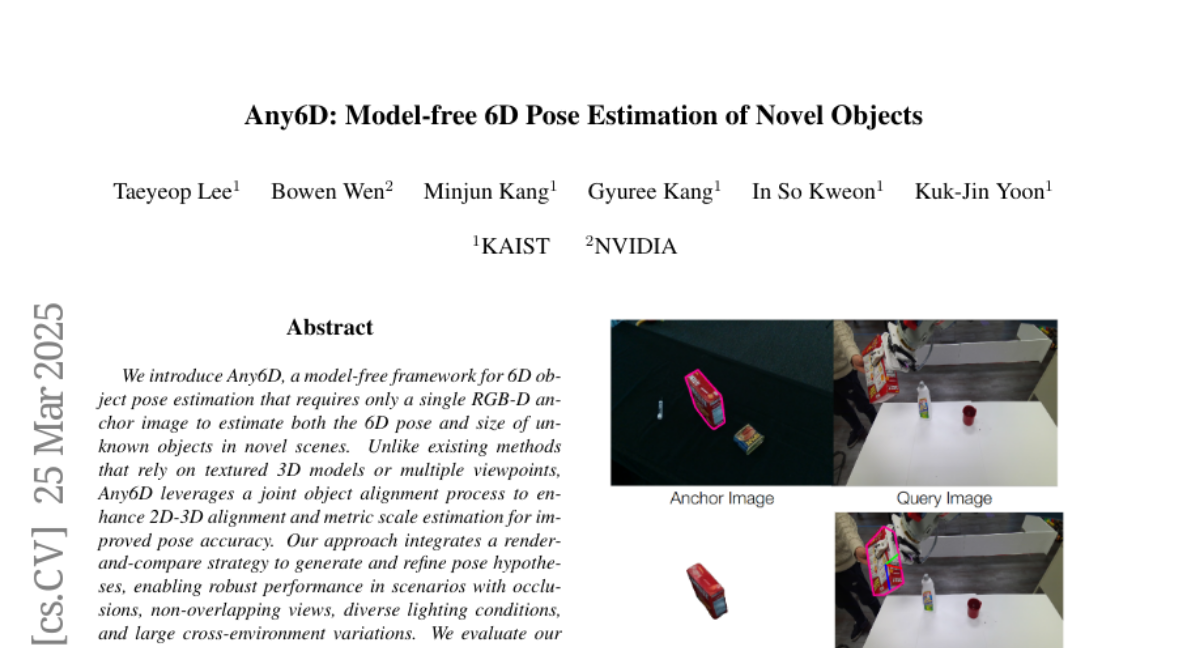

We introduce Any6D, a model-free framework for 6D object pose estimation that requires only a single RGB-D anchor image to estimate both the 6D pose and size of unknown objects in novel scenes. Unlike existing methods that rely on textured 3D models or multiple viewpoints, Any6D leverages a joint object alignment process to enhance 2D-3D alignment and metric scale estimation for improved pose accuracy. Our approach integrates a render-and-compare strategy to generate and refine pose hypotheses, enabling robust performance in scenarios with occlusions, non-overlapping views, diverse lighting conditions, and large cross-environment variations. We evaluate our method on five challenging datasets: REAL275, Toyota-Light, HO3D, YCBINEOAT, and LM-O, demonstrating its effectiveness in significantly outperforming state-of-the-art methods for novel object pose estimation. Project page: https://taeyeop.com/any6d