Are We Done with Object-Centric Learning?

Alexander Rubinstein, Ameya Prabhu, Matthias Bethge, Seong Joon Oh

2025-04-10

Summary

This paper asks whether the problem of teaching AI to focus on individual objects in images, like separating a cat from its surroundings, is effectively solved, and how much that ability helps AI handle new, unfamiliar situations.

What's the problem?

Modern segmentation tools can now separate objects in images well, but it is unclear whether this actually helps AI generalize to new scenarios, like recognizing objects against different backgrounds or under different lighting.

What's the solution?

The paper proposes a training-free probe (OCCAM) that uses existing segmentation tools to mask out backgrounds and focus the classifier on just the objects. Encoding objects this way handles unfamiliar situations better than older slot-based object-centric methods.

Why does it matter?

This helps improve AI systems that need to work reliably in real-world settings, like self-driving cars or medical imaging, where objects might appear in unexpected ways, and pushes research toward understanding how humans perceive objects.

Abstract

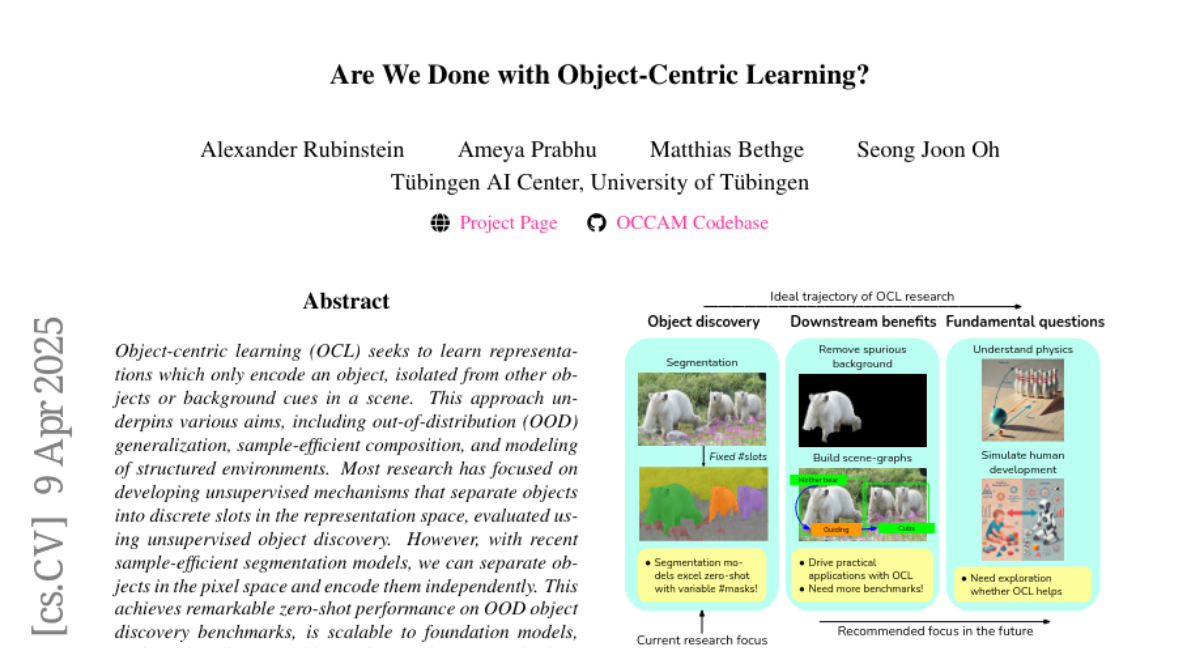

Object-centric learning (OCL) seeks to learn representations that only encode an object, isolated from other objects or background cues in a scene. This approach underpins various aims, including out-of-distribution (OOD) generalization, sample-efficient composition, and modeling of structured environments. Most research has focused on developing unsupervised mechanisms that separate objects into discrete slots in the representation space, evaluated using unsupervised object discovery. However, with recent sample-efficient segmentation models, we can separate objects in the pixel space and encode them independently. This achieves remarkable zero-shot performance on OOD object discovery benchmarks, is scalable to foundation models, and can handle a variable number of slots out-of-the-box. Hence, the goal of OCL methods to obtain object-centric representations has been largely achieved. Despite this progress, a key question remains: How does the ability to separate objects within a scene contribute to broader OCL objectives, such as OOD generalization? We address this by investigating the OOD generalization challenge caused by spurious background cues through the lens of OCL. We propose a novel, training-free probe called Object-Centric Classification with Applied Masks (OCCAM), demonstrating that segmentation-based encoding of individual objects significantly outperforms slot-based OCL methods. However, challenges in real-world applications remain. We provide the toolbox for the OCL community to use scalable object-centric representations, and focus on practical applications and fundamental questions, such as understanding object perception in human cognition. Our code is available at https://github.com/AlexanderRubinstein/OCCAM.
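To make the idea of separating objects in pixel space more concrete, here is a minimal sketch of the masking step only. It is not the authors' implementation: the function names `mask_object` and `crop_to_mask` are hypothetical, the mask is hand-made here rather than produced by a segmentation model, and the downstream encoder and classifier are omitted.

```python
import numpy as np


def mask_object(image, mask, fill_value=0):
    """Keep pixels where mask is True; set all other pixels to fill_value.

    Hypothetical helper for illustration, not part of the OCCAM codebase.
    image: array of shape (H, W) or (H, W, C); mask: boolean array of shape (H, W).
    """
    image = np.asarray(image)
    mask = np.asarray(mask, dtype=bool)
    if mask.shape != image.shape[:2]:
        raise ValueError("mask must match the image's height and width")
    out = np.full_like(image, fill_value)
    out[mask] = image[mask]  # copy only the object's pixels
    return out


def crop_to_mask(image, mask):
    """Crop the image to the tight bounding box of the mask (hypothetical helper)."""
    mask = np.asarray(mask, dtype=bool)
    if not mask.any():
        raise ValueError("mask is empty")
    rows = np.flatnonzero(mask.any(axis=1))
    cols = np.flatnonzero(mask.any(axis=0))
    return image[rows[0]:rows[-1] + 1, cols[0]:cols[-1] + 1]


# Toy example: a 4x4 RGB image with an assumed object mask covering the 2x2 centre.
image = np.arange(4 * 4 * 3, dtype=np.uint8).reshape(4, 4, 3)
mask = np.zeros((4, 4), dtype=bool)
mask[1:3, 1:3] = True

masked = mask_object(image, mask)        # background pixels replaced by 0
object_crop = crop_to_mask(masked, mask)  # object-only crop to pass to an encoder
```

In a pipeline of this kind, each object's masked crop would be encoded independently, which is why the number of objects per image does not need to be fixed in advance.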