ARIG: Autoregressive Interactive Head Generation for Real-time Conversations

Ying Guo, Xi Liu, Cheng Zhen, Pengfei Yan, Xiaoming Wei

2025-07-03

Summary

This paper presents ARIG, a new AI framework that generates realistic head movements and facial expressions for virtual avatars during real-time conversations. It lets an avatar respond with natural, smooth motions that match both what is being said and the flow of the conversation.

What's the problem?

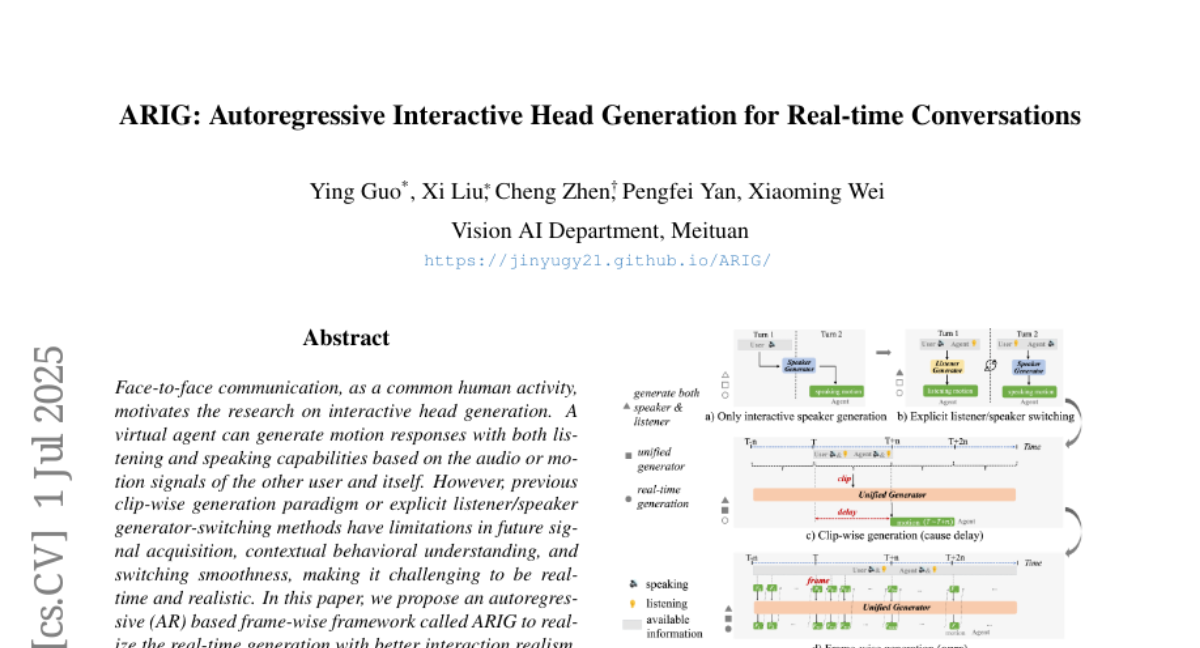

Previous methods for generating talking-head animations often produce stiff or unnatural movements, struggle to switch smoothly between speaking and listening roles, and cannot react quickly enough for live conversations.

What's the solution?

The researchers designed ARIG as an autoregressive model: it generates each frame of head motion from the frames before it, so the animation can be updated in real time. ARIG includes modules that capture both short-term and long-term conversational behavior by analyzing audio and visual signals from both participants, and it detects conversational states such as interruptions and pauses to guide the animation more accurately.
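As a rough illustration of the autoregressive, frame-by-frame idea described above, the sketch below produces one scalar motion value per frame (think of it as head pitch) from the frames already generated, while a toy conversational-state detector decides how strongly audio drives the motion. Everything here is a hypothetical assumption for illustration: `FrameInput`, `detect_state`, `predict_next`, `generate_stream`, the per-speaker audio-energy features, the threshold, and the gain table are not taken from the paper. ARIG itself uses learned networks over audio and visual features and also models long-term context, which this sketch omits.

```python
from dataclasses import dataclass
from typing import Dict, List, Sequence, Tuple

# Hypothetical per-state gains on audio energy; not values from the paper.
GAIN: Dict[str, float] = {
    "speaking": 1.0,
    "interrupting": 0.5,
    "listening": 0.2,
    "pausing": 0.0,
}


@dataclass
class FrameInput:
    """Toy per-frame features (assumption): one audio energy per participant."""
    user_audio_energy: float   # the other speaker
    agent_audio_energy: float  # the avatar's own speech


def detect_state(x: FrameInput, threshold: float = 0.1) -> str:
    """Rule-based stand-in for a learned conversational-state detector."""
    user_active = x.user_audio_energy > threshold
    agent_active = x.agent_audio_energy > threshold
    if user_active and agent_active:
        return "interrupting"  # both participants talking at once
    if agent_active:
        return "speaking"
    if user_active:
        return "listening"
    return "pausing"


def predict_next(history: Sequence[float], x: FrameInput, state: str,
                 context: int = 4, decay: float = 0.8) -> float:
    """Predict the next motion value from recent frames plus the current input."""
    recent = history[-context:]
    smoothed = sum(recent) / len(recent)  # short-term motion context only
    drive = max(x.user_audio_energy, x.agent_audio_energy)
    return decay * smoothed + GAIN[state] * drive


def generate_stream(inputs: Sequence[FrameInput],
                    init: float = 0.0) -> Tuple[List[float], List[str]]:
    """Autoregressive loop: each frame sees only past outputs and the current input."""
    history: List[float] = [init]
    states: List[str] = []
    for x in inputs:  # frames arrive one at a time; no future input is used
        state = detect_state(x)
        history.append(predict_next(history, x, state))
        states.append(state)
    return history[1:], states


frames = [FrameInput(0.0, 0.5), FrameInput(0.4, 0.0),
          FrameInput(0.0, 0.0), FrameInput(0.3, 0.3)]
motion, states = generate_stream(frames)
print(states)  # ['speaking', 'listening', 'pausing', 'interrupting']
```

Because each output depends only on earlier frames and the current input, cutting the stream short leaves the earlier outputs unchanged. That causal property is what allows a model of this kind to run in a live setting instead of waiting for the whole conversation.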

Why does it matter?

It helps create more lifelike and engaging virtual characters for video calls, games, and digital assistants. Interactions that feel more natural and human-like can improve communication and the overall user experience.

Abstract

An autoregressive framework for real-time interactive head generation improves interaction realism through bidirectional learning and detailed conversational state understanding.