Articulated Kinematics Distillation from Video Diffusion Models

Xuan Li, Qianli Ma, Tsung-Yi Lin, Yongxin Chen, Chenfanfu Jiang, Ming-Yu Liu, Donglai Xiang

2025-04-03

Summary

This paper presents a method for animating rigged 3D characters. It combines traditional skeleton-based animation with motion knowledge distilled from pre-trained video diffusion models.

What's the problem?

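Creating realistic and controllable character animations is difficult. Existing text-to-4D methods that rely on 4D neural deformation fields have many degrees of freedom and struggle to keep the character's shape consistent, which also makes physically realistic movement hard to achieve.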

What's the solution?

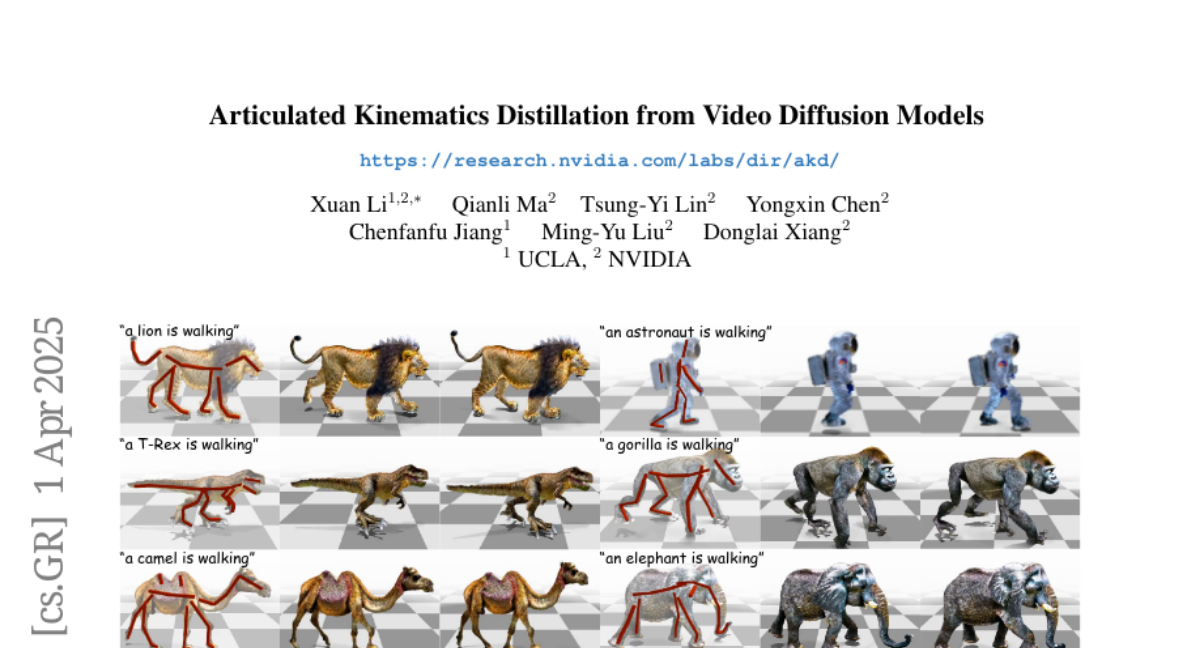

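The researchers developed a framework called Articulated Kinematics Distillation (AKD). It represents a rigged 3D character by its skeleton, so motion is controlled at the joint level with far fewer degrees of freedom. It then uses Score Distillation Sampling (SDS) with a pre-trained video diffusion model to distill articulated motion while keeping the character's structure intact.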

Why does it matter?

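This work matters because skeleton-based motion keeps characters consistent in 3D and is naturally compatible with physics-based simulation. That can lead to more realistic and engaging character animations in video games, movies, and other applications.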

Abstract

We present Articulated Kinematics Distillation (AKD), a framework for generating high-fidelity character animations by merging the strengths of skeleton-based animation and modern generative models. AKD uses a skeleton-based representation for rigged 3D assets, drastically reducing the Degrees of Freedom (DoFs) by focusing on joint-level control, which allows for efficient, consistent motion synthesis. Through Score Distillation Sampling (SDS) with pre-trained video diffusion models, AKD distills complex, articulated motions while maintaining structural integrity, overcoming challenges faced by 4D neural deformation fields in preserving shape consistency. This approach is naturally compatible with physics-based simulation, ensuring physically plausible interactions. Experiments show that AKD achieves superior 3D consistency and motion quality compared with existing works on text-to-4D generation. Project page: https://research.nvidia.com/labs/dir/akd/
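To make the abstract's point about joint-level control concrete, here is a minimal sketch of forward kinematics for a planar bone chain. It is a toy illustration, not the authors' implementation: the function name `forward_kinematics`, the 2D setting, and the bone lengths are all assumptions chosen for brevity. The idea it shows is that only the joint angles are free parameters, while bone lengths come from the rig and stay fixed, so any motion expressed this way preserves the structure by construction.

```python
import math

def forward_kinematics(bone_lengths, joint_angles):
    """Return the 2D position of every joint in a planar kinematic chain.

    Hypothetical helper for illustration. Each angle is relative to the
    parent bone, so rotating one joint rigidly moves all bones after it.
    """
    x, y, heading = 0.0, 0.0, 0.0
    positions = [(x, y)]  # root joint at the origin
    for length, angle in zip(bone_lengths, joint_angles):
        heading += angle                  # accumulate rotation down the chain
        x += length * math.cos(heading)
        y += length * math.sin(heading)
        positions.append((x, y))
    return positions

# Bone lengths are fixed by the rig and are never optimized.
bone_lengths = [1.0, 1.0, 0.5]
# The joint angles are the only degrees of freedom (three numbers here).
joint_angles = [math.pi / 2, -math.pi / 2, 0.0]

joints = forward_kinematics(bone_lengths, joint_angles)

# Distances between consecutive joints, which should match bone_lengths
# regardless of the angles chosen.
segment_lengths = [math.dist(a, b) for a, b in zip(joints, joints[1:])]
```

In a distillation setting, one would presumably optimize a sequence of such joint angles over time rather than a free-form deformation of every surface point, which is where the reduction in degrees of freedom comes from.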