Attention IoU: Examining Biases in CelebA using Attention Maps

Aaron Serianni, Tyler Zhu, Olga Russakovsky, Vikram V. Ramaswamy

2025-03-27

Summary

This paper uses attention maps to find biases in computer vision models trained on the CelebA dataset.

What's the problem?

Computer vision models can learn and amplify biases in their training data. Existing bias measures look mainly at dataset statistics and accuracy on subgroups, so it is hard to see how these biases show up inside the model.

What's the solution?

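The researchers introduce Attention-IoU (Attention Intersection over Union), a metric that uses attention maps to measure which image regions a model relies on. This helps reveal biases in the model's internal representations and points to the image features that may be causing them.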
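To make the overlap idea concrete, here is a minimal Python sketch of one way to score how much two attention maps coincide. It assumes a soft (weighted Jaccard) IoU. Each non-negative map is normalized to sum to 1, the intersection is the elementwise minimum, and the union is the elementwise maximum. The helper name `soft_iou`, the toy maps, and this exact formula are illustrative assumptions. The paper's Attention-IoU may normalize or aggregate maps differently.

```python
import numpy as np


def soft_iou(map_a: np.ndarray, map_b: np.ndarray, eps: float = 1e-8) -> float:
    """Soft IoU between two non-negative attention maps of equal shape.

    Hypothetical helper: an illustrative weighted-Jaccard score, not
    necessarily the paper's exact Attention-IoU formula.
    """
    if map_a.shape != map_b.shape:
        raise ValueError("attention maps must have the same shape")
    # Clip negatives and copy to float so the caller's arrays are not modified.
    a = np.clip(map_a, 0, None).astype(float)
    b = np.clip(map_b, 0, None).astype(float)
    # Normalize so the score compares where the model looks, not how strongly.
    a /= a.sum() + eps
    b /= b.sum() + eps
    intersection = np.minimum(a, b).sum()
    union = np.maximum(a, b).sum()
    return float(intersection / (union + eps))


# Toy 4x4 "attention maps" (hypothetical, for illustration only).
mouth = np.zeros((4, 4))
mouth[3, 1:3] = 1.0      # attends to two cells in the bottom row
eyes = np.zeros((4, 4))
eyes[1, :] = 1.0         # attends to the whole second row
shifted = np.zeros((4, 4))
shifted[3, 2:4] = 1.0    # shares one of its two cells with `mouth`

print(round(soft_iou(mouth, mouth), 3))    # identical maps -> 1.0
print(round(soft_iou(mouth, eyes), 3))     # disjoint maps -> 0.0
print(round(soft_iou(mouth, shifted), 3))  # partial overlap -> 0.333
```

Under this reading, a high score between a map for a target attribute and a map for the protected attribute would suggest that the model attends to the same regions for both. That pairing is also an assumption about how such a score could be used.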

Why does it matter?

This work offers a way to see where biases arise inside a model, not just in its outputs. That can help researchers address those biases and build fairer, more reliable models.

Abstract

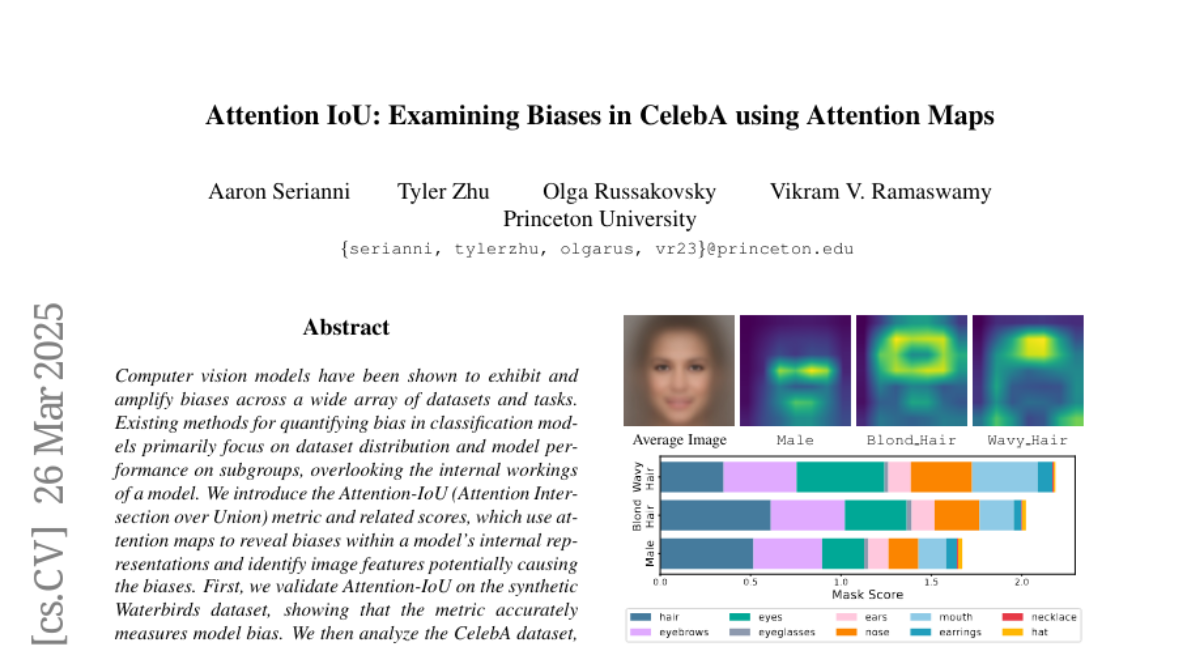

Computer vision models have been shown to exhibit and amplify biases across a wide array of datasets and tasks. Existing methods for quantifying bias in classification models primarily focus on dataset distribution and model performance on subgroups, overlooking the internal workings of a model. We introduce the Attention-IoU (Attention Intersection over Union) metric and related scores, which use attention maps to reveal biases within a model's internal representations and identify image features potentially causing the biases. First, we validate Attention-IoU on the synthetic Waterbirds dataset, showing that the metric accurately measures model bias. We then analyze the CelebA dataset, finding that Attention-IoU uncovers correlations beyond accuracy disparities. By investigating how individual attributes relate to the protected attribute Male, we examine the distinct ways biases are represented in CelebA. Lastly, by subsampling the training set to change attribute correlations, we demonstrate that Attention-IoU reveals potential confounding variables not present in dataset labels.
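The final experiment changes attribute correlations by subsampling the training set. The Python sketch below shows one generic way to do this, under stated assumptions. It takes binary labels for a target attribute and a protected attribute. It then draws a subset in which a chosen fraction of target-positive images and a chosen fraction of target-negative images are protected-positive, keeping as many images as possible. The function `subsample_indices`, its parameters, and the toy labels are hypothetical. The authors' actual subsampling procedure may differ.

```python
import numpy as np


def subsample_indices(target, protected, frac_pos, frac_neg, seed=0):
    """Hypothetical helper: return sorted indices of a subset in which about
    `frac_pos` of target-positive samples and `frac_neg` of target-negative
    samples are protected-positive (up to integer rounding)."""
    rng = np.random.default_rng(seed)
    target = np.asarray(target, dtype=bool)
    protected = np.asarray(protected, dtype=bool)
    kept = []
    for cls, frac in ((True, frac_pos), (False, frac_neg)):
        if not 0.0 <= frac <= 1.0:
            raise ValueError("fractions must lie in [0, 1]")
        idx_p = np.flatnonzero((target == cls) & protected)
        idx_n = np.flatnonzero((target == cls) & ~protected)
        n_p, n_n = len(idx_p), len(idx_n)
        if frac == 0.0:
            k_p, k_n = 0, n_n
        elif frac == 1.0:
            k_p, k_n = n_p, 0
        elif n_p / frac <= n_n / (1.0 - frac):
            # Protected-positive samples are the bottleneck: keep all of them.
            k_p = n_p
            k_n = min(n_n, round(n_p * (1.0 - frac) / frac))
        else:
            # Protected-negative samples are the bottleneck: keep all of them.
            k_n = n_n
            k_p = min(n_p, round(n_n * frac / (1.0 - frac)))
        # Sample without replacement within each (target, protected) group.
        kept.append(rng.choice(idx_p, size=k_p, replace=False))
        kept.append(rng.choice(idx_n, size=k_n, replace=False))
    return np.sort(np.concatenate(kept))


# Toy labels (hypothetical): 100 target-positive and 100 target-negative
# samples, half of each protected-positive, so the attributes start uncorrelated.
target = np.array([1] * 100 + [0] * 100, dtype=bool)
protected = np.array([1, 0] * 100, dtype=bool)

idx = subsample_indices(target, protected, frac_pos=0.8, frac_neg=0.2)
print(len(idx))                                            # 124
print(round(protected[idx][target[idx]].mean(), 3))        # 0.806
print(round(protected[idx][~target[idx]].mean(), 3))       # 0.194
```

Keeping the bottleneck group whole and subsampling the other keeps the subset as large as possible for the chosen fractions. Setting `frac_pos` equal to `frac_neg` would instead remove the correlation between the two attributes, up to rounding.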