Attention, Please! Revisiting Attentive Probing for Masked Image Modeling

Bill Psomas, Dionysis Christopoulos, Eirini Baltzi, Ioannis Kakogeorgiou, Tilemachos Aravanis, Nikos Komodakis, Konstantinos Karantzalos, Yannis Avrithis, Giorgos Tolias

2025-06-15

Summary

This paper revisits attentive probing, a way of evaluating a pretrained image model by training only a small attention module on top of its frozen features. It proposes a more efficient version based on multi-query cross-attention, which lets the probe focus on the important parts of the image while using fewer resources.

What's the problem?

The problem is that existing attentive probing methods need a lot of computing power and many trainable parameters, which makes them slow and expensive. This makes it hard to evaluate or use these models on big tasks or on devices with limited capabilities.

What's the solution?

The authors use a multi-query cross-attention mechanism, which reduces the number of parameters and the amount of computation by sharing keys and values among the attention heads while keeping multiple queries. This makes attentive probing faster and more efficient without losing performance.
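The idea of several queries attending over one shared set of keys and values can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names (`multi_query_probe`, `kv_params`), the projection matrices `w_k` and `w_v`, and all dimensions are hypothetical and chosen only for the example.

```python
import numpy as np


def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_query_probe(tokens, queries, w_k, w_v):
    """Pool frozen patch features with several queries (illustrative only).

    tokens:  (n, d)    patch features from a frozen encoder
    queries: (m, d_k)  learnable query vectors, one per "head"
    w_k:     (d, d_k)  key projection shared by all queries (assumed)
    w_v:     (d, d_v)  value projection shared by all queries (assumed)
    """
    keys = tokens @ w_k      # (n, d_k), computed once for all queries
    values = tokens @ w_v    # (n, d_v), computed once for all queries
    scores = queries @ keys.T / np.sqrt(keys.shape[1])  # (m, n)
    attn = softmax(scores, axis=-1)  # each query's weights over the patches
    pooled = attn @ values           # (m, d_v), one pooled vector per query
    return pooled.reshape(-1), attn  # concatenated descriptor, attention maps


def kv_params(d, d_head, heads, shared):
    # Key + value projection weights: one shared pair, or one pair per head.
    return 2 * d * d_head * (1 if shared else heads)


# Hypothetical sizes: 196 patches, 768-dim features, 4 queries of width 64.
rng = np.random.default_rng(0)
n, d, d_k, d_v, m = 196, 768, 64, 64, 4
tokens = rng.standard_normal((n, d))
queries = rng.standard_normal((m, d_k))
w_k = rng.standard_normal((d, d_k)) / np.sqrt(d)
w_v = rng.standard_normal((d, d_v)) / np.sqrt(d)

pooled, attn = multi_query_probe(tokens, queries, w_k, w_v)
print(pooled.shape, attn.shape)
```

In this sketch the pooled descriptor would be passed to a linear classifier. With 12 heads, sharing one key/value projection instead of keeping one per head cuts those weights by a factor of 12, which `kv_params` makes explicit.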

Why does it matter?

This matters because a more efficient probing mechanism makes it faster and cheaper to evaluate and build on self-supervised learning systems for images. This can lead to better AI tools for understanding images, even when computing resources are limited, benefiting applications such as computer vision and image recognition.

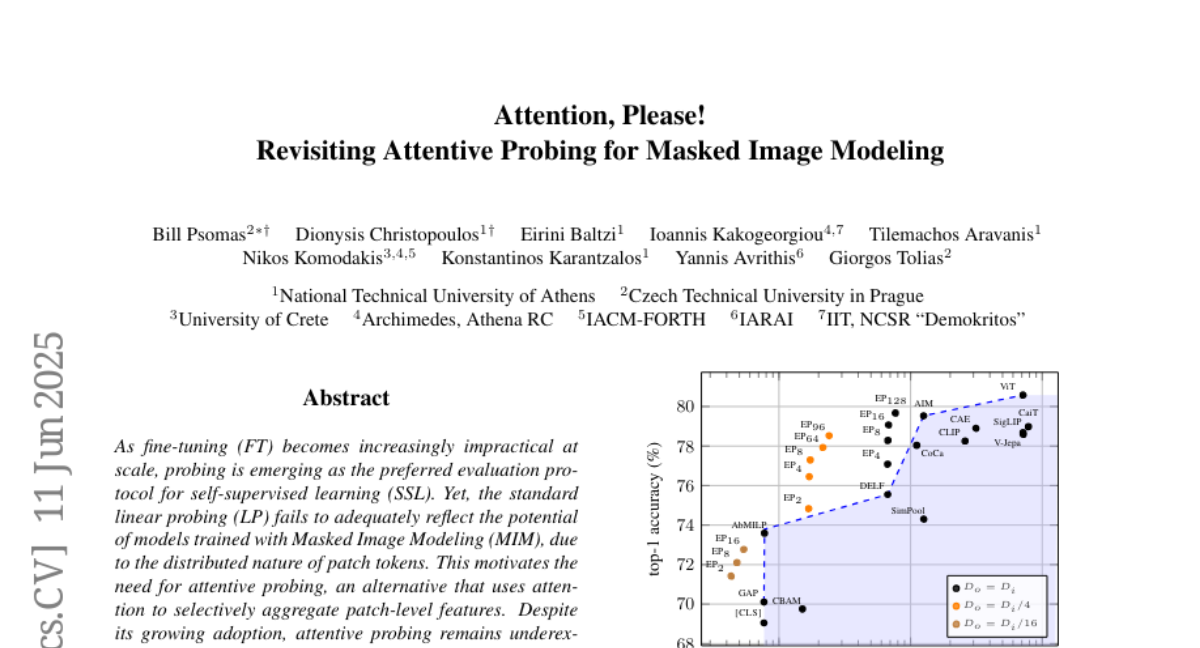

Abstract

Efficient probing using a multi-query cross-attention mechanism enhances performance in self-supervised learning while reducing parameterization and computational cost compared to traditional methods.