Audio Flamingo 2: An Audio-Language Model with Long-Audio Understanding and Expert Reasoning Abilities

Sreyan Ghosh, Zhifeng Kong, Sonal Kumar, S Sakshi, Jaehyeon Kim, Wei Ping, Rafael Valle, Dinesh Manocha, Bryan Catanzaro

2025-03-07

Summary

This paper introduces Audio Flamingo 2 (AF2), an AI system that can understand and reason about different types of sounds, including music and non-speech sounds, in audio clips up to five minutes long.

What's the problem?

Current AI systems struggle to understand and reason about non-speech sounds and music, especially in longer audio clips. This limits how well AI can interact with and understand the world around it.

What's the solution?

The researchers created AF2, which combines a custom CLAP model, synthetic question-answering data, and a multi-stage curriculum learning strategy. They also built a new dataset called LongAudio for training AI on longer audio clips (30 seconds to 5 minutes), and an expert-annotated benchmark called LongAudioBench to measure how well AI understands these longer sounds.

Why does it matter?

This matters because it helps AI understand the world better through sound, not just speech. It could lead to smarter virtual assistants, better music analysis tools, or even robots that navigate using sound. By working with longer audio clips, it brings AI closer to how humans naturally listen to and understand their environment.

Abstract

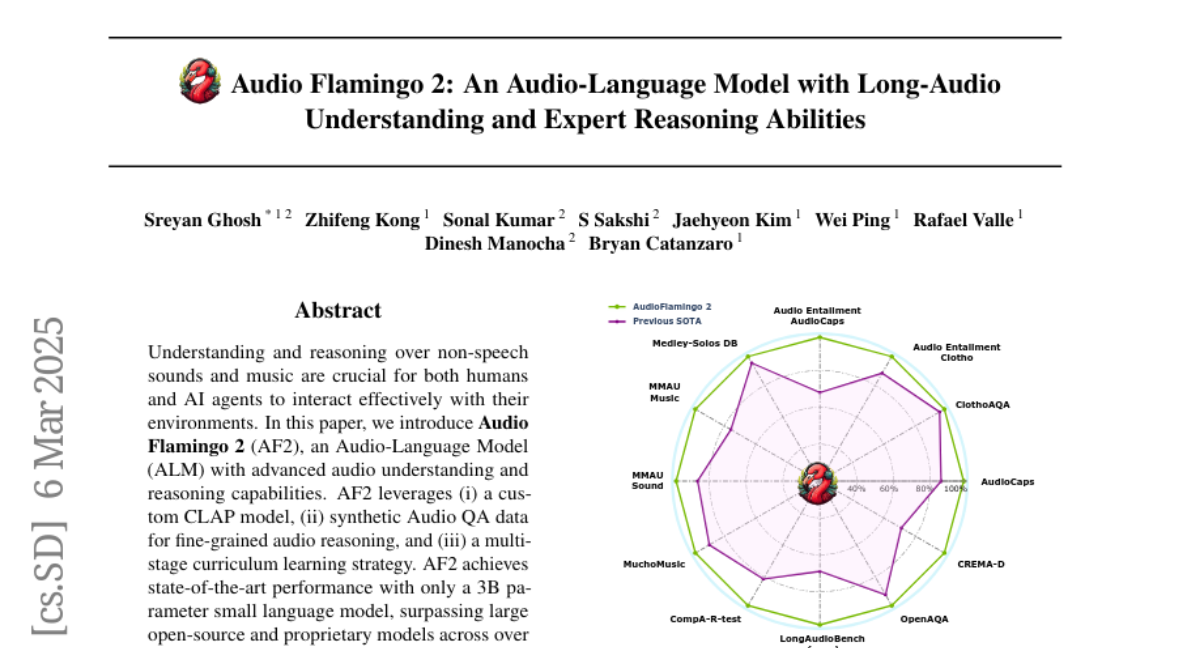

Understanding and reasoning over non-speech sounds and music are crucial for both humans and AI agents to interact effectively with their environments. In this paper, we introduce Audio Flamingo 2 (AF2), an Audio-Language Model (ALM) with advanced audio understanding and reasoning capabilities. AF2 leverages (i) a custom CLAP model, (ii) synthetic Audio QA data for fine-grained audio reasoning, and (iii) a multi-stage curriculum learning strategy. AF2 achieves state-of-the-art performance with only a 3B parameter small language model, surpassing large open-source and proprietary models across over 20 benchmarks. Next, for the first time, we extend audio understanding to long audio segments (30 secs to 5 mins) and propose LongAudio, a large and novel dataset for training ALMs on long audio captioning and question-answering tasks. Fine-tuning AF2 on LongAudio leads to exceptional performance on our proposed LongAudioBench, an expert annotated benchmark for evaluating ALMs on long audio understanding capabilities. We conduct extensive ablation studies to confirm the efficacy of our approach. Project Website: https://research.nvidia.com/labs/adlr/AF2/.