Audio-visual Controlled Video Diffusion with Masked Selective State Spaces Modeling for Natural Talking Head Generation

Fa-Ting Hong, Zunnan Xu, Zixiang Zhou, Jun Zhou, Xiu Li, Qin Lin, Qinglin Lu, Dan Xu

2025-04-04

Summary

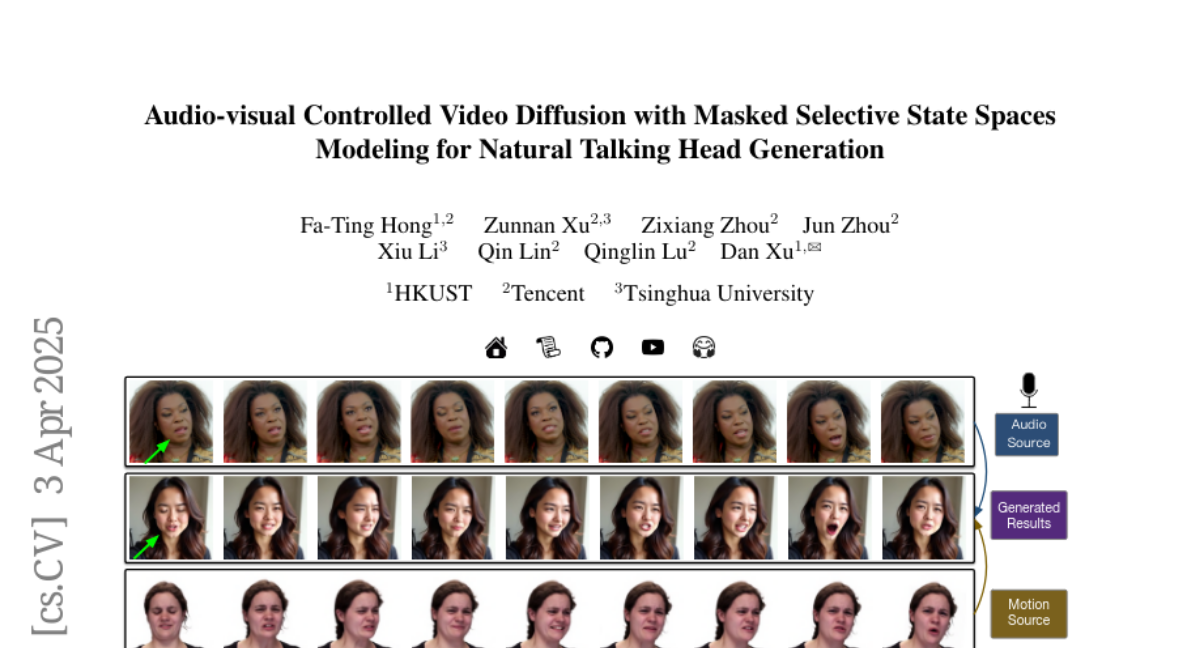

This paper presents a method for generating realistic talking-head videos in which facial motion can be controlled by several driving signals, such as audio and visual cues, either separately or together.

What's the problem?

Most existing talking-head methods accept control from only a single primary modality, which limits their practical utility and makes it hard to produce natural, expressive videos.

What's the solution?

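The researchers developed ACTalker, an end-to-end video diffusion framework that supports both single-signal and multi-signal control, with each signal driving a specific facial region. It uses a parallel Mamba structure with one branch per driving signal, a gate mechanism applied across the branches for flexible control, and a mask-drop strategy that keeps the different signals from interfering with each other.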

Why does it matter?

This work matters because it can lead to more realistic and controllable virtual avatars, which could be used in video games, virtual reality, and other applications.

Abstract

Talking head synthesis is vital for virtual avatars and human-computer interaction. However, most existing methods are limited to accepting control from a single primary modality, restricting their practical utility. To this end, we introduce ACTalker, an end-to-end video diffusion framework that supports both multi-signal and single-signal control for talking head video generation. For multi-signal control, we design a parallel Mamba structure with multiple branches, each utilizing a separate driving signal to control specific facial regions. A gate mechanism is applied across all branches, providing flexible control over video generation. To ensure natural coordination of the controlled video both temporally and spatially, we employ the Mamba structure, which enables driving signals to manipulate feature tokens across both dimensions in each branch. Additionally, we introduce a mask-drop strategy that allows each driving signal to independently control its corresponding facial region within the Mamba structure, preventing control conflicts. Experimental results demonstrate that our method produces natural-looking facial videos driven by diverse signals and that the Mamba layer seamlessly integrates multiple driving modalities without conflict.
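
To make the branch, gate, and mask-drop ideas in the abstract more concrete, here is a minimal NumPy sketch. It is an illustration under stated assumptions, not the authors' implementation: `toy_scan` is a simple linear recurrence standing in for a real Mamba layer, and the function names, the scalar gate values, the boolean region masks, and the residual update are all hypothetical choices made for this example.

```python
import numpy as np

def toy_scan(tokens, signal, decay=0.9):
    """Stand-in for one Mamba branch (assumption): a causal linear recurrence
    over the token sequence, with the driving signal added to every input."""
    state = np.zeros(tokens.shape[-1])
    out = np.empty_like(tokens)
    for i, tok in enumerate(tokens):
        state = decay * state + (1.0 - decay) * (tok + signal)
        out[i] = state
    return out

def gated_parallel_branches(features, signals, masks, gates):
    """features: (L, C) flattened spatio-temporal feature tokens.
    signals:  one (C,) driving-signal embedding per branch.
    masks:    one (L,) boolean facial-region mask per branch.
    gates:    one float per branch; 0.0 disables that branch (assumed form)."""
    out = features.copy()
    for signal, mask, gate in zip(signals, masks, gates):
        if gate == 0.0:
            continue
        # Mask-drop (as sketched here): drop tokens outside this signal's
        # region, so the branch can only read and modify its own region.
        kept = features[mask]
        updated = toy_scan(kept, signal)
        # Scatter the gated branch output back into the full token grid.
        out[mask] += gate * updated
    return out

# Usage: two branches (e.g. audio and a visual signal) with disjoint regions.
rng = np.random.default_rng(0)
features = rng.normal(size=(6, 4))
region_a = np.array([True, True, True, False, False, False])
region_b = ~region_a
signals = [rng.normal(size=4), rng.normal(size=4)]

both = gated_parallel_branches(features, signals, [region_a, region_b], [1.0, 1.0])
only_a = gated_parallel_branches(features, signals, [region_a, region_b], [1.0, 0.0])
```

With disjoint masks, closing one gate leaves that branch's region untouched while the other region is still driven, which is the kind of conflict-free single-signal or multi-signal control the abstract describes.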