AudioX: Diffusion Transformer for Anything-to-Audio Generation

Zeyue Tian, Yizhu Jin, Zhaoyang Liu, Ruibin Yuan, Xu Tan, Qifeng Chen, Wei Xue, Yike Guo

2025-03-19

Summary

This paper introduces AudioX, a single AI model that generates general audio and music from many kinds of input, including text, images, video, and existing audio or music.

What's the problem?

Existing AI models for creating audio are limited. They usually handle only one type of input, have little high-quality multi-modal training data to learn from, and struggle to combine different types of information.

What's the solution?

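AudioX is built on a Diffusion Transformer, a single architecture that generates both general audio and music from various inputs. It is trained with a multi-modal masking strategy: parts of the inputs are hidden during training, so the model learns to work from incomplete information, which makes it more robust and flexible. The authors also build two captioned datasets, vggsound-caps and V2M-caps, to address the shortage of training data.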

Why does it matter?

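This work matters because one model now covers many audio creation tasks that previously required separate systems. In the authors' experiments it matches or outperforms specialized state-of-the-art models, which makes it easier to generate high-quality audio for a range of applications.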

Abstract

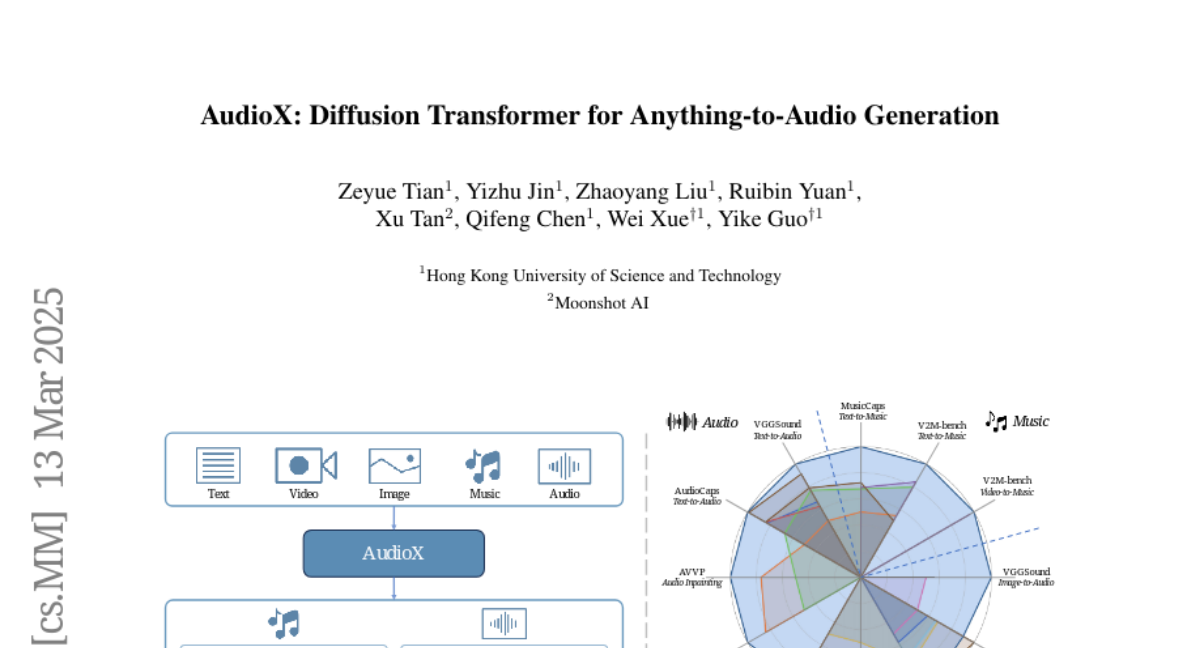

Audio and music generation have emerged as crucial tasks in many applications, yet existing approaches face significant limitations: they operate in isolation without unified capabilities across modalities, suffer from scarce high-quality, multi-modal training data, and struggle to effectively integrate diverse inputs. In this work, we propose AudioX, a unified Diffusion Transformer model for Anything-to-Audio and Music Generation. Unlike previous domain-specific models, AudioX can generate both general audio and music with high quality, while offering flexible natural language control and seamless processing of various modalities including text, video, image, music, and audio. Its key innovation is a multi-modal masked training strategy that masks inputs across modalities and forces the model to learn from masked inputs, yielding robust and unified cross-modal representations. To address data scarcity, we curate two comprehensive datasets: vggsound-caps with 190K audio captions based on the VGGSound dataset, and V2M-caps with 6 million music captions derived from the V2M dataset. Extensive experiments demonstrate that AudioX not only matches or outperforms state-of-the-art specialized models, but also offers remarkable versatility in handling diverse input modalities and generation tasks within a unified architecture. The code and datasets will be available at https://zeyuet.github.io/AudioX/
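The masked training idea in the abstract can be illustrated with a minimal sketch. The abstract does not specify how masking is applied, so everything below is an assumption made for illustration: the function name `mask_inputs`, the two probabilities, the choice to mask with zero vectors, and the representation of each modality as a list of embedding vectors are all hypothetical and are not taken from the AudioX code.

```python
import random


def mask_inputs(inputs, drop_prob=0.3, token_mask_prob=0.2, rng=None):
    """Return a masked copy of per-modality embeddings.

    inputs: dict mapping a modality name (e.g. "text", "video") to a list
            of embedding vectors (lists of floats).
    drop_prob: chance of masking an entire modality (hypothetical value).
    token_mask_prob: chance of masking a single vector in a modality that
            was kept (hypothetical value).
    """
    if rng is None:
        rng = random.Random()
    masked = {}
    for name, tokens in inputs.items():
        if rng.random() < drop_prob:
            # Mask the whole modality: every vector becomes zeros.
            masked[name] = [[0.0] * len(tok) for tok in tokens]
        else:
            # Keep the modality, but zero out individual vectors at random.
            masked[name] = [
                [0.0] * len(tok) if rng.random() < token_mask_prob else list(tok)
                for tok in tokens
            ]
    return masked


if __name__ == "__main__":
    batch = {
        "text": [[0.1, 0.2], [0.3, 0.4]],
        "video": [[1.0, 1.5], [2.0, 2.5], [3.0, 3.5]],
    }
    print(mask_inputs(batch, rng=random.Random(0)))
```

In a training loop, a function like this would be applied to the conditioning inputs before they reach the generator, so that the model sees a different subset of the available information at each step. Whether AudioX masks whole modalities, individual tokens, or both is not stated in the abstract.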