AuraFusion360: Augmented Unseen Region Alignment for Reference-based 360° Unbounded Scene Inpainting

Chung-Ho Wu, Yang-Jung Chen, Ying-Huan Chen, Jie-Ying Lee, Bo-Hsu Ke, Chun-Wei Tuan Mu, Yi-Chuan Huang, Chin-Yang Lin, Min-Hung Chen, Yen-Yu Lin, Yu-Lun Liu

2025-02-10

Summary

This paper presents AuraFusion360, a reference-based method for filling in missing or removed parts of 360-degree 3D scenes, such as those used in virtual reality or architectural visualization. It aims to make edits look realistic from every viewing angle while keeping the scene's geometry accurate.

What's the problem?

Existing methods for editing 360-degree 3D scenes often struggle to keep the changes consistent when viewed from different perspectives. They also have trouble accurately filling gaps or removing objects without creating visual errors or breaking the scene's structure.

What's the solution?

AuraFusion360 introduces three innovations: depth-aware unseen mask generation to identify hidden areas needing repair, Adaptive Guided Depth Diffusion to place new 3D points correctly without extra training, and SDEdit-based detail enhancement to make the edits look smooth and coherent across multiple views. The researchers also created a new dataset, 360-USID, to test and improve their method.
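To make the SDEdit step more concrete, here is a minimal sketch of the general SDEdit idea: partially noise an existing image to an intermediate diffusion timestep, then let a diffusion model denoise from there so that coarse structure survives while details are regenerated. This is not the authors' implementation. The linear noise schedule, the function names `linear_alpha_bar` and `sdedit_noise`, and the `strength` parameter are assumptions made for illustration, and the denoising model itself is omitted.

```python
import math
import random


def linear_alpha_bar(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear DDPM-style schedule.

    The schedule values are illustrative assumptions, not taken from the paper.
    """
    alpha_bar, running = [], 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bar.append(running)
    return alpha_bar


def sdedit_noise(pixels, strength, alpha_bar, rng):
    """Noise `pixels` (floats in [-1, 1]) up to an intermediate timestep.

    strength in [0, 1]: 0 returns the input unchanged, 1 noises it to the
    final step of the schedule. Returns (noised_pixels, t), where t is the
    number of forward steps applied; a diffusion model (not shown) would
    start its reverse denoising from step t.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must lie in [0, 1]")
    t = round(strength * len(alpha_bar))
    if t == 0:
        return list(pixels), 0
    a = alpha_bar[t - 1]
    signal, sigma = math.sqrt(a), math.sqrt(1.0 - a)
    # Closed-form forward diffusion: x_t = sqrt(a) * x_0 + sqrt(1 - a) * eps
    return [signal * x + sigma * rng.gauss(0.0, 1.0) for x in pixels], t


if __name__ == "__main__":
    schedule = linear_alpha_bar()
    noised, start_step = sdedit_noise([0.2, -0.4, 0.9], 0.5, schedule, random.Random(0))
    print(start_step, noised)
```

A lower `strength` keeps more of the input image (for example, a coarse render of the inpainted region), while a higher value gives the diffusion model more freedom to resynthesize detail.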

Why does it matter?

This matters because high-quality 3D scene editing is essential for applications such as virtual reality, gaming, and architecture. Tools like AuraFusion360 can make these environments more immersive and realistic while saving creators time and effort.

Abstract

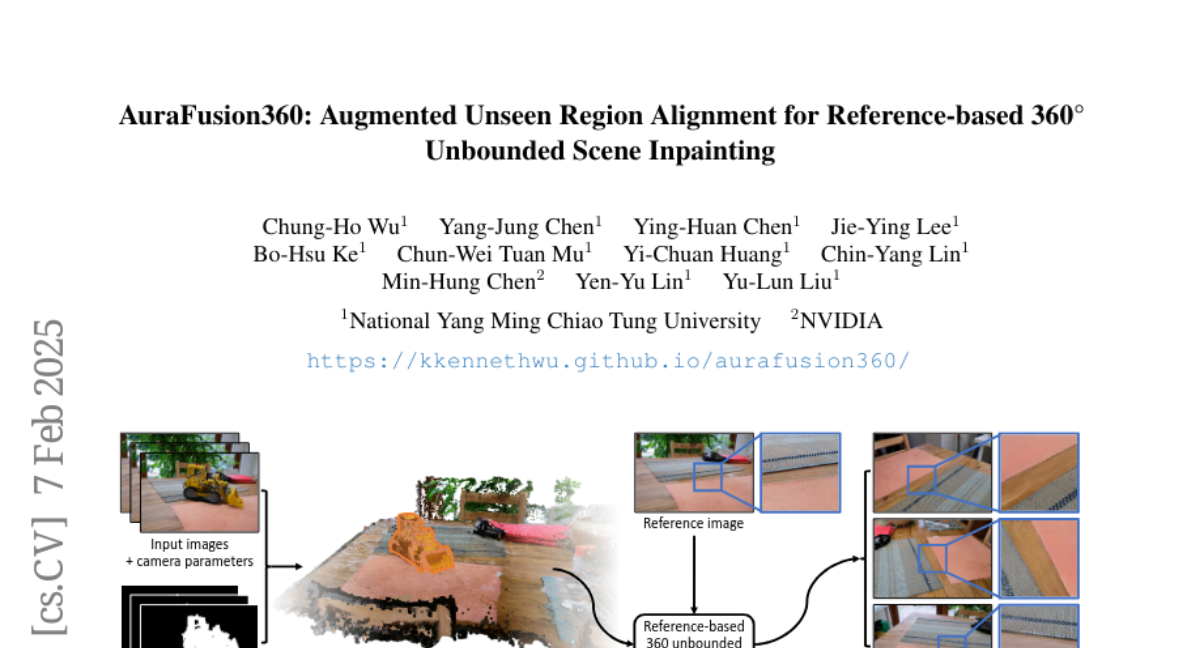

Three-dimensional scene inpainting is crucial for applications from virtual reality to architectural visualization, yet existing methods struggle with view consistency and geometric accuracy in 360° unbounded scenes. We present AuraFusion360, a novel reference-based method that enables high-quality object removal and hole filling in 3D scenes represented by Gaussian Splatting. Our approach introduces (1) depth-aware unseen mask generation for accurate occlusion identification, (2) Adaptive Guided Depth Diffusion, a zero-shot method for accurate initial point placement without requiring additional training, and (3) SDEdit-based detail enhancement for multi-view coherence. We also introduce 360-USID, the first comprehensive dataset for 360° unbounded scene inpainting with ground truth. Extensive experiments demonstrate that AuraFusion360 significantly outperforms existing methods, achieving superior perceptual quality while maintaining geometric accuracy across dramatic viewpoint changes. See our project page for video results and the dataset at https://kkennethwu.github.io/aurafusion360/.