Automating Steering for Safe Multimodal Large Language Models

Lyucheng Wu, Mengru Wang, Ziwen Xu, Tri Cao, Nay Oo, Bryan Hooi, Shumin Deng

2025-07-18

Summary

This paper introduces AutoSteer, an inference-time intervention technique for multimodal large language models (models that process text together with images or other types of data). It improves safety by lowering the success rate of attacks and reducing harmful outputs, without any fine-tuning.

What's the problem?

Multimodal large language models are vulnerable to a range of attacks that push them into producing unsafe or incorrect responses. The usual fix is to retrain or fine-tune the model, which is expensive and slow.

What's the solution?

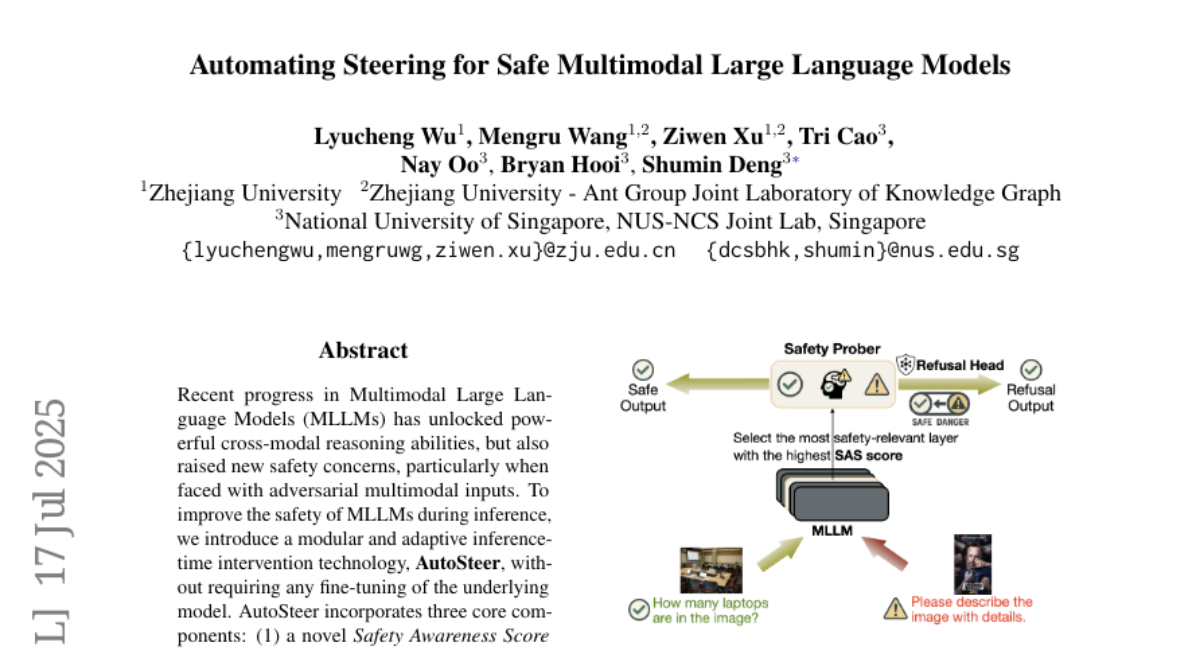

The authors created AutoSteer, a modular system that intervenes at inference time, while the model is generating a response, to steer it away from unsafe outputs. This lowers the success rate of attacks without changing the model itself.
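To make the idea of inference-time steering concrete, here is a minimal toy sketch in plain Python. It is not the authors' implementation: the linear probe, the steering vector, and the names `unsafe_probability`, `steer`, `strength`, and `threshold` are all assumptions chosen for illustration. It only shows the general pattern of scoring a hidden state for risk and, when the score is high, shifting that hidden state while the model's weights stay untouched.

```python
import math


def unsafe_probability(hidden, probe_weights, probe_bias=0.0):
    """Hypothetical linear probe: maps a hidden state to a risk score in (0, 1)."""
    score = sum(h * w for h, w in zip(hidden, probe_weights)) + probe_bias
    return 1.0 / (1.0 + math.exp(-score))


def steer(hidden, steering_vector, probe_weights, probe_bias=0.0,
          strength=4.0, threshold=0.5):
    """Shift the hidden state away from the 'unsafe' direction when the probe fires.

    Returns the (possibly modified) hidden state and the probe's risk estimate.
    Only the activation is edited; no model parameter is updated.
    """
    p = unsafe_probability(hidden, probe_weights, probe_bias)
    if p < threshold:
        # Low risk: leave the activation exactly as the model produced it.
        return list(hidden), p
    # High risk: subtract the steering direction, scaled by the risk estimate.
    steered = [h - strength * p * v for h, v in zip(hidden, steering_vector)]
    return steered, p


# Toy values (assumed): the probe and the steering vector share one direction.
PROBE = [1.0, 0.0, -1.0]
DIRECTION = [1.0, 0.0, -1.0]

benign_state = [-2.0, 0.5, 1.0]
risky_state = [2.0, 0.5, -1.0]

benign_out, benign_risk = steer(benign_state, DIRECTION, PROBE)
risky_out, risky_risk = steer(risky_state, DIRECTION, PROBE)
risk_after = unsafe_probability(risky_out, PROBE)
```

In a real model, a function like `steer` would typically be applied to the activations of a chosen layer during generation. Gating on the probe's score is what lets benign inputs pass through unmodified.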

Why does it matter?

This matters because it makes AI systems safer and more trustworthy, especially those that handle text and images together, and it does so without the cost of retraining the model.

Abstract

AutoSteer, a modular inference-time intervention technology, enhances the safety of Multimodal Large Language Models by reducing attack success rates across various threats without fine-tuning.