Autoregressive Image Generation with Randomized Parallel Decoding

Haopeng Li, Jinyue Yang, Guoqi Li, Huan Wang

2025-03-14

Summary

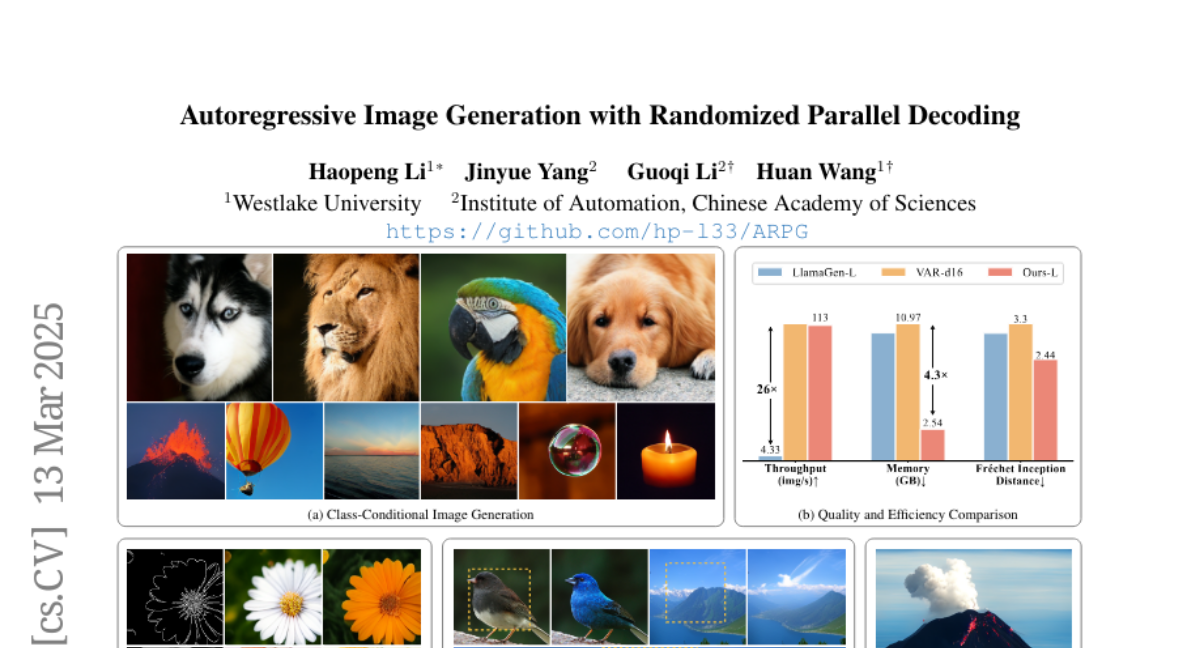

This paper introduces ARPG, an autoregressive image generation model that predicts image tokens in random order and in parallel, making it faster and more flexible than conventional raster-order autoregressive models.

What's the problem?

Conventional autoregressive image models generate image tokens one at a time in a fixed raster order (left to right, top to bottom). This sequential, predefined order makes inference slow and limits zero-shot generalization to tasks such as filling in missing parts of an image.

What's the solution?

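ARPG generates image tokens in a random order and can predict several of them at the same time. Its 'guided decoding framework' tells the model explicitly which position to predict next: positional guidance is encoded as queries, while the content of the tokens generated so far is encoded as key-value pairs. Because this guidance is built directly into causal attention, the model can be trained and sampled in fully random order without bidirectional attention.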
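To make the idea of random-order, parallel generation concrete, here is a minimal sketch of a decoding schedule. It is not the authors' implementation: the function name `random_parallel_schedule`, the 256-token sequence length (a 16 x 16 token grid), and the equal-size groups are assumptions for illustration. Only the figure of 64 sampling steps comes from the abstract.

```python
import random

def random_parallel_schedule(num_tokens, num_steps, seed=0):
    """Shuffle token positions and split them into groups, one group per step.

    Hypothetical helper: all positions within a group would be predicted in
    parallel. Group sizes are kept as equal as possible (an assumption).
    """
    rng = random.Random(seed)
    order = list(range(num_tokens))
    rng.shuffle(order)  # random generation order instead of raster order

    base, extra = divmod(num_tokens, num_steps)
    schedule, start = [], 0
    for step in range(num_steps):
        size = base + (1 if step < extra else 0)
        schedule.append(order[start:start + size])
        start += size
    return schedule

# Assumed setting: 256 image tokens decoded in 64 steps.
schedule = random_parallel_schedule(num_tokens=256, num_steps=64)
print(len(schedule), "steps,", len(schedule[0]), "tokens in the first step")
```

With these assumed numbers, each step covers 4 positions, so the sequence is finished in 64 steps instead of 256 single-token steps.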

Why does it matter?

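On the ImageNet-1K 256 benchmark, ARPG reaches an FID of 1.94 with only 64 sampling steps, with over 20 times the throughput and over 75% less memory than representative recent autoregressive models of similar scale. Random-order generation also lets the model handle image inpainting, outpainting, and resolution expansion zero-shot.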

Abstract

We introduce ARPG, a novel visual autoregressive model that enables randomized parallel generation, addressing the inherent limitations of conventional raster-order approaches, which hinder inference efficiency and zero-shot generalization due to their sequential, predefined token generation order. Our key insight is that effective random-order modeling necessitates explicit guidance for determining the position of the next predicted token. To this end, we propose a novel guided decoding framework that decouples positional guidance from content representation, encoding them separately as queries and key-value pairs. By directly incorporating this guidance into the causal attention mechanism, our approach enables fully random-order training and generation, eliminating the need for bidirectional attention. Consequently, ARPG readily generalizes to zero-shot tasks such as image inpainting, outpainting, and resolution expansion. Furthermore, it supports parallel inference by concurrently processing multiple queries using a shared KV cache. On the ImageNet-1K 256 benchmark, our approach attains an FID of 1.94 with only 64 sampling steps, achieving over a 20-fold increase in throughput while reducing memory consumption by over 75% compared to representative recent autoregressive models at a similar scale.
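The decoupling of positional guidance (queries) from content (key-value pairs) can be illustrated with a small, dependency-free sketch. Everything here is an assumption for illustration rather than the paper's architecture: the random vectors stand in for learned position embeddings and projected content representations, and `guided_attention` is a hypothetical single-head attention in which each query attends only to the shared cache.

```python
import math
import random

def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def guided_attention(queries, keys, values):
    """Scaled dot-product attention of each query over a shared key/value cache.

    Queries never attend to each other here (an assumption of this sketch),
    so any number of target positions can be processed in one call.
    """
    scale = 1.0 / math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
        weights = softmax(scores)
        outputs.append([
            sum(w * v[d] for w, v in zip(weights, values))
            for d in range(len(values[0]))
        ])
    return outputs

rng = random.Random(0)
dim, num_positions = 8, 16

def rand_vec():
    return [rng.gauss(0.0, 1.0) for _ in range(dim)]

# Hypothetical stand-in for learned position embeddings (the "guidance").
pos_embed = [rand_vec() for _ in range(num_positions)]

# Hypothetical cache: content representations of tokens already generated
# at arbitrary, non-raster positions.
known_positions = [5, 12, 3]
keys = [rand_vec() for _ in known_positions]
values = [rand_vec() for _ in known_positions]

# Two target positions decoded together against the same cache ...
targets = [9, 0]
batched = guided_attention([pos_embed[p] for p in targets], keys, values)
# ... give the same result as decoding each one on its own.
separate = [guided_attention([pos_embed[p]], keys, values)[0] for p in targets]
```

In this sketch the batched and one-at-a-time outputs coincide, which is the property that lets multiple queries share one KV cache. Under the same assumptions, a zero-shot task such as inpainting would amount to filling the cache from the known region and issuing queries for the missing positions.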