Autoregressive Video Generation without Vector Quantization

Haoge Deng, Ting Pan, Haiwen Diao, Zhengxiong Luo, Yufeng Cui, Huchuan Lu, Shiguang Shan, Yonggang Qi, Xinlong Wang

2024-12-19

Summary

This paper talks about a new method for generating videos called NOVA, which allows for creating videos efficiently without needing to simplify the video data into smaller parts (vector quantization).

What's the problem?

Generating videos using AI can be slow and inefficient, especially when models have to break down video data into smaller pieces to understand and create new content. Traditional methods often struggle with maintaining quality and speed, making it hard to produce good results quickly.

What's the solution?

The authors propose NOVA, which treats video generation as a process of predicting frames one at a time while also considering the overall structure of the video. Instead of breaking down the video into fixed-length segments, NOVA maintains a continuous flow of information, allowing it to generate high-quality videos more efficiently. The results show that NOVA works faster and produces better-looking videos compared to previous methods, even with a smaller model size.

Why it matters?

This research is important because it improves how we can create videos using AI, making the process faster and more effective. By enhancing video generation capabilities, NOVA can be useful in various applications like filmmaking, gaming, and virtual reality, where high-quality video content is essential.

Abstract

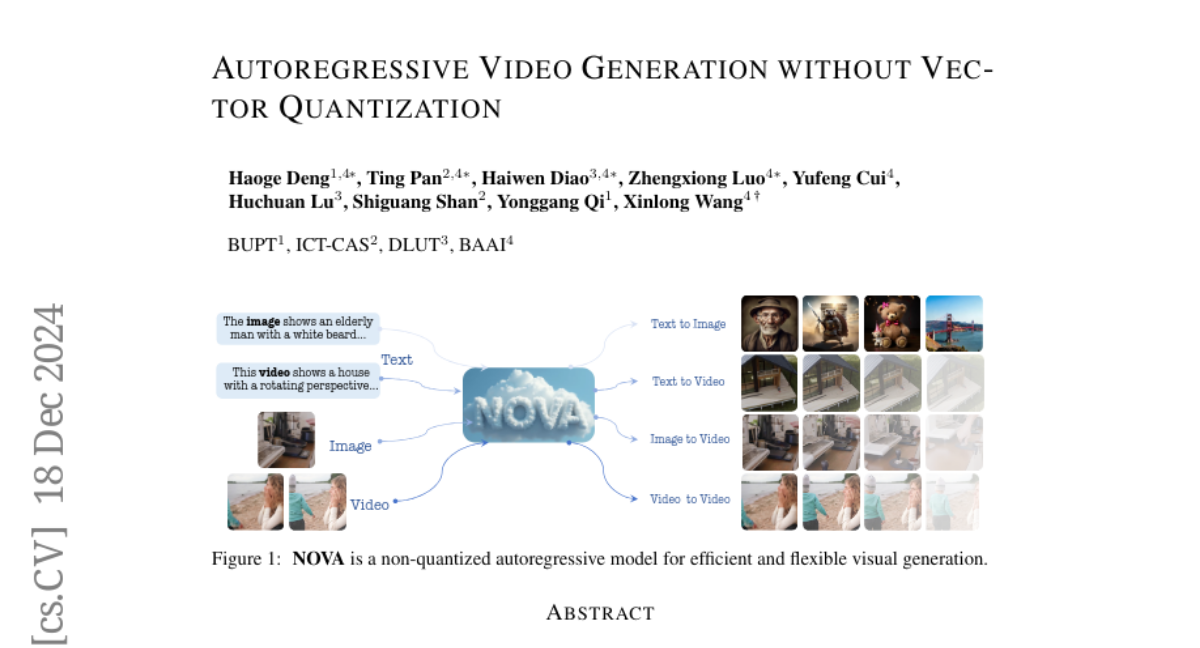

This paper presents a novel approach that enables autoregressive video generation with high efficiency. We propose to reformulate the video generation problem as a non-quantized autoregressive modeling of temporal frame-by-frame prediction and spatial set-by-set prediction. Unlike raster-scan prediction in prior autoregressive models or joint distribution modeling of fixed-length tokens in diffusion models, our approach maintains the causal property of GPT-style models for flexible in-context capabilities, while leveraging bidirectional modeling within individual frames for efficiency. With the proposed approach, we train a novel video autoregressive model without vector quantization, termed NOVA. Our results demonstrate that NOVA surpasses prior autoregressive video models in data efficiency, inference speed, visual fidelity, and video fluency, even with a much smaller model capacity, i.e., 0.6B parameters. NOVA also outperforms state-of-the-art image diffusion models in text-to-image generation tasks, with a significantly lower training cost. Additionally, NOVA generalizes well across extended video durations and enables diverse zero-shot applications in one unified model. Code and models are publicly available at https://github.com/baaivision/NOVA.