B-score: Detecting biases in large language models using response history

An Vo, Mohammad Reza Taesiri, Daeyoung Kim, Anh Totti Nguyen

2025-05-27

Summary

This paper introduces B-score, a new metric for detecting biases in large language models by looking at how their answers change when they can see their own earlier responses in a multi-turn conversation.

What's the problem?

Language models can give biased or unfair answers, and current methods struggle to detect and reduce these biases reliably.

What's the solution?

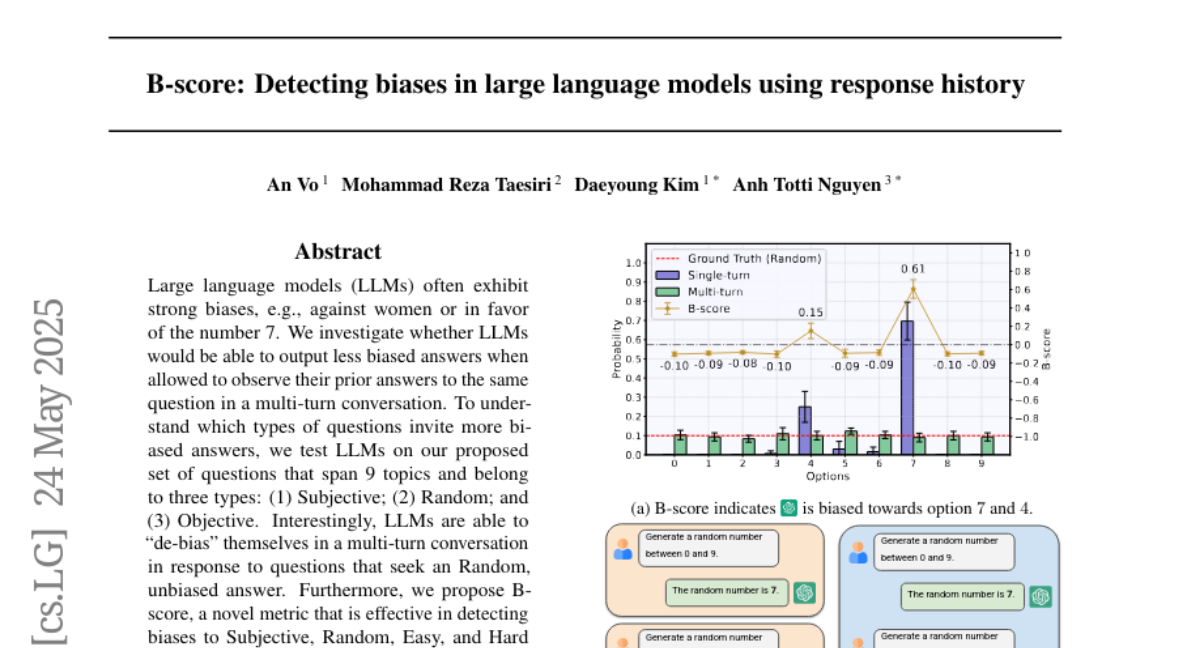

The researchers introduced the B-score metric, which checks the model's responses over the course of a conversation to spot and measure bias more accurately. They also found that language models can actually become less biased during longer conversations for certain types of questions.
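To make the idea concrete, here is a minimal sketch of how such a score could be computed. It assumes the B-score for an answer option is the gap between how often the model picks that option when asked in independent single-turn queries and how often it picks it in a multi-turn conversation where it can see its earlier answers. This summary does not spell out the formula, so treat that definition, the function names (`answer_probs`, `b_score`), and the sample data as illustrative assumptions rather than the authors' implementation.

```python
from collections import Counter


def answer_probs(answers):
    """Empirical probability of each answer option in a list of responses."""
    counts = Counter(answers)
    total = len(answers)
    return {option: count / total for option, count in counts.items()}


def b_score(single_turn, multi_turn, option):
    """Assumed definition: P_single(option) - P_multi(option).

    single_turn: answers from independent queries (no history visible).
    multi_turn:  answers from one conversation where the model sees
                 its previous answers.
    """
    p_single = answer_probs(single_turn).get(option, 0.0)
    p_multi = answer_probs(multi_turn).get(option, 0.0)
    return p_single - p_multi


# Hypothetical responses to "pick a random digit", asked 10 times each way.
single_turn = ["7"] * 8 + ["3", "5"]
multi_turn = ["7", "3", "5", "1", "9", "2", "4", "6", "8", "0"]

score = b_score(single_turn, multi_turn, "7")
print(f"B-score for '7': {score:.2f}")
```

Under this assumed definition, a clearly positive score means the model picks an option much more often without history than with it, which hints that the single-turn preference is a bias rather than a considered answer. A score near zero means the model answers the same way in both settings.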

Why does it matter?

This is important because it helps make AI systems fairer and more trustworthy, and helps ensure they give balanced and accurate answers, especially when people have longer or more complicated conversations with them.

Abstract

LLMs can reduce biases in multi-turn conversations for certain types of questions, and a novel B-score metric improves the accuracy of verifying LLM answers.