Being-0: A Humanoid Robotic Agent with Vision-Language Models and Modular Skills

Haoqi Yuan, Yu Bai, Yuhui Fu, Bohan Zhou, Yicheng Feng, Xinrun Xu, Yi Zhan, Börje F. Karlsson, Zongqing Lu

2025-03-18

Summary

This paper introduces Being-0, a humanoid robot system that pairs a foundation model for high-level reasoning with a library of modular locomotion and manipulation skills to perform complex, long-horizon tasks in real-world environments.

What's the problem?

It's difficult to build robots that perform complex tasks reliably and efficiently: when high-level AI planning is wired directly to low-level robot control, small errors compound over long tasks and the modules run at different speeds, which causes failures and delays.

What's the solution?

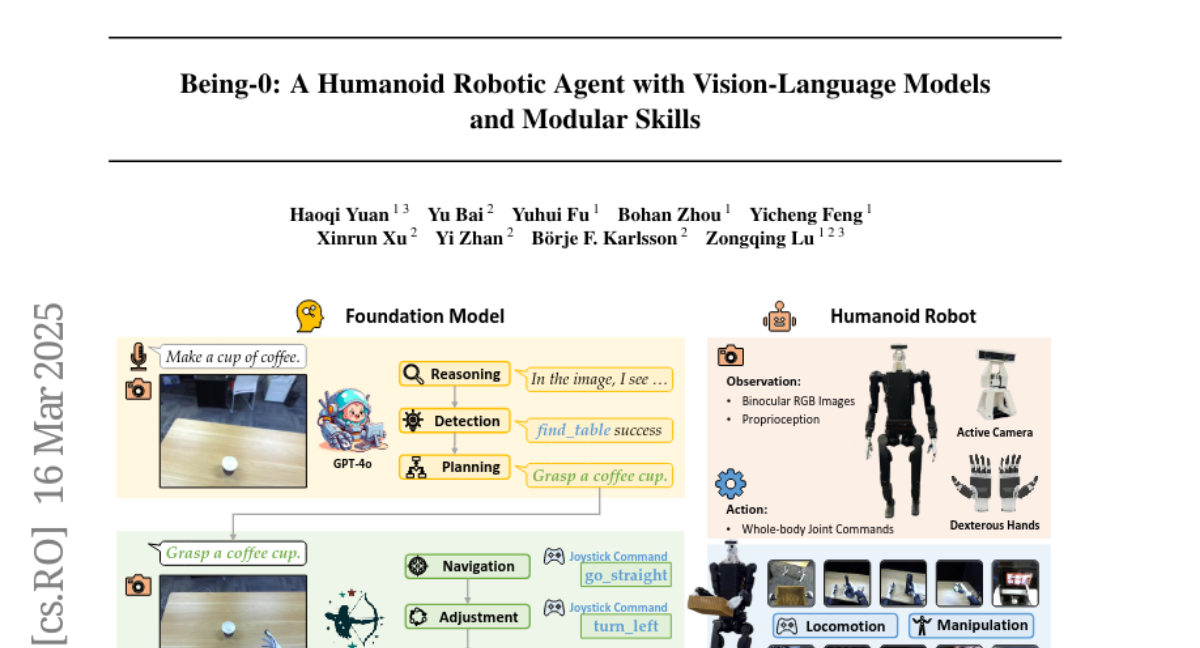

Being-0 uses a hierarchical system where a high-level AI model handles planning and reasoning, while a skill library provides stable movement and precise manipulation. A special 'Connector' module, powered by a lightweight vision-language model, translates the AI's plans into actionable commands for the robot's skills, coordinating movement and manipulation.
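The three-level flow described above can be pictured with a toy sketch. It is not the authors' implementation: the names `plan`, `connect`, `run`, `SkillCommand`, and the entries in `SKILLS` are all hypothetical, the planner is a fixed stub rather than a foundation model, and the Connector is reduced to keyword matching where the real one uses a vision-language model on camera input.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class SkillCommand:
    """A call into the skill library: a skill name plus a target argument."""
    skill: str
    target: str


# Hypothetical skill library: each skill takes a target and returns a status string.
SKILLS: Dict[str, Callable[[str], str]] = {
    "walk_to": lambda target: f"walked to {target}",
    "grasp": lambda target: f"grasped {target}",
    "place": lambda target: f"placed object on {target}",
}


def plan(instruction: str) -> List[str]:
    """Stand-in for the high-level model: returns language subtasks.

    A real system would query a foundation model; this stub ignores the
    instruction and returns a fixed plan.
    """
    return ["go to the table", "pick up the cup", "put it on the shelf"]


def connect(step: str) -> SkillCommand:
    """Stand-in for the Connector: maps one language step to a skill command.

    Keyword matching is only a placeholder for VLM-based grounding.
    """
    target = step.split()[-1]  # assume the last word names the target
    if step.startswith("go to"):
        return SkillCommand("walk_to", target)
    if step.startswith("pick up"):
        return SkillCommand("grasp", target)
    if step.startswith("put"):
        return SkillCommand("place", target)
    raise ValueError(f"no skill for step: {step!r}")


def run(instruction: str) -> List[str]:
    """Plan once, then translate and execute each step in order."""
    log = []
    for step in plan(instruction):
        cmd = connect(step)
        log.append(SKILLS[cmd.skill](cmd.target))
    return log


if __name__ == "__main__":
    for line in run("bring the cup to the shelf"):
        print(line)
```

The point of the separation is that the planner only ever produces language, and the skills only ever receive structured commands, so the middle layer is the single place where plans are grounded into actions.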

Why does it matter?

This work matters because it demonstrates a practical way to build humanoid robots that perform complex tasks in real time. Every component except the high-level foundation model runs on low-cost onboard computers, which opens the door to more capable and accessible robots.

Abstract

Building autonomous robotic agents capable of achieving human-level performance in real-world embodied tasks is an ultimate goal in humanoid robot research. Recent advances have made significant progress in high-level cognition with Foundation Models (FMs) and low-level skill development for humanoid robots. However, directly combining these components often results in poor robustness and efficiency due to compounding errors in long-horizon tasks and the varied latency of different modules. We introduce Being-0, a hierarchical agent framework that integrates an FM with a modular skill library. The FM handles high-level cognitive tasks such as instruction understanding, task planning, and reasoning, while the skill library provides stable locomotion and dexterous manipulation for low-level control. To bridge the gap between these levels, we propose a novel Connector module, powered by a lightweight vision-language model (VLM). The Connector enhances the FM's embodied capabilities by translating language-based plans into actionable skill commands and dynamically coordinating locomotion and manipulation to improve task success. With all components, except the FM, deployable on low-cost onboard computation devices, Being-0 achieves efficient, real-time performance on a full-sized humanoid robot equipped with dexterous hands and active vision. Extensive experiments in large indoor environments demonstrate Being-0's effectiveness in solving complex, long-horizon tasks that require challenging navigation and manipulation subtasks. For further details and videos, visit https://beingbeyond.github.io/being-0.