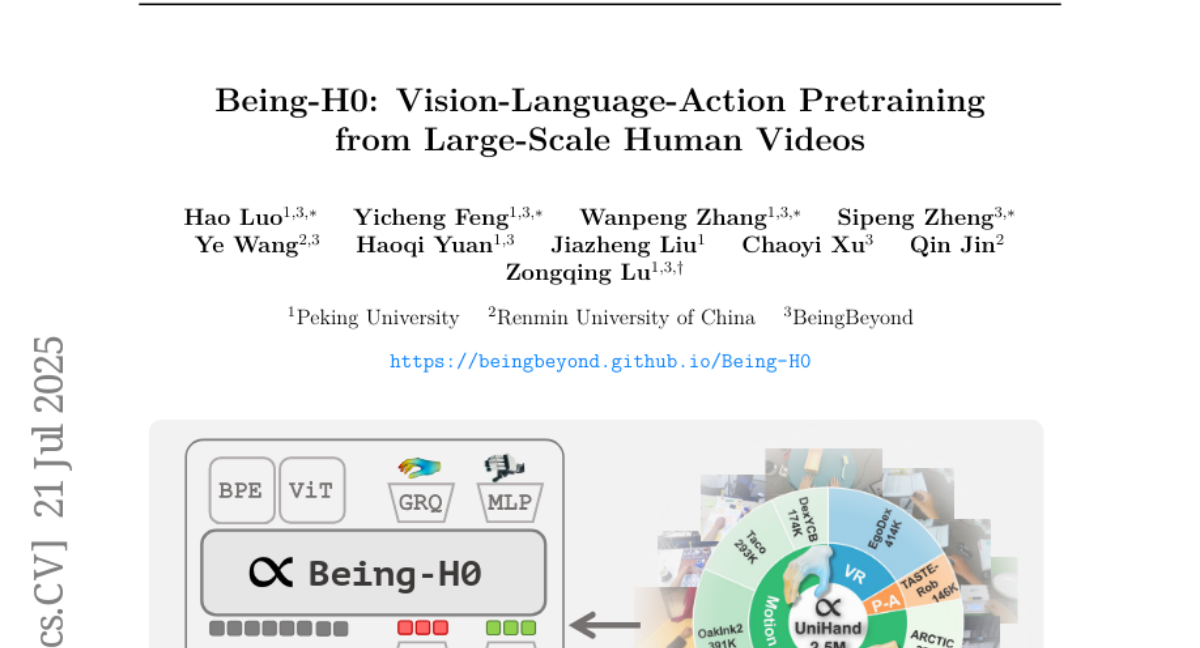

Being-H0: Vision-Language-Action Pretraining from Large-Scale Human Videos

Hao Luo, Yicheng Feng, Wanpeng Zhang, Sipeng Zheng, Ye Wang, Haoqi Yuan, Jiazheng Liu, Chaoyi Xu, Qin Jin, Zongqing Lu

2025-07-22

Summary

This paper talks about Being-H0, an AI model that learns how to understand vision, language, and actions together by studying videos of humans and following physical instructions.

What's the problem?

The problem is that teaching AI to perform complex physical tasks, like manipulating objects with precision, is difficult because it needs to understand both what it sees and the instructions it gets, which requires a lot of training data and good learning methods.

What's the solution?

The authors trained Being-H0 using a large collection of human videos showing detailed movements and combined this with instruction tuning, where the AI learns to follow physical commands. This approach helps the model become very skilled at manipulating objects and works well even as the data and model size grow.

Why it matters?

This matters because it helps create AI systems that can perform complicated tasks in the real world with human-like skill, which is useful for robotics and automation in many industries.

Abstract

Being-H0, a Vision-Language-Action model, uses human videos and physical instruction tuning to achieve high dexterity in manipulation tasks and scales well with data and model size.