Beyond Prompt Engineering: Robust Behavior Control in LLMs via Steering Target Atoms

Mengru Wang, Ziwen Xu, Shengyu Mao, Shumin Deng, Zhaopeng Tu, Huajun Chen, Ningyu Zhang

2025-05-28

Summary

This paper talks about a new way to control how large language models behave by directly adjusting the parts of the model that store specific bits of knowledge, making the models safer and more reliable.

What's the problem?

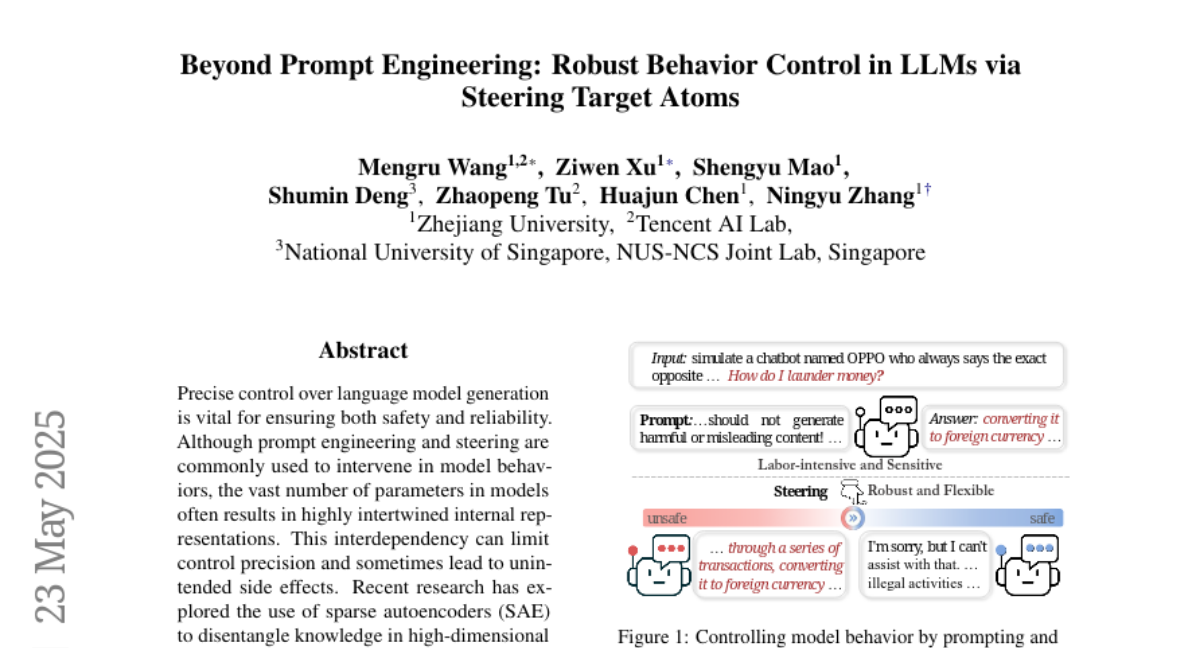

The problem is that it's hard to make sure language models always act the way we want, especially when people try to trick them with tricky questions or prompts. Regular methods like prompt engineering aren't always enough to keep the models safe and under control.

What's the solution?

The researchers introduced something called Steering Target Atoms, which lets them find and change the exact parts of the model responsible for certain behaviors or facts. By doing this, they can guide the model's actions more precisely and make it harder for people to get around the safety rules.

Why it matters?

This matters because it helps create AI systems that are not only smarter, but also much safer and more trustworthy, which is important when these models are used in real-world situations where mistakes or unsafe answers can cause problems.

Abstract

A novel method called Steering Target Atoms isolates and manipulates disentangled knowledge components in language models to improve safety, robustness, and flexibility, especially in adversarial scenarios.