BlobCtrl: A Unified and Flexible Framework for Element-level Image Generation and Editing

Yaowei Li, Lingen Li, Zhaoyang Zhang, Xiaoyu Li, Guangzhi Wang, Hongxiang Li, Xiaodong Cun, Ying Shan, Yuexian Zou

2025-03-18

Summary

This paper introduces BlobCtrl, a diffusion-based framework that handles both generating and editing individual elements of an image in one model. It represents each element as a probabilistic blob, which separates where the element is, what it depicts, and its identity.

What's the problem?

Element-level visual manipulation is essential in digital content creation, but current diffusion-based methods lack the precision and flexibility that traditional tools offer when working on individual elements.

What's the solution?

BlobCtrl uses blobs as visual primitives to decouple spatial location, semantic content, and identity information. It combines three components: a dual-branch diffusion architecture with hierarchical feature fusion that integrates foreground and background, a self-supervised training paradigm with tailored data augmentation and score functions, and controllable dropout strategies that balance fidelity and diversity. The authors also introduce BlobData for large-scale training and BlobBench for systematic evaluation.

Why it matters?

According to the authors' experiments, BlobCtrl excels in various element-level manipulation tasks while maintaining computational efficiency. This makes it a practical option for precise and flexible visual content creation, and the released dataset and benchmark are intended to support further research.

Abstract

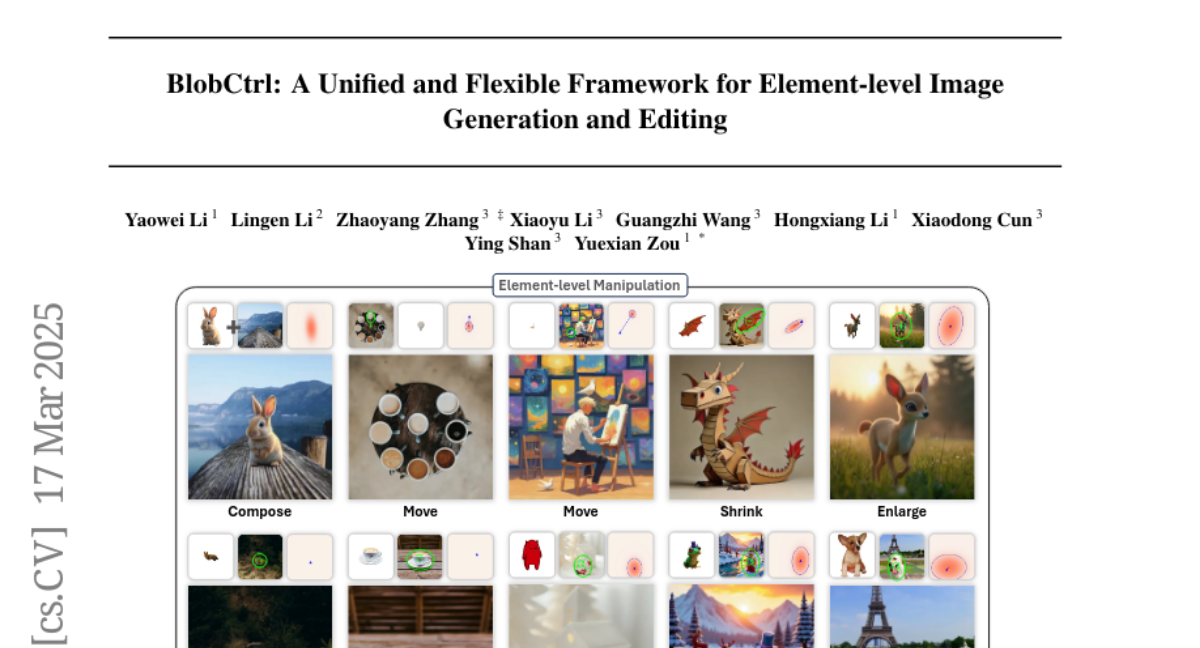

Element-level visual manipulation is essential in digital content creation, but current diffusion-based methods lack the precision and flexibility of traditional tools. In this work, we introduce BlobCtrl, a framework that unifies element-level generation and editing using a probabilistic blob-based representation. By employing blobs as visual primitives, our approach effectively decouples and represents spatial location, semantic content, and identity information, enabling precise element-level manipulation. Our key contributions include: 1) a dual-branch diffusion architecture with hierarchical feature fusion for seamless foreground-background integration; 2) a self-supervised training paradigm with tailored data augmentation and score functions; and 3) controllable dropout strategies to balance fidelity and diversity. To support further research, we introduce BlobData for large-scale training and BlobBench for systematic evaluation. Experiments show that BlobCtrl excels in various element-level manipulation tasks while maintaining computational efficiency, offering a practical solution for precise and flexible visual content creation. Project page: https://liyaowei-stu.github.io/project/BlobCtrl/
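To make the idea of a blob as a visual primitive more concrete, here is a minimal sketch of how a single blob could be stored and rendered as a soft opacity map. The abstract does not give the parameterization, so everything here is an assumption for illustration: the rotated 2D Gaussian form, the `Blob` fields, and the helper names `blob_opacity`, `rasterize`, and `translate` are hypothetical and are not taken from the BlobCtrl code.

```python
import math
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Blob:
    """Hypothetical blob: a rotated 2D Gaussian (assumed form, not from the paper)."""
    cx: float     # center x, in pixels
    cy: float     # center y, in pixels
    sx: float     # standard deviation along the blob's first axis
    sy: float     # standard deviation along the blob's second axis
    theta: float  # rotation of the first axis, in radians


def blob_opacity(blob: Blob, x: float, y: float) -> float:
    """Unnormalized Gaussian falloff in (0, 1]; equals 1 at the blob center."""
    dx, dy = x - blob.cx, y - blob.cy
    c, s = math.cos(blob.theta), math.sin(blob.theta)
    u = c * dx + s * dy    # offset along the first axis
    v = -s * dx + c * dy   # offset along the second axis
    return math.exp(-0.5 * ((u / blob.sx) ** 2 + (v / blob.sy) ** 2))


def rasterize(blob: Blob, width: int, height: int) -> list[list[float]]:
    """Render the blob as a height x width grid of opacity values."""
    return [[blob_opacity(blob, x, y) for x in range(width)] for y in range(height)]


def translate(blob: Blob, dx: float, dy: float) -> Blob:
    """Move the blob without touching its shape: location is edited on its own."""
    return replace(blob, cx=blob.cx + dx, cy=blob.cy + dy)


blob = Blob(cx=4.0, cy=4.0, sx=2.0, sy=1.0, theta=0.0)
grid = rasterize(blob, width=9, height=9)
moved = translate(blob, dx=2.0, dy=0.0)
```

In this sketch, an edit such as moving an element only changes `cx` and `cy`, while the shape parameters stay fixed. That mirrors, in a very simplified way, the abstract's point that spatial location is represented separately from content and identity; how BlobCtrl actually encodes and conditions on these signals is not covered here.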