Bridging the Gap: Studio-like Avatar Creation from a Monocular Phone Capture

ShahRukh Athar, Shunsuke Saito, Zhengyu Yang, Stanislav Pidhorsky, Chen Cao

2024-07-30

Summary

This paper discusses a new method for creating high-quality, realistic avatars from simple phone captures, making it easier to generate lifelike 3D representations without the need for expensive studio equipment.

What's the problem?

Creating realistic 3D avatars usually requires long capture sessions with complex and costly studio equipment. Recent neural methods can build photorealistic, animatable 3D avatars from a quick phone scan, but the results have the capture-time lighting baked in, lack fine facial detail, and have missing regions such as the back of the ears. As a result, these avatars do not look as good as those made in professional studios.

What's the solution?

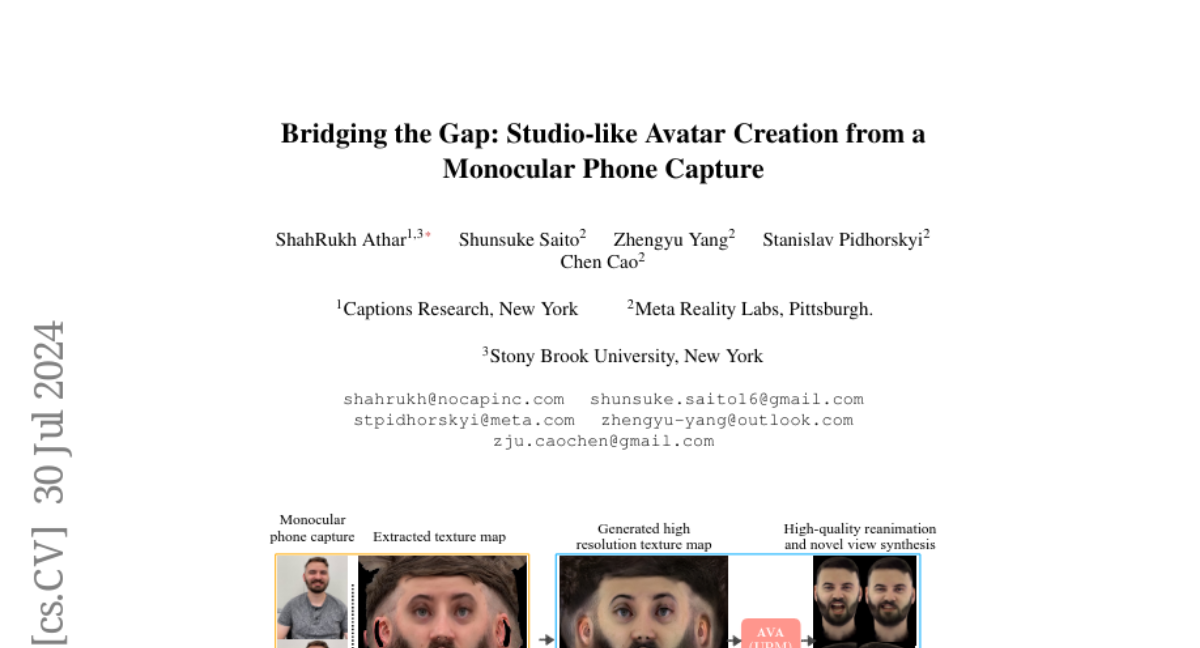

To overcome these challenges, the authors propose a method that generates studio-like, evenly lit texture maps from short videos taken with a regular smartphone. They first represent the phone texture maps in the W^+ latent space of a StyleGAN2 model, which allows near-perfect reconstruction. They then finetune the StyleGAN2 using a very small set of studio-captured textures as an adversarial training signal, so that its output looks like a studio capture. Finally, a diffusion model guided by the image gradients of the phone-captured texture super-resolves the result to sharpen facial details. Together, these steps produce more realistic and complete avatars with uniform lighting from casual phone videos.

Why it matters?

This research is important because it democratizes the creation of realistic avatars, allowing more people to generate high-quality 3D representations without needing access to expensive technology. This could have applications in gaming, virtual reality, and social media, where personalized avatars are increasingly popular.

Abstract

Creating photorealistic avatars for individuals traditionally involves extensive capture sessions with complex and expensive studio devices like the LightStage system. While recent strides in neural representations have enabled the generation of photorealistic and animatable 3D avatars from quick phone scans, they have the capture-time lighting baked-in, lack facial details and have missing regions in areas such as the back of the ears. Thus, they lag in quality compared to studio-captured avatars. In this paper, we propose a method that bridges this gap by generating studio-like illuminated texture maps from short, monocular phone captures. We do this by parameterizing the phone texture maps using the W^+ space of a StyleGAN2, enabling near-perfect reconstruction. Then, we finetune a StyleGAN2 by sampling in the W^+ parameterized space using a very small set of studio-captured textures as an adversarial training signal. To further enhance the realism and accuracy of facial details, we super-resolve the output of the StyleGAN2 using a carefully designed diffusion model that is guided by image gradients of the phone-captured texture map. Once trained, our method excels at producing studio-like facial texture maps from casual monocular smartphone videos. Demonstrating its capabilities, we showcase the generation of photorealistic, uniformly lit, complete avatars from monocular phone captures. The project page can be found at http://shahrukhathar.github.io/2024/07/22/Bridging.html.
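
To give some intuition for why a W^+ parameterization can reconstruct a texture almost perfectly, here is a minimal toy sketch. It is not the authors' code and does not use StyleGAN2: the "generator" is a hypothetical stack of random linear layers, and all names (`make_generator`, `generate`, `invert`) and sizes are assumptions chosen for illustration. It fits a target by gradient descent twice, once with a single latent code shared by every layer (analogous to W) and once with a separate code per layer (analogous to W^+).

```python
import random


def make_generator(num_layers, latent_dim, out_dim, seed=0):
    """Toy stand-in for a generator: one random linear map per layer."""
    rng = random.Random(seed)
    return [
        [[rng.gauss(0.0, 1.0) for _ in range(latent_dim)] for _ in range(out_dim)]
        for _ in range(num_layers)
    ]


def generate(layers, codes):
    """Output is the sum over layers of (layer matrix) x (that layer's code)."""
    out = [0.0] * len(layers[0])
    for matrix, code in zip(layers, codes):
        for i, row in enumerate(matrix):
            out[i] += sum(a * w for a, w in zip(row, code))
    return out


def mse(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def invert(layers, target, shared, steps=3000, lr=0.02):
    """Fit latent codes to a target by gradient descent on the MSE.

    shared=True  -> every layer uses the same code (W-like).
    shared=False -> every layer has its own code (W+-like).
    """
    latent_dim = len(layers[0][0])
    codes = [[0.0] * latent_dim for _ in layers]
    n = len(target)
    for _ in range(steps):
        residual = [o - t for o, t in zip(generate(layers, codes), target)]
        grads = []
        for matrix in layers:
            g = [0.0] * latent_dim
            for row, r in zip(matrix, residual):
                for j, a in enumerate(row):
                    g[j] += 2.0 * r * a / n
            grads.append(g)
        if shared:
            # A shared code receives the sum of all per-layer gradients.
            total = [sum(g[j] for g in grads) for j in range(latent_dim)]
            grads = [total] * len(layers)
        for code, g in zip(codes, grads):
            for j in range(latent_dim):
                code[j] -= lr * g[j]
    return codes


# Hypothetical sizes: 4 layers, 4-dim codes, 6-dim "texture".
layers = make_generator(num_layers=4, latent_dim=4, out_dim=6, seed=0)
target_rng = random.Random(1)
target = [target_rng.gauss(0.0, 1.0) for _ in range(6)]

err_shared = mse(generate(layers, invert(layers, target, shared=True)), target)
err_plus = mse(generate(layers, invert(layers, target, shared=False)), target)
print("shared code (W-like) error:    ", err_shared)
print("per-layer codes (W+-like) error:", err_plus)
```

In this toy setting the per-layer parameterization has more free variables than output values, so it can match the target essentially exactly, while the shared code cannot. A real StyleGAN2 inversion works with a nonlinear network and typically adds perceptual losses, but the extra freedom of per-layer codes plays a similar role.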