Build-A-Scene: Interactive 3D Layout Control for Diffusion-Based Image Generation

Abdelrahman Eldesokey, Peter Wonka

2024-08-28

Summary

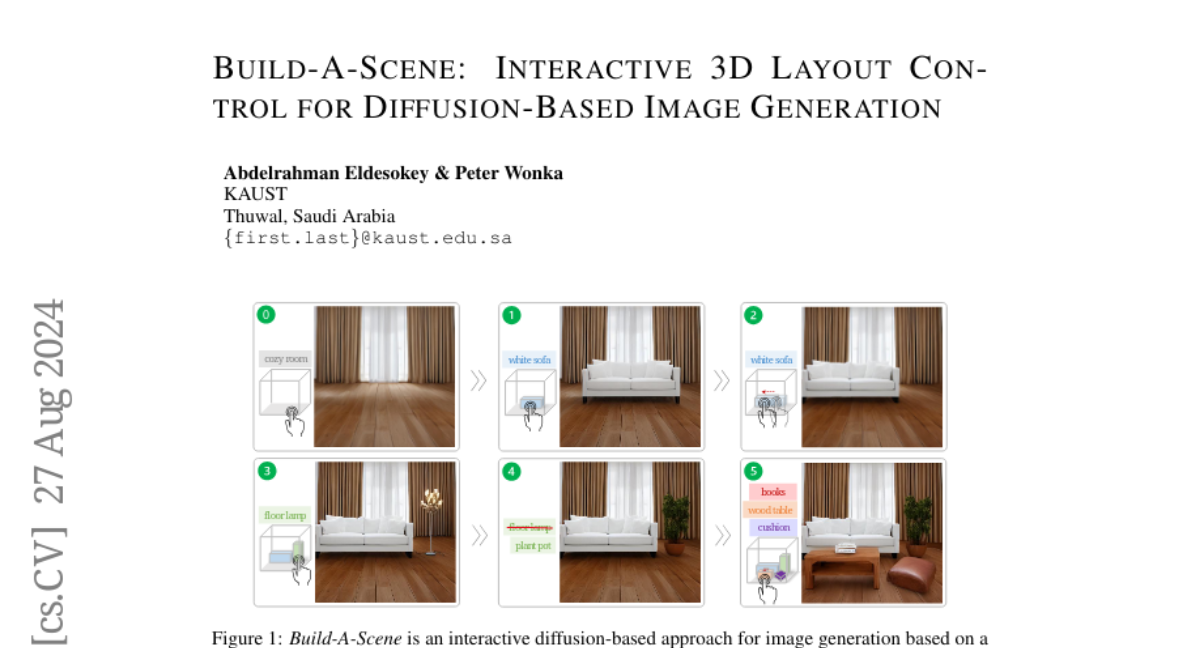

This paper presents Build-A-Scene, a new method for generating images from text descriptions that allows users to interactively control the 3D layout of objects in a scene.

What's the problem?

Existing layout-control methods for text-to-image generation are limited to 2D layouts, require the user to supply a static layout up front, and fail to preserve the generated image when the layout changes. That makes them a poor fit for applications that need per-object 3D control and iterative refinement, such as interior design and complex scene creation.

What's the solution?

The authors introduce a diffusion-based approach that replaces the 2D boxes traditionally used for layout control with 3D boxes. Generation becomes a multi-stage process: at each stage, the user can insert, change, or move an object in 3D while objects from earlier stages are preserved. This is achieved through a proposed Dynamic Self-Attention (DSA) module and a consistent 3D object translation strategy. Experiments show that the approach doubles the object generation success rate over standard depth-conditioned T2I methods and preserves objects under layout changes better than the other methods compared.
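
To make the idea of a staged 3D layout concrete, here is a minimal sketch of how a list of 3D boxes could be rasterized into a coarse depth map of the kind a depth-conditioned T2I model consumes. This is an illustration under stated assumptions, not the authors' implementation: the `Box3D` class, the `render_depth`, `move`, and `covered` helpers, the pinhole camera parameters, and the front-face-only rendering are all hypothetical, and the DSA module is not modeled at all.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Box3D:
    """Axis-aligned box in camera coordinates (hypothetical layout primitive)."""
    name: str
    x: float  # center, pointing right
    y: float  # center, pointing down
    z: float  # center depth, pointing away from the camera
    w: float  # extent along x
    h: float  # extent along y
    d: float  # extent along z


def render_depth(boxes, width=64, height=64, focal=64.0, far=10.0):
    """Z-buffer the front face of each box into a height x width depth map."""
    depth = [[far] * width for _ in range(height)]
    cx, cy = width / 2.0, height / 2.0
    for b in boxes:
        z_front = b.z - b.d / 2.0
        if z_front <= 0:
            continue  # front face is at or behind the camera plane
        # Pinhole projection of the front-face rectangle.
        u0 = int(round(focal * (b.x - b.w / 2.0) / z_front + cx))
        u1 = int(round(focal * (b.x + b.w / 2.0) / z_front + cx))
        v0 = int(round(focal * (b.y - b.h / 2.0) / z_front + cy))
        v1 = int(round(focal * (b.y + b.h / 2.0) / z_front + cy))
        for v in range(max(v0, 0), min(v1, height)):
            for u in range(max(u0, 0), min(u1, width)):
                if z_front < depth[v][u]:  # keep the nearest surface
                    depth[v][u] = z_front
    return depth


def move(boxes, name, dx=0.0, dy=0.0, dz=0.0):
    """Return a new layout with one box translated; other boxes are untouched."""
    return [
        replace(b, x=b.x + dx, y=b.y + dy, z=b.z + dz) if b.name == name else b
        for b in boxes
    ]


def covered(depth, far=10.0):
    """Count pixels that some box occupies."""
    return sum(1 for row in depth for value in row if value < far)


# Each stage is a full layout; earlier stages stay available for comparison.
stage1 = [Box3D("sofa", 0.0, 0.0, 4.0, 2.0, 1.0, 1.0)]
stage2 = stage1 + [Box3D("lamp", 1.5, -0.5, 3.0, 0.4, 1.0, 0.4)]
stage3 = move(stage2, "sofa", dz=-1.0)  # pull the sofa toward the camera
```

Keeping every stage as an immutable layout mirrors the multi-stage framing described above: moving the sofa in `stage3` produces a new layout while `stage1` and `stage2` remain unchanged, and the sofa's footprint in the depth map grows as it approaches the camera.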

Why does it matter?

This research matters because it enhances the way we can create and manipulate visual scenes using AI. By allowing interactive control over 3D layouts, Build-A-Scene can be particularly useful in fields like gaming, virtual reality, and design, making it easier for users to visualize and create complex environments.

Abstract

We propose a diffusion-based approach for Text-to-Image (T2I) generation with interactive 3D layout control. Layout control has been widely studied to alleviate the shortcomings of T2I diffusion models in understanding objects' placement and relationships from text descriptions. Nevertheless, existing approaches for layout control are limited to 2D layouts, require the user to provide a static layout beforehand, and fail to preserve generated images under layout changes. This makes these approaches unsuitable for applications that require 3D object-wise control and iterative refinements, e.g., interior design and complex scene generation. To this end, we leverage the recent advancements in depth-conditioned T2I models and propose a novel approach for interactive 3D layout control. We replace the traditional 2D boxes used in layout control with 3D boxes. Furthermore, we revamp the T2I task as a multi-stage generation process, where at each stage, the user can insert, change, and move an object in 3D while preserving objects from earlier stages. We achieve this through our proposed Dynamic Self-Attention (DSA) module and the consistent 3D object translation strategy. Experiments show that our approach can generate complicated scenes based on 3D layouts, boosting the object generation success rate over the standard depth-conditioned T2I methods by 2x. Moreover, it outperforms other methods in comparison in preserving objects under layout changes. Project Page: https://abdo-eldesokey.github.io/build-a-scene/