Can LLMs Deceive CLIP? Benchmarking Adversarial Compositionality of Pre-trained Multimodal Representation via Text Updates

Jaewoo Ahn, Heeseung Yun, Dayoon Ko, Gunhee Kim

2025-05-30

Summary

This paper talks about testing whether AI models that connect images and text, like CLIP, can be tricked by sneaky or misleading text, and how to make these attacks even more effective and varied.

What's the problem?

The problem is that models like CLIP, which are supposed to understand both pictures and words together, might not be as smart as we think—they can sometimes be fooled by carefully crafted text that doesn't match the image, which shows weaknesses in how they understand complex combinations of information.

What's the solution?

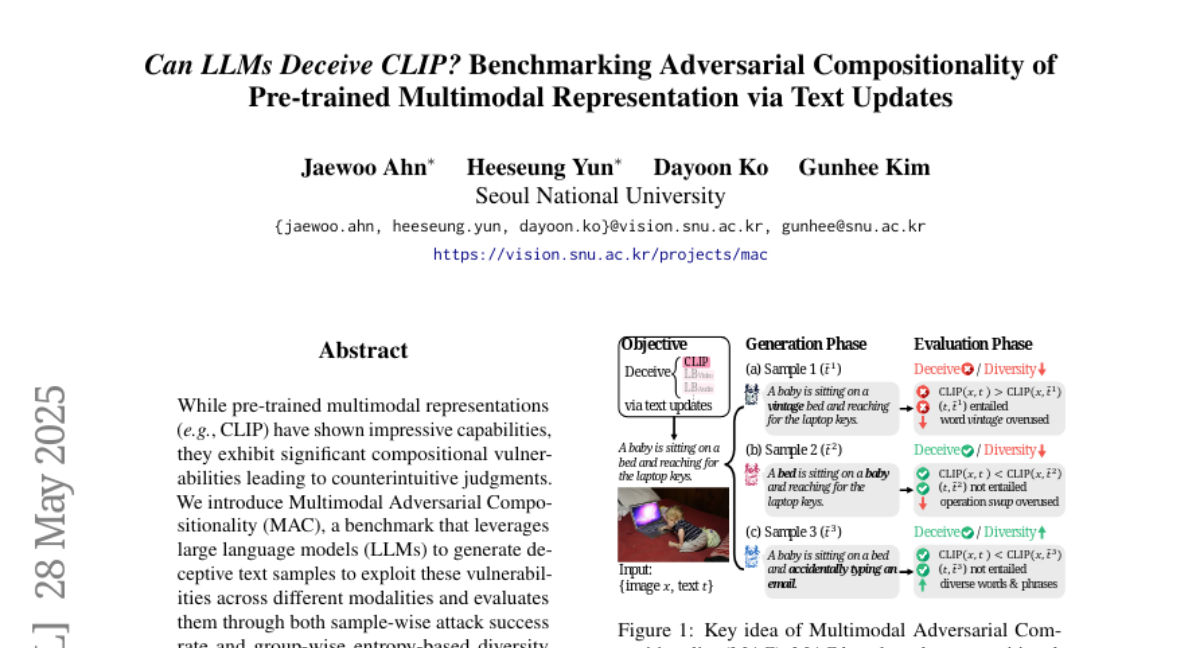

The researchers created a special test that uses tricky text to try and confuse these models, and they also came up with a way to train the AI to get better at making these deceptive examples, which helps reveal just how vulnerable the models are.

Why it matters?

This is important because it shows that even advanced AI can be tricked, which could be a problem for things like online safety or fake news detection, and understanding these weaknesses is the first step to making AI systems more trustworthy and secure.

Abstract

A benchmark using deceptive text samples to evaluate compositional vulnerabilities in multimodal representations is introduced, and a self-training approach improves zero-shot methods by enhancing attack success and sample diversity.