Can Multimodal Foundation Models Understand Schematic Diagrams? An Empirical Study on Information-Seeking QA over Scientific Papers

Yilun Zhao, Chengye Wang, Chuhan Li, Arman Cohan

2025-07-16

Summary

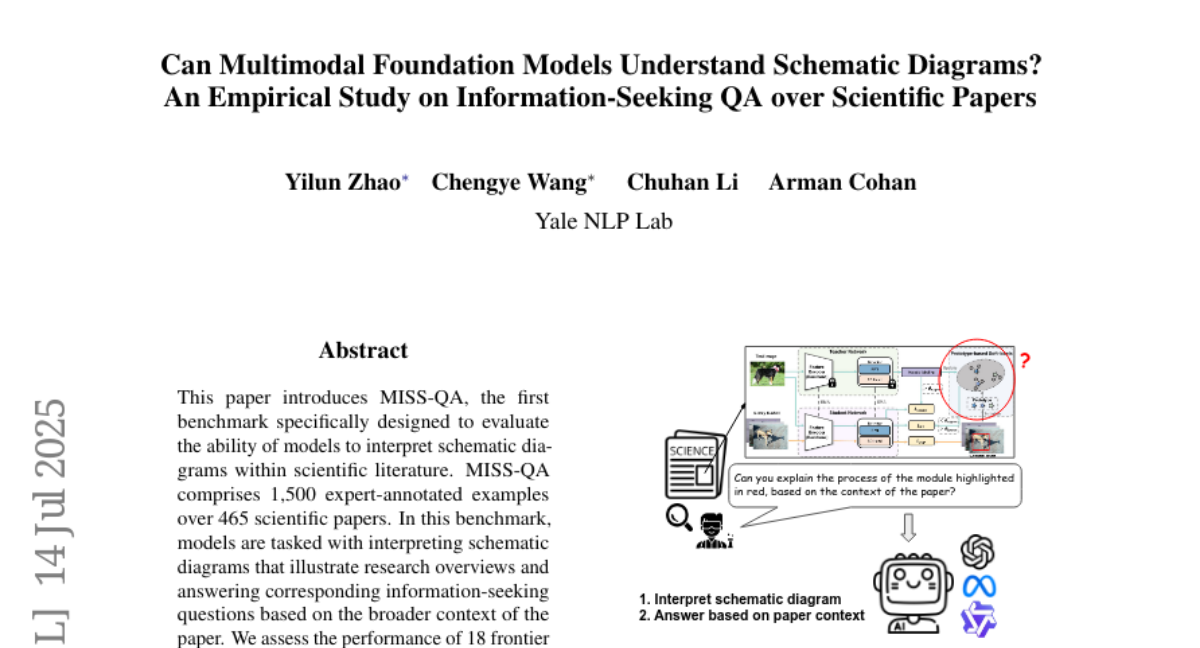

This paper introduces MISS-QA, a new benchmark that tests how well multimodal AI models can understand and answer questions about schematic diagrams in scientific papers.

What's the problem?

AI models usually handle text and simple images well, but they struggle to interpret the complex diagrams that explain scientific ideas. These diagrams are essential for fully understanding a research paper.

What's the solution?

The authors created MISS-QA, a curated dataset in which AI models must interpret a schematic diagram in the context of the full scientific paper and answer specific questions about it. They tested 18 leading models and compared their performance with that of humans to find out where the models still fall short.

Why does it matter?

AI can help researchers and students read and explain technical papers only if it can understand the diagrams in them. Identifying where current models fail helps guide improvements toward AI that comprehends complex multimodal information in science.

Abstract

A benchmark evaluates multimodal models' ability to interpret schematic diagrams in scientific literature, revealing performance gaps compared to human experts.