CAPTURe: Evaluating Spatial Reasoning in Vision Language Models via Occluded Object Counting

Atin Pothiraj, Elias Stengel-Eskin, Jaemin Cho, Mohit Bansal

2025-04-23

Summary

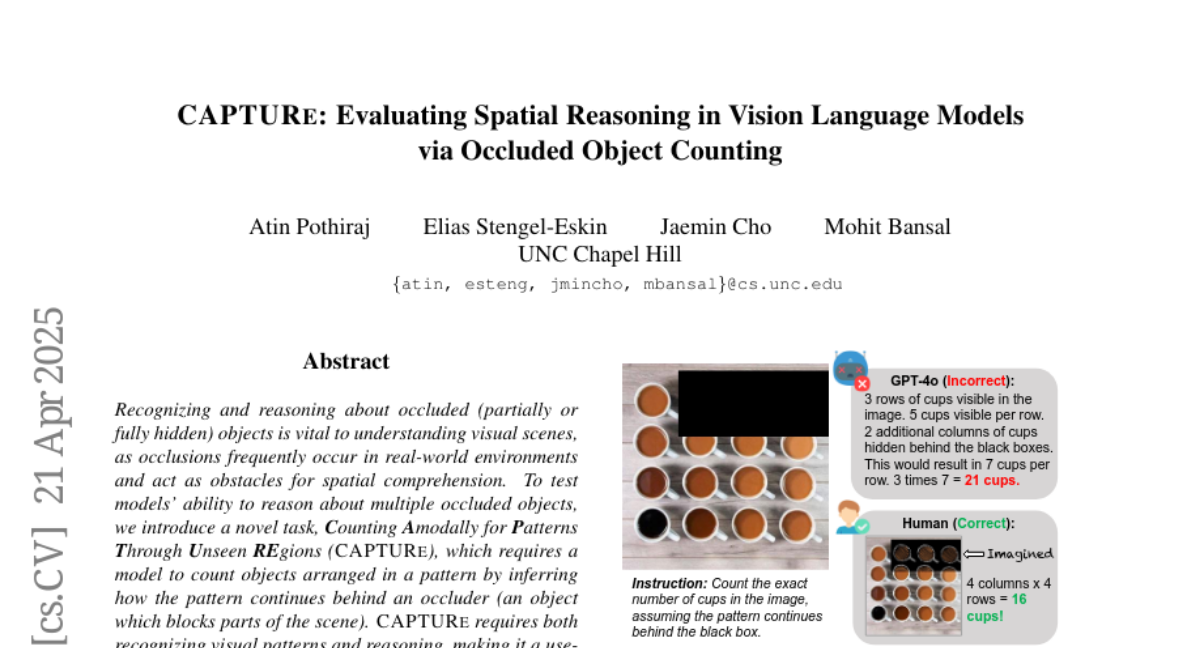

This paper talks about CAPTURe, a new test designed to see how well AI models that understand both pictures and words can count objects, especially when some of those objects are hidden or blocked from view.

What's the problem?

The problem is that even though vision-language models are getting better at recognizing and describing things in images, they still have a hard time figuring out how many objects are present when some are partially covered or hidden, something humans are usually pretty good at.

What's the solution?

The researchers created the CAPTURe task, which challenges these AI models by giving them images where objects overlap or are blocked by other things, and then asks the models to count how many objects are really there. This test helps measure how well these models can reason about space and hidden things.

Why it matters?

This is important because it shows that current AI models still have a lot to learn when it comes to understanding complex visual scenes the way people do. By identifying these weaknesses, researchers can work on improving AI so it can be more helpful and accurate in real-world situations where not everything is clearly visible.

Abstract

A novel task, CAPTURe, is introduced to evaluate vision-language models' ability to count objects in patterns with occlusions, revealing that models struggle significantly compared to humans.