Case2Code: Learning Inductive Reasoning with Synthetic Data

Yunfan Shao, Linyang Li, Yichuan Ma, Peiji Li, Demin Song, Qinyuan Cheng, Shimin Li, Xiaonan Li, Pengyu Wang, Qipeng Guo, Hang Yan, Xipeng Qiu, Xuanjing Huang, Dahua Lin

2024-07-18

Summary

This paper introduces Case2Code, a task and synthetic-data approach that teaches large language models (LLMs) inductive reasoning by having them infer a program from examples of its inputs and outputs.

What's the problem?

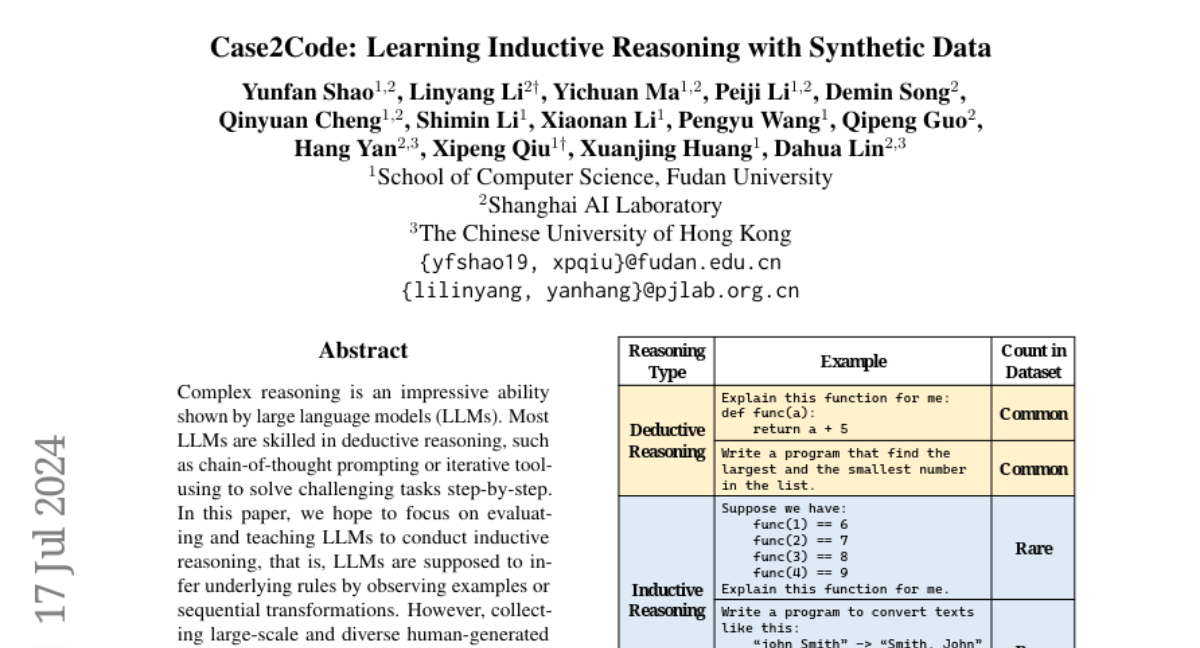

While LLMs are good at deductive reasoning (solving problems step-by-step), they struggle with inductive reasoning, which involves figuring out general rules from specific examples. Collecting enough real-world examples for training LLMs in inductive reasoning is difficult and time-consuming, making it hard to improve their performance in this area.

What's the solution?

To tackle this issue, the authors created the Case2Code task, which uses synthetic data generated from executable programs. They designed a dataset that includes various input-output pairs from these programs, allowing LLMs to learn how to infer the underlying code implementations based on these examples. The researchers first evaluated existing LLMs on this task and then trained them using the synthetic data to improve their inductive reasoning skills. The results showed that this approach not only enhanced the LLMs' performance on the Case2Code task but also improved their overall coding abilities.
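The data-generation idea described above can be illustrated with a minimal sketch. It is not the authors' pipeline: the helper names `synthesize_cases` and `build_prompt`, the toy program `count_vowels`, the hand-written inputs, and the prompt wording are all assumptions made for illustration. The sketch executes a program on candidate inputs, records the resulting input-output pairs, and formats them as a prompt asking a model to recover the implementation.

```python
from typing import Any, Callable


def synthesize_cases(func: Callable[..., Any], inputs: list[tuple]) -> list[dict]:
    """Run func on each argument tuple and record the observed output.

    Inputs that make the program raise are skipped, so every kept case
    reflects real, executable behavior of the program.
    """
    cases = []
    for args in inputs:
        try:
            cases.append({"input": args, "output": func(*args)})
        except Exception:
            continue  # invalid input for this program; drop it
    return cases


def build_prompt(cases: list[dict]) -> str:
    """Format the I/O cases as an induction prompt (wording is hypothetical)."""
    lines = ["Write a Python function that satisfies these examples:"]
    for case in cases:
        lines.append(f"input={case['input']!r} -> output={case['output']!r}")
    return "\n".join(lines)


# Toy "collected program" whose implementation the model should infer.
def count_vowels(text: str) -> int:
    return sum(ch in "aeiou" for ch in text.lower())


# Hand-written candidate inputs; the last one is invalid and gets filtered out.
example_inputs = [("hello",), ("Case2Code",), ("",), (42,)]
cases = synthesize_cases(count_vowels, example_inputs)
prompt = build_prompt(cases)
print(prompt)
```

In this sketch the original source of `count_vowels` would serve as the training target, and `prompt` as the model input. Because the outputs come from actually running the program, the pairs are correct by construction.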

Why does it matter?

This research matters because it offers a scalable way to train LLMs in inductive reasoning, a skill that programming and problem-solving both rely on. Because the training data is synthesized by executing programs, the authors show that a model's inductive and coding abilities can be improved without large amounts of human-written examples, which points toward language models that are better at understanding and generating code.

Abstract

Complex reasoning is an impressive ability shown by large language models (LLMs). Most LLMs are skilled in deductive reasoning, using techniques such as chain-of-thought prompting or iterative tool use to solve challenging tasks step by step. In this paper, we focus on evaluating and teaching LLMs to conduct inductive reasoning, that is, to infer underlying rules by observing examples or sequential transformations. However, collecting large-scale and diverse human-generated inductive data is challenging. We focus on data synthesis in the code domain and propose a Case2Code task that exploits the expressiveness and correctness of programs. Specifically, we collect a diverse set of executable programs, synthesize input-output transformations for each program, and force LLMs to infer the underlying code implementations based on the synthetic I/O cases. We first evaluate representative LLMs on the synthesized Case2Code task and demonstrate that case-to-code induction is challenging for LLMs. Then, we synthesize large-scale Case2Code training samples to train LLMs to perform inductive reasoning. Experimental results show that such induction training not only improves in-distribution Case2Code performance but also enhances various coding abilities of the trained LLMs, demonstrating the great potential of learning inductive reasoning via synthetic data.
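One plausible way to score a model's answer on such a task is to execute the predicted code against the given I/O cases. The following is a minimal sketch under that assumption, not the paper's evaluation harness: the function `passes_all_cases`, the entry-point name `f`, and the sample cases are hypothetical.

```python
def passes_all_cases(candidate_source: str, func_name: str, cases: list[tuple]) -> bool:
    """Return True if the candidate code reproduces every (args, expected) case.

    Note: exec runs arbitrary code. A real harness should run candidates in a
    sandbox with time limits; this sketch omits that for brevity.
    """
    namespace: dict = {}
    try:
        exec(candidate_source, namespace)  # define the candidate function
        func = namespace[func_name]
        return all(func(*args) == expected for args, expected in cases)
    except Exception:
        return False  # syntax errors, missing function, or runtime failures


# Hypothetical I/O cases produced by a hidden program that squares its input.
io_cases = [((2,), 4), ((3,), 9), ((-1,), 1)]

good_candidate = "def f(x):\n    return x * x\n"
bad_candidate = "def f(x):\n    return x + 2\n"  # matches the first case only

print(passes_all_cases(good_candidate, "f", io_cases))
print(passes_all_cases(bad_candidate, "f", io_cases))
```

The second candidate shows why several cases are needed: a rule that fits one example can still be the wrong generalization.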