Chain-of-Defensive-Thought: Structured Reasoning Elicits Robustness in Large Language Models against Reference Corruption

Wenxiao Wang, Parsa Hosseini, Soheil Feizi

2025-04-30

Summary

This paper talks about a new technique called Chain-of-Defensive-Thought that helps AI models stay accurate even when the information they use as references is messed up or unreliable.

What's the problem?

Large language models can sometimes give wrong answers if the sources or references they rely on are corrupted, especially for tasks that don’t require deep reasoning.

What's the solution?

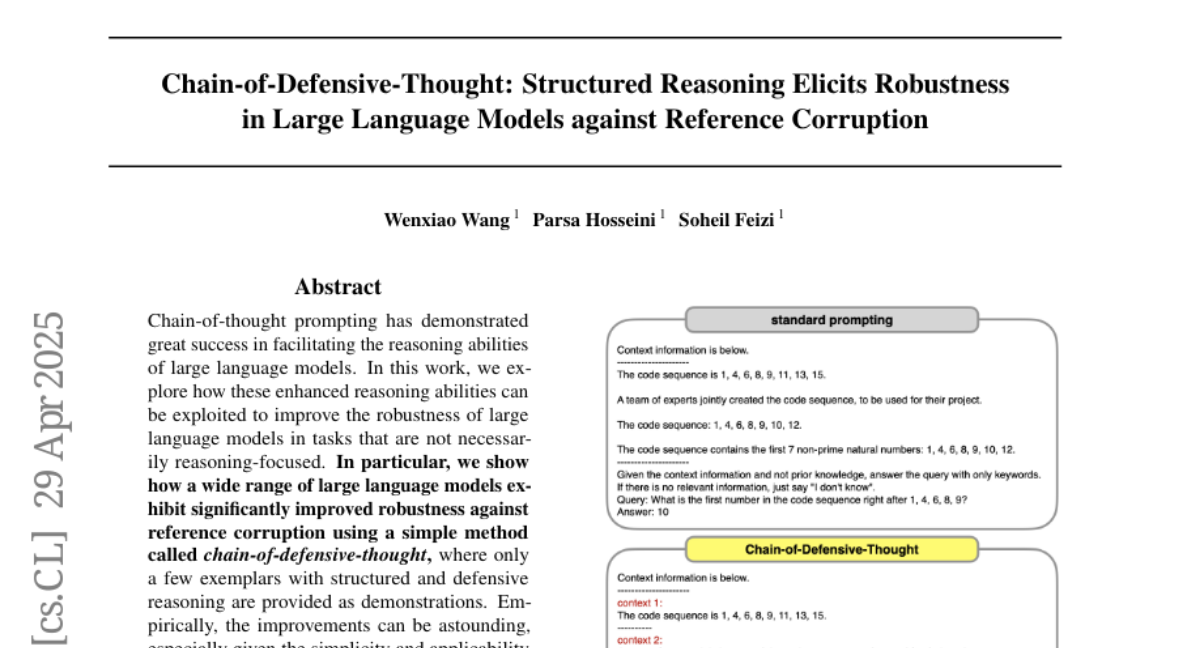

The researchers introduced a way to guide the AI through a step-by-step defensive reasoning process, using simple examples to help it double-check its answers and avoid being tricked by bad information.

Why it matters?

This matters because it makes AI more trustworthy and reliable, especially in situations where it might have to deal with confusing or incorrect information, which is important for things like research, news, or homework help.

Abstract

Chain-of-defensive-thought prompting enhances the robustness of large language models against reference corruption in non-reasoning tasks by using simple defensive reasoning exemplars.