Chapter-Llama: Efficient Chaptering in Hour-Long Videos with LLMs

Lucas Ventura, Antoine Yang, Cordelia Schmid, Gül Varol

2025-04-02

Summary

This paper presents a method for automatically dividing long videos into titled chapters, making it easier to find specific content.

What's the problem?

Long videos can be hard to navigate because they lack clear divisions and titles.

What's the solution?

The researchers created 'Chapter-Llama,' which feeds a large language model the video's timestamped speech transcripts and captions of selected frames, and trains it to output chapter boundaries with relevant titles.

Why does it matter?

Chapters let viewers jump directly to the part of a long video they need. The method also improves substantially on prior work, reaching an F1 score of 45.3 versus 26.7 on the VidChapters-7M benchmark.

Abstract

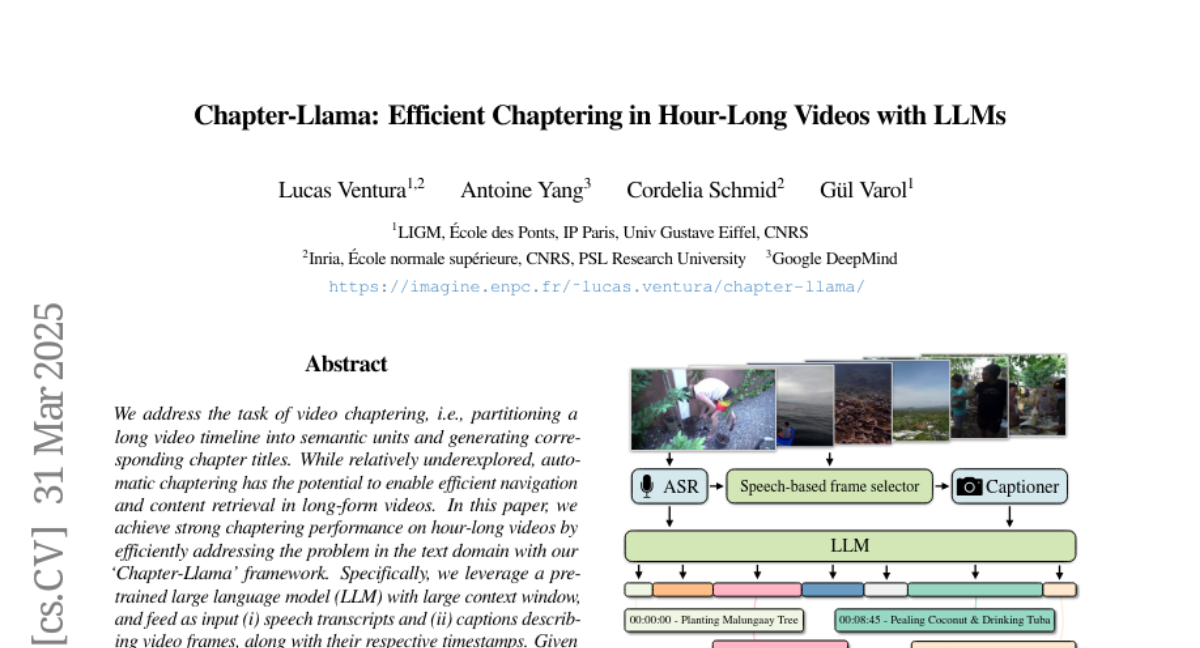

We address the task of video chaptering, i.e., partitioning a long video timeline into semantic units and generating corresponding chapter titles. While relatively underexplored, automatic chaptering has the potential to enable efficient navigation and content retrieval in long-form videos. In this paper, we achieve strong chaptering performance on hour-long videos by efficiently addressing the problem in the text domain with our 'Chapter-Llama' framework. Specifically, we leverage a pretrained large language model (LLM) with a large context window, and feed as input (i) speech transcripts and (ii) captions describing video frames, along with their respective timestamps. Given the inefficiency of exhaustively captioning all frames, we propose a lightweight speech-guided frame selection strategy based on speech transcript content, and experimentally demonstrate remarkable advantages. We train the LLM to output timestamps for the chapter boundaries, as well as free-form chapter titles. This simple yet powerful approach scales to processing hour-long videos in a single forward pass. Our results demonstrate substantial improvements (e.g., 45.3 vs. 26.7 F1 score) over the state of the art on the recent VidChapters-7M benchmark. To promote further research, we release our code and models at our project page.
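To make the text-domain formulation concrete, the following is a minimal Python sketch of how timestamped speech transcripts and frame captions might be interleaved into a single LLM input, and how chapter lines might be parsed from the model's output. The `HH:MM:SS` line format, the `ASR`/`Caption` labels, and the helper names `build_prompt` and `parse_chapters` are assumptions made for illustration; the abstract does not specify the exact serialization, and the speech-guided frame selection step is not shown here.

```python
import re
from typing import List, Tuple

def fmt(seconds: int) -> str:
    """Format a second offset as HH:MM:SS (assumed timestamp format)."""
    h, rem = divmod(int(seconds), 3600)
    m, s = divmod(rem, 60)
    return f"{h:02d}:{m:02d}:{s:02d}"

def build_prompt(speech: List[Tuple[int, str]],
                 captions: List[Tuple[int, str]]) -> str:
    """Interleave speech and caption lines in chronological order.

    Each input is a list of (start_second, text) pairs. The 'ASR' and
    'Caption' labels are hypothetical, not taken from the paper.
    """
    tagged = [(t, "ASR", text) for t, text in speech]
    tagged += [(t, "Caption", text) for t, text in captions]
    tagged.sort(key=lambda item: item[0])  # stable sort by timestamp only
    return "\n".join(f"{fmt(t)} {kind}: {text}" for t, kind, text in tagged)

# Assumed output format: one "HH:MM:SS Title" line per chapter.
CHAPTER_RE = re.compile(r"^(\d{2}):(\d{2}):(\d{2})\s+(.+)$")

def parse_chapters(output: str) -> List[Tuple[int, str]]:
    """Extract (start_second, title) pairs, skipping malformed lines."""
    chapters = []
    for line in output.splitlines():
        match = CHAPTER_RE.match(line.strip())
        if match:
            h, m, s, title = match.groups()
            chapters.append((int(h) * 3600 + int(m) * 60 + int(s), title.strip()))
    return chapters

speech = [(0, "welcome"), (65, "next topic")]
captions = [(60, "a person at a whiteboard")]
prompt = build_prompt(speech, captions)
chapters = parse_chapters("00:00:00 Intro\n00:01:00 Whiteboard demo\nnoise")
```

Because both inputs and outputs are plain text with timestamps, an entire hour-long video can in principle be represented as one sequence, which is what allows processing in a single forward pass when the context window is large enough.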