ChARM: Character-based Act-adaptive Reward Modeling for Advanced Role-Playing Language Agents

Feiteng Fang, Ting-En Lin, Yuchuan Wu, Xiong Liu, Xiang Huang, Dingwei Chen, Jing Ye, Haonan Zhang, Liang Zhu, Hamid Alinejad-Rokny, Min Yang, Fei Huang, Yongbin Li

2025-06-02

Summary

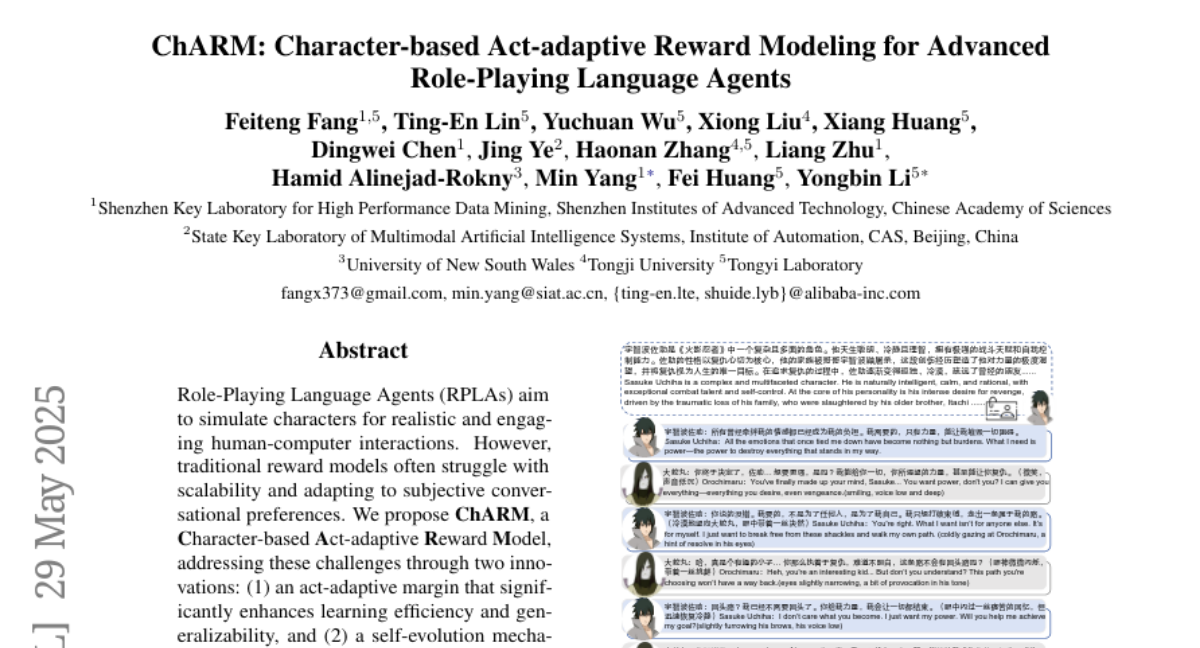

This paper introduces ChARM (Character-based Act-adaptive Reward Modeling), a reward model that helps AI models play specific characters more convincingly in role-playing situations. It learns what makes a character's responses believable and rewards models for staying in character.

What's the problem?

The problem is that language models often struggle to keep their responses consistent with the personality and behavior of the character they are supposed to play, especially when few labeled examples are available to learn from.

What's the solution?

The researchers designed ChARM around character traits. During preference learning it uses an act-adaptive margin, adjusting its rewards according to how well a response stays true to the character's role. It also uses a method called self-evolution, in which the model keeps improving by learning from unlabeled data instead of relying only on labeled examples.
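Self-evolution with unlabeled data is often built on a pseudo-labeling step: the current reward model scores pairs of candidate responses, and only pairs it is confident about are kept as new training preferences. The sketch below shows that generic, confidence-filtered self-training step under that assumption. It is not ChARM's actual procedure, and the names `pseudo_label`, `reward`, and `threshold` are hypothetical.

```python
from typing import Callable, List, Tuple

# Hypothetical types: an unlabeled item is (context, response_a, response_b);
# a pseudo-labeled item is (context, chosen, rejected).
Unlabeled = Tuple[str, str, str]
Labeled = Tuple[str, str, str]


def pseudo_label(
    pairs: List[Unlabeled],
    reward: Callable[[str, str], float],
    threshold: float,
) -> List[Labeled]:
    """Turn unlabeled response pairs into preference pairs using the
    current reward model, keeping only pairs whose score gap exceeds
    `threshold` (a generic self-training filter, assumed for illustration)."""
    labeled: List[Labeled] = []
    for ctx, a, b in pairs:
        ra, rb = reward(ctx, a), reward(ctx, b)
        if abs(ra - rb) <= threshold:
            continue  # gap too small: the model is not confident, skip this pair
        chosen, rejected = (a, b) if ra > rb else (b, a)
        labeled.append((ctx, chosen, rejected))
    return labeled


if __name__ == "__main__":
    # Toy stand-in for a reward model: longer responses score higher.
    toy_reward = lambda ctx, resp: float(len(resp))
    data = [("hi", "short", "a much longer reply"), ("hi", "abc", "abd")]
    print(pseudo_label(data, toy_reward, threshold=2.0))
```

In a full loop, the returned pairs would be added to the training set, the reward model retrained, and the process repeated; the threshold trades off how much unlabeled data is used against how noisy the pseudo-labels are.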

Why does it matter?

This is important because it makes AI role-playing agents much more convincing and reliable, which is useful for things like interactive games, virtual assistants, and educational tools where believable character behavior really matters.

Abstract

ChARM, a character-focused adaptive reward model, improves preference learning for role-playing language agents by using an act-adaptive margin and self-evolution with unlabeled data, achieving superior results on dedicated benchmarks.
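Margin-based preference learning typically trains a reward model with a pairwise Bradley-Terry loss, where a margin $m$ demands that the chosen response outscore the rejected one by at least that amount: $\mathcal{L} = -\log \sigma(r_{\text{chosen}} - r_{\text{rejected}} - m)$. The sketch below implements that generic loss; how ChARM computes its act-adaptive margin is not described in this summary, so the `margin` argument is simply passed in, and the function name is hypothetical.

```python
import math


def margin_bt_loss(r_chosen: float, r_rejected: float, margin: float) -> float:
    """Pairwise Bradley-Terry loss with an additive margin:
    -log(sigmoid(r_chosen - r_rejected - margin)).
    In an act-adaptive scheme, `margin` would vary per example (assumed here)."""
    z = r_chosen - r_rejected - margin
    # Numerically stable -log(sigmoid(z)) = log(1 + exp(-z)).
    if z >= 0:
        return math.log1p(math.exp(-z))
    return -z + math.log1p(math.exp(z))


if __name__ == "__main__":
    # Same reward gap, larger margin -> larger loss (stronger push apart).
    print(margin_bt_loss(2.0, 0.0, margin=0.0))
    print(margin_bt_loss(2.0, 0.0, margin=1.0))
```

A larger margin makes the loss penalize a given reward gap more heavily, so adapting it per example lets training push harder on pairs where the preferred response should clearly win.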