ChartQAPro: A More Diverse and Challenging Benchmark for Chart Question Answering

Ahmed Masry, Mohammed Saidul Islam, Mahir Ahmed, Aayush Bajaj, Firoz Kabir, Aaryaman Kartha, Md Tahmid Rahman Laskar, Mizanur Rahman, Shadikur Rahman, Mehrad Shahmohammadi, Megh Thakkar, Md Rizwan Parvez, Enamul Hoque, Shafiq Joty

2025-04-18

Summary

This paper introduces ChartQAPro, a new and more challenging benchmark for measuring how well AI models can understand and answer questions about charts and graphs.

What's the problem?

Most existing benchmarks for AI chart understanding are too simple or not diverse enough. As a result, they do not reveal how well these models handle complex, real-world charts. This makes it hard to judge whether an AI system is actually ready to help with tasks like data analysis or scientific research.

What's the solution?

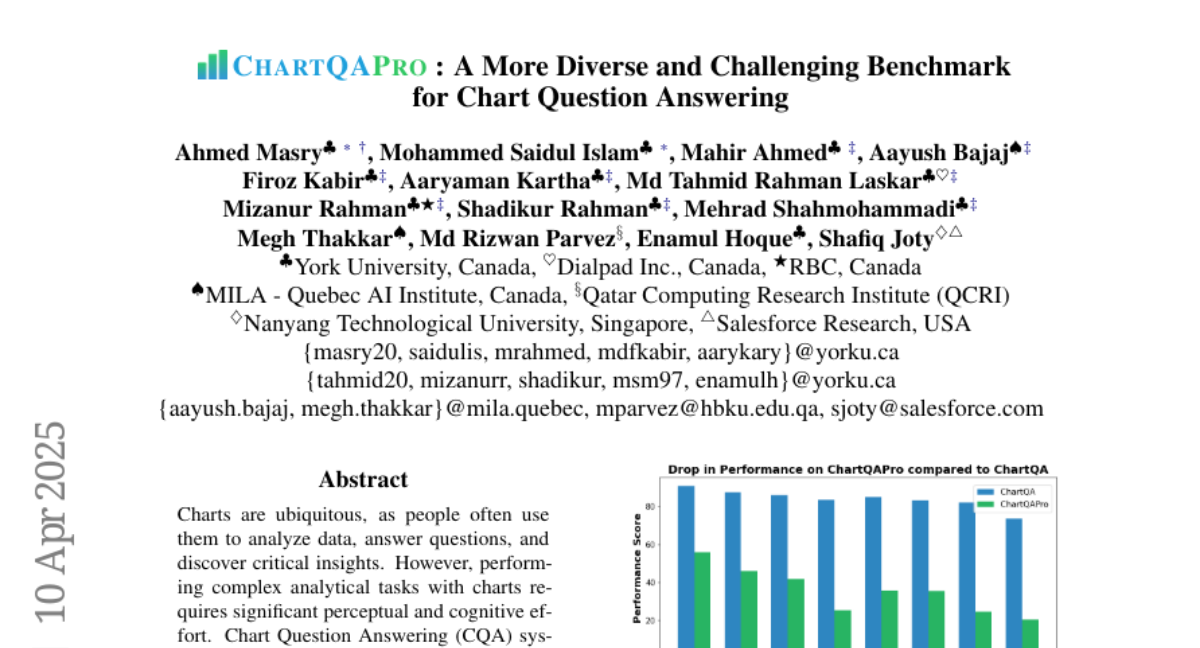

The researchers created ChartQAPro, which covers a much wider variety of charts and includes more challenging questions. When they evaluated current AI models on this new benchmark, the models performed substantially worse than on older, easier benchmarks, exposing clear weaknesses.

Why does it matter?

This matters because it pushes AI researchers to improve their models so they can handle real-life data and help people understand complex information from charts, which is important in fields like business, science, and education.

Abstract

This work introduces ChartQAPro, a diverse benchmark for evaluating large vision-language models on chart reasoning. It shows that these models perform significantly worse on ChartQAPro than on existing benchmarks.