ChatAnyone: Stylized Real-time Portrait Video Generation with Hierarchical Motion Diffusion Model

Jinwei Qi, Chaonan Ji, Sheng Xu, Peng Zhang, Bang Zhang, Liefeng Bo

2025-03-28

Summary

This paper is about making video chats more realistic and expressive by creating AI that can generate real-time videos of people with natural-looking movements and facial expressions.

What's the problem?

Current video chat technologies often struggle to create realistic and engaging experiences, especially when it comes to synchronizing head and body movements and capturing fine-grained facial expressions.

What's the solution?

The researchers developed a new AI system that uses motion diffusion models to generate diverse facial expressions and synchronize head and body movements, and they also added explicit hand control signals to generate more detailed hand movements.

Why it matters?

This work matters because it can lead to more immersive and engaging video chat experiences, making it feel more like you're talking to someone in person.

Abstract

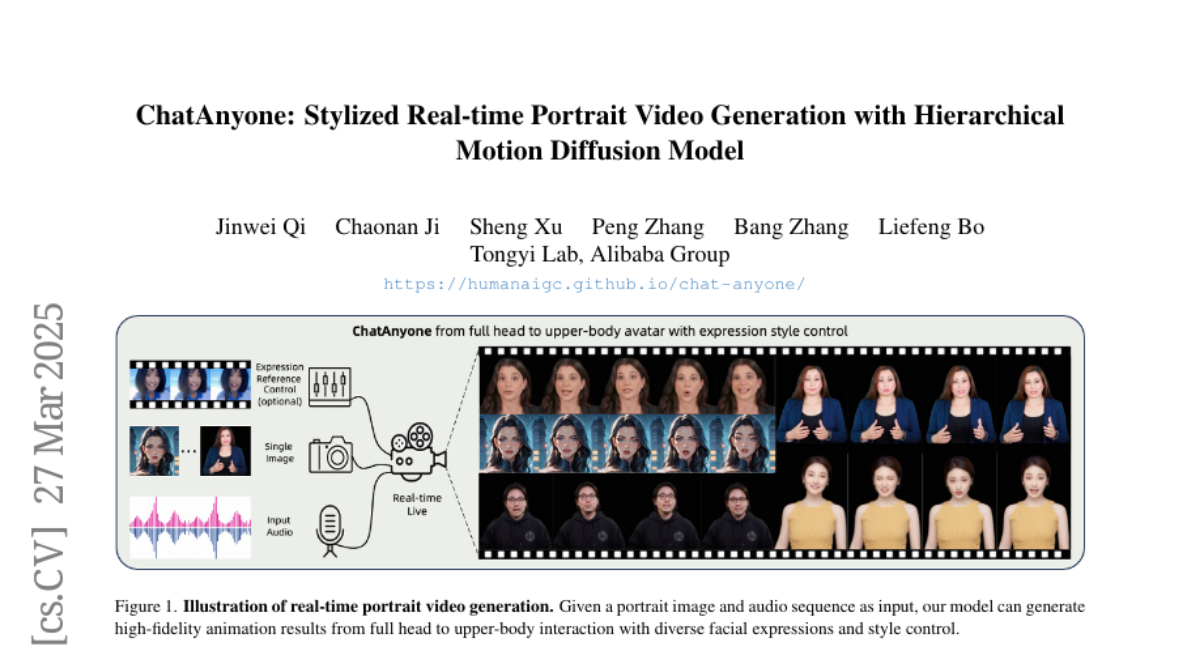

Real-time interactive video-chat portraits have been increasingly recognized as the future trend, particularly due to the remarkable progress made in text and voice chat technologies. However, existing methods primarily focus on real-time generation of head movements, but struggle to produce synchronized body motions that match these head actions. Additionally, achieving fine-grained control over the speaking style and nuances of facial expressions remains a challenge. To address these limitations, we introduce a novel framework for stylized real-time portrait video generation, enabling expressive and flexible video chat that extends from talking head to upper-body interaction. Our approach consists of the following two stages. The first stage involves efficient hierarchical motion diffusion models, that take both explicit and implicit motion representations into account based on audio inputs, which can generate a diverse range of facial expressions with stylistic control and synchronization between head and body movements. The second stage aims to generate portrait video featuring upper-body movements, including hand gestures. We inject explicit hand control signals into the generator to produce more detailed hand movements, and further perform face refinement to enhance the overall realism and expressiveness of the portrait video. Additionally, our approach supports efficient and continuous generation of upper-body portrait video in maximum 512 * 768 resolution at up to 30fps on 4090 GPU, supporting interactive video-chat in real-time. Experimental results demonstrate the capability of our approach to produce portrait videos with rich expressiveness and natural upper-body movements.