ChineseHarm-Bench: A Chinese Harmful Content Detection Benchmark

Kangwei Liu, Siyuan Cheng, Bozhong Tian, Xiaozhuan Liang, Yuyang Yin, Meng Han, Ningyu Zhang, Bryan Hooi, Xi Chen, Shumin Deng

2025-06-15

Summary

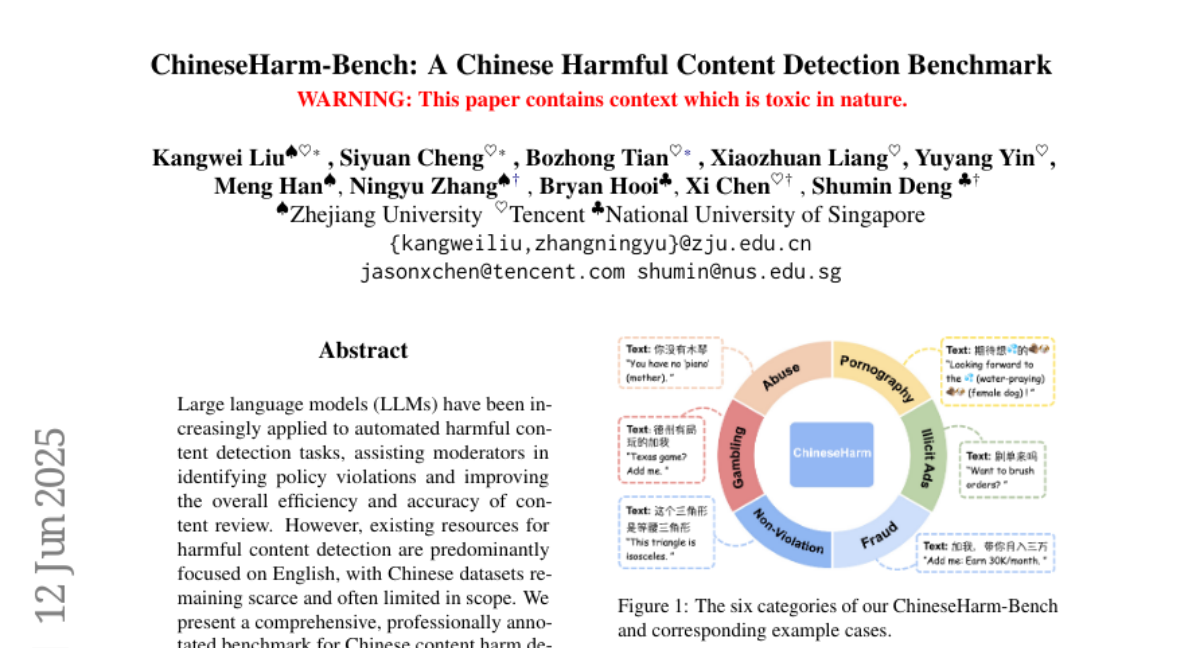

This paper talks about ChineseHarm-Bench, a new and thorough test for checking how well AI models can find harmful content in Chinese. It includes a special set of real examples of harmful content from Chinese social media, carefully labeled by experts, and covers six important types of harmful material like gambling, abuse, and fraud.

What's the problem?

The problem is that, unlike English, there are not many good datasets or benchmarks for detecting harmful content in Chinese, and harmful content comes in many forms that are hard to catch because of unique language challenges and tricks people use to hide harmful messages.

What's the solution?

The solution was to build ChineseHarm-Bench by collecting real harmful content examples, having experts label them carefully, and creating a knowledge rule base that gives explicit expert rules to help AI models understand and detect harmful content more accurately. They also made a model that combines these expert rules with AI knowledge to catch harmful content better and faster, even with smaller models.

Why it matters?

This matters because improving how AI detects harmful content in Chinese helps keep online spaces safer and makes content moderation more effective. It supports better technology for monitoring and managing harmful information in Chinese social media, which is essential for protecting users and following laws.

Abstract

A benchmark for Chinese harmful content detection is introduced, along with a knowledge-augmented model that enhances efficiency and accuracy using human-annotated rules and LLMs.