CINEMA: Coherent Multi-Subject Video Generation via MLLM-Based Guidance

Yufan Deng, Xun Guo, Yizhi Wang, Jacob Zhiyuan Fang, Angtian Wang, Shenghai Yuan, Yiding Yang, Bo Liu, Haibin Huang, Chongyang Ma

2025-03-14

Summary

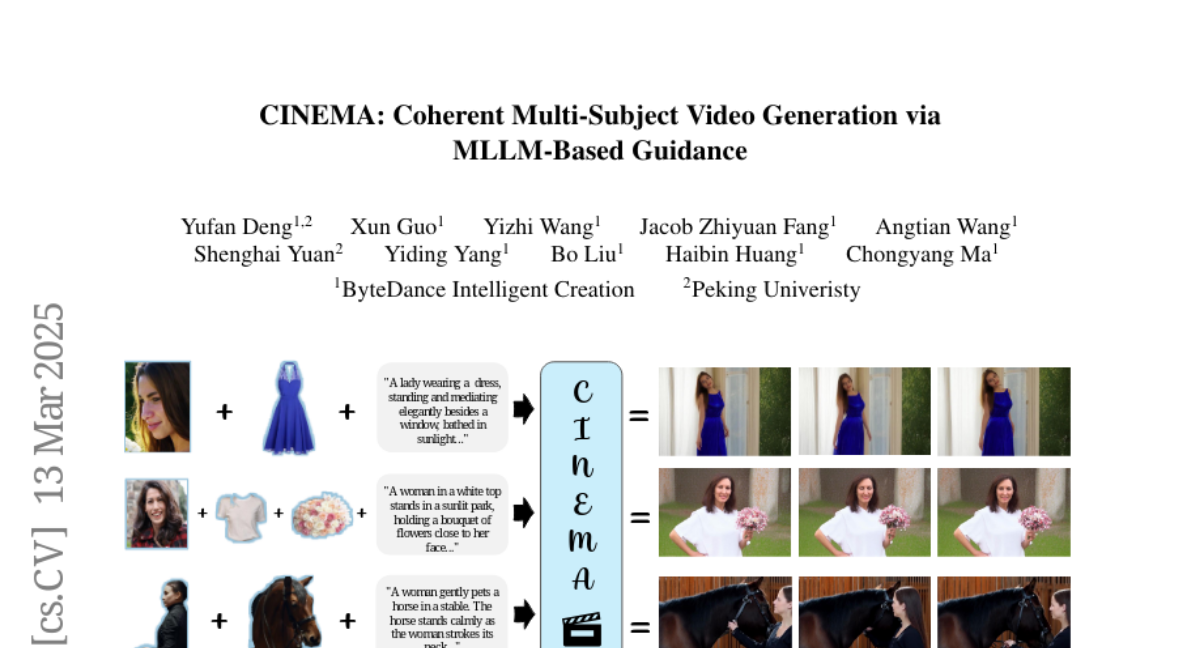

This paper introduces CINEMA, a method that uses a Multimodal Large Language Model (MLLM) to generate videos featuring multiple subjects while keeping those subjects consistent and the video coherent.

What's the problem?

Existing video generation models struggle to produce videos that feature multiple distinct subjects while keeping each subject's appearance and the relationships between subjects consistent throughout. Current methods tie each subject's reference image to a keyword in the text prompt, which introduces ambiguity and limits how well subject interactions can be modeled.

What's the solution?

The researchers developed CINEMA, which uses an MLLM to interpret the relationships between the subjects without requiring explicit correspondences between subject images and text entities. This reduces annotation effort and makes the approach more scalable and flexible: it can be trained on large, diverse datasets and conditioned on varying numbers of subjects.

Why does it matter?

This work matters because it improves the quality and coherence of multi-character video generation, opening up new possibilities for storytelling, interactive media, and personalized video content creation.

Abstract

Video generation has witnessed remarkable progress with the advent of deep generative models, particularly diffusion models. While existing methods excel in generating high-quality videos from text prompts or single images, personalized multi-subject video generation remains a largely unexplored challenge. This task involves synthesizing videos that incorporate multiple distinct subjects, each defined by separate reference images, while ensuring temporal and spatial consistency. Current approaches primarily rely on mapping subject images to keywords in text prompts, which introduces ambiguity and limits their ability to model subject relationships effectively. In this paper, we propose CINEMA, a novel framework for coherent multi-subject video generation that leverages a Multimodal Large Language Model (MLLM). Our approach eliminates the need for explicit correspondences between subject images and text entities, mitigating ambiguity and reducing annotation effort. By leveraging an MLLM to interpret subject relationships, our method facilitates scalability, enabling the use of large and diverse datasets for training. Furthermore, our framework can be conditioned on varying numbers of subjects, offering greater flexibility in personalized content creation. Through extensive evaluations, we demonstrate that our approach significantly improves subject consistency and overall video coherence, paving the way for advanced applications in storytelling, interactive media, and personalized video generation.